Group work to get acquainted with Matlab is OK, and asking and answering general questions about how things work is OK. Otherwise this is an individual project.

Turn in (to Blackboard) your code, README, and PDF (not .doc or anything else) writeup. Don't use rar, either, please, as always.

As usual the writeup should have plenty of annotated output to convince us your code runs and to demonstrate its power, range, limitations, cutenesses, etc. Also your writeup as always should explain exactly what problem you're solving, what goes beyond the assignment, what experiences you had that you think we should know about, etc.

Get acquainted with the course "textbook" refs, the course pages, tutorials, e-references, reserve books, whatever resources you are going to use to learn and use Matlab. Get onto Matlab and start typing: especially check out the on-command-line help feature.

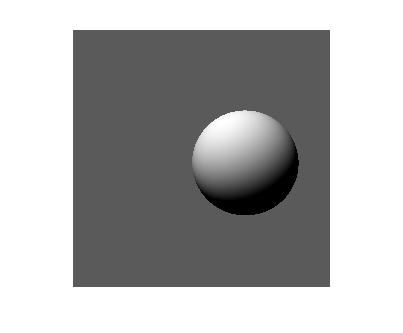

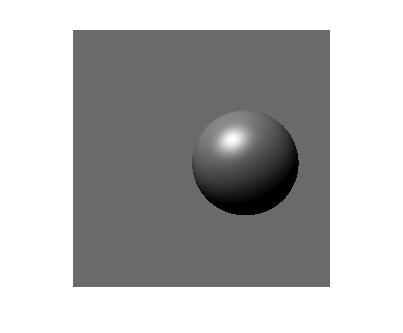

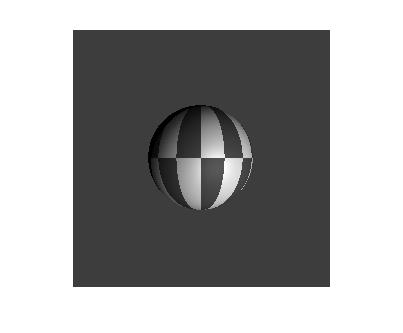

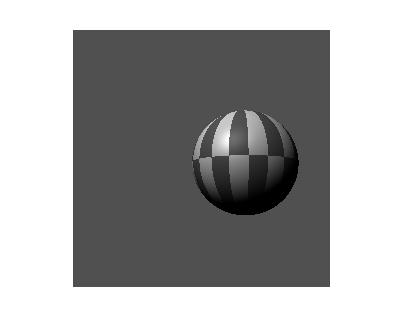

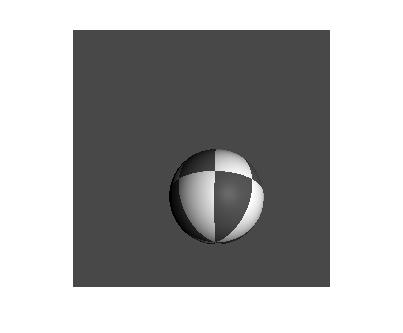

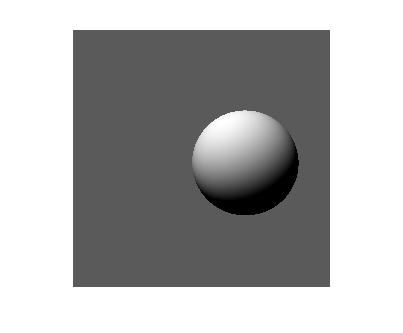

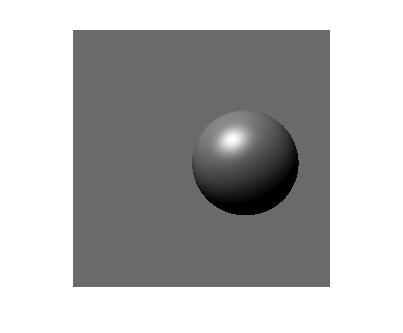

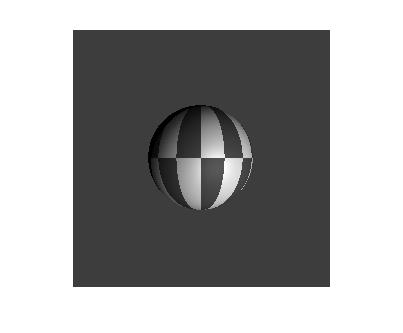

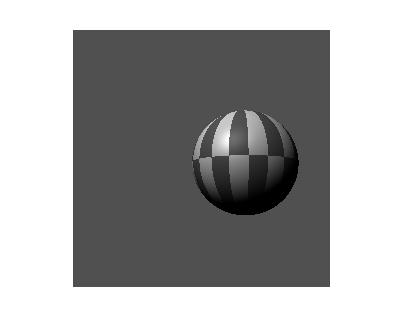

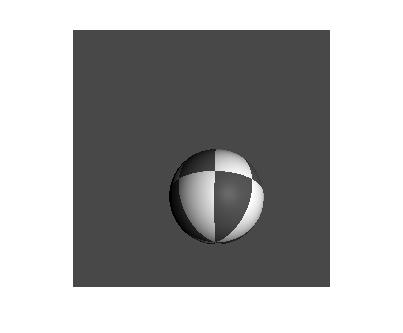

Your goal is to write a raytracer that can produce images like the ones above (well, probably better): these are "saved-as" .jpg directly from Matlab after >> imshow(image_array);

You get the idea. There's this greyscale plastic-looking beachball with controllable appearance parameters like how many stripes, how mirror-like is its surface, etc. There's a light source and a movable, variable-resolution camera.

Understand this, though. Your goal is not just to make images. The goal is to write a raytracer. Believe it or not, I don't care if I never see another image of a ping-pong ball again (yep, even yours, sorry).

In short and as usual the results are not the point: the process is the point. Matlab has all sorts of functions to produce nicely rendered graphics, manipulate the camera, solve equations, etc. For example: campan, camorbit, light, roots. If you really get interested in Matlab there's extra credit for you if you want to pursue the minimal-effort solution to displaying a patterned sphere (see below). BUT extra credit implies you have some to start with, and you won't get any credit-credit for using the functions listed above or any like them. To repeat: the output is not the point of this assignment. The point is to formalize the physics of image formation and to implement it yourself. Not getting this point means not getting grade points. Still, if you want to exercise Matlab functionality, extra credit and an honor roll await you...

You probably should also look at More Math. Details for this assignment. I might use upper and lower case variously for vectors (points in 2 or 3D), coordinates or vector components, and axes. The semantics should be clear from context.

Coordinates: Most coordinates are physical 3-D ones in the LAB system, whose X axis is up, Y is right, and Z is away from you (into image, or out along the camera's line of sight). 2-D image coordinates are just the indices of pixels in a physical, "retinal" array of detectors.

Directions: There are a few directions to keep straight: I represent most of them as unit vectors, all expressed in LAB. They are: the normal vector at a point on the surface of the viewed object (for me a sphere), the direction of the light source, the direction of the viewpoint as seen from a point on the object surface, and the direction from the viewpoint through a detector (imaging) point on the retina.

My model of light was a "point source at infinity", so there is only one direction to the light source. See extra credit if you want to move beyond this.

Camera, Tracking, Panning, and Tilting: We want to translate the camera in 3-D and rotate it. I implemented 3-D translation plus panning and tilting (rotation about the vertical (head-shaking) and left-right (head-nodding) axes).

I put the original (before any camera motion) viewpoint at the origin of LAB [0,0,0], and had a focal length of 10 (millimeters, say, but units are arbitrary for us). With the viewing direction out along the Z axis, the retina is in the z=focallen plane. The retina (or CCD array) is a 2-D array of 3-D imaging or detector points (all in a "retinal plane", of course) through which rays are projected from the viewpoint out into the world and where computed brightnesses out in the world are 'captured' to produce pixels on the image. This is the 'pinhole camera' model: no depth of field effects for instance, just pure perspective projection. I arbitrarily used a physical retinal size of 10 mm, and various image resolutions, or numbers of image pixels; sizes of about 100 to 256 pixels square are shown in the images above. The (x,y,z) retina point corresponding to the pixel at (i,j) is basically (i*k, j*k, focallen), where k is a constant converting pixels on the image to millimeters on the retina. But: if you want a non-square aspect ratio (non-uniform resolution in x and y) you'll need two ratios, AND I like to have the viewing direction pierce the center of the image (you don't have to), so I also subtracted half the image size from x and y.

If the image array is N x N, my retina is N x N x 3. That is, I used a 3-D array for the retina (a 2-D array of 3-D image points), but there's doubtless a better way, say with structures.
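Here is a minimal sketch of how such a retina might be built, assuming a square image and the pixel-to-millimeter scaling described above. The names N, retsize, focallen, k, c and retina are illustrative, not the ones used in my code, and mapping the row index to x and the column index to y is just one possible choice.

```matlab
% Sketch: build an N x N x 3 retina of 3-D detector points (all names are illustrative).
N        = 5;                      % image resolution in pixels (tiny, for inspection)
retsize  = 10;                     % physical retina width, in mm
focallen = 10;                     % focal length, in mm
k        = retsize / N;            % mm per pixel

retina = zeros(N, N, 3);
c = (N + 1) / 2;                   % pixel index the viewing direction passes through
for i = 1:N
    for j = 1:N
        % subtract the centre so the line of sight pierces the middle of the image
        retina(i, j, :) = [(i - c) * k, (j - c) * k, focallen];
    end
end

center = squeeze(retina(3, 3, :))';   % middle pixel: lies on the Z axis
```

With these numbers the middle pixel sits at (0, 0, 10) and the corner pixel (1,1) at (-4, -4, 10). For a non-square aspect ratio you would use two different values of k.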

Your retina array models a physical one that you can rotate (pan and tilt) around the origin of LAB (which is also the original viewpoint), using 3-D rotation matrices around the X and Y axes. Then you can translate it in (X,Y,Z) by choosing a new 3-D viewpoint (vector) and adding it to the original viewpoint and to the (rotated) elements of the retina. At this point your image-making code should work as before, but from a new camera position.
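The rotate-then-translate step could look something like the following sketch. It assumes pan is a rotation about X (the vertical axis in LAB) and tilt a rotation about Y, applies pan before tilt (the other order is just as legitimate), and uses made-up names (pan, tilt, Rx, Ry, newview, retina2).

```matlab
% Sketch: pan, tilt, then translate a retina (names and angle conventions are assumptions).
N = 3; focallen = 10; k = 10 / N; c = (N + 1) / 2;
[I, J] = ndgrid(1:N, 1:N);
retina = cat(3, (I - c) * k, (J - c) * k, focallen * ones(N));

pan  = pi / 2;                     % rotation about X, the vertical axis in LAB
tilt = 0;                          % rotation about Y, the left-right axis in LAB
Rx = [1 0 0; 0 cos(pan) -sin(pan); 0 sin(pan) cos(pan)];
Ry = [cos(tilt) 0 sin(tilt); 0 1 0; -sin(tilt) 0 cos(tilt)];
R  = Ry * Rx;                      % pan first, then tilt

P = reshape(retina, N * N, 3);     % one retinal point per row
P = P * R';                        % rotate every point about the LAB origin
newview = [1 2 3];                 % new viewpoint (translation of the whole camera)
P = P + repmat(newview, N * N, 1); % translate the rotated retina
viewpoint = [0 0 0] + newview;     % translate the viewpoint the same way
retina2 = reshape(P, N, N, 3);

axis_dir = squeeze(retina2(2, 2, :))' - viewpoint;   % rotated line of sight
```

A quarter-turn pan swings the line of sight from +Z to -Y here, which is a handy check on your sign conventions before trying small angles.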

Now if you want to move the camera and THEN rotate it, or do a sequence of tracks and pans and tilts, you need a little more CoordSys-hacking technique than I want to get into now.

Things can be confusing since the coordinates Matlab uses to display may not be aimed or even named right, pan and tilt may not work the way you think, etc. (There ARE ways to tell Matlab to use different display coordinates, though: see axis properties in the graphics commands, esp. XDir, YDir, ZDir.) Use small rotations at first. To keep your target in view, it may help to recall the definition of a radian.

Ray-Casting: I stuck to the absolute simplest geometrical figure for my domain: the sphere. If you want to do a spheroid, cube, tetrahedron, cylinder, go for it or we can talk to get you started.

We need to project a ray from the viewpoint V out through the 3-D point X, the location of a pixel (image-coordinate, retinal point) representing the 2-D image pixel at (row, column) in the image (which has maybe been rotated and translated to be anywhere in space). The line from V through X intersects the sphere (or not). Work it out or try something like Line-Sphere Intersection. I think this is a slightly confusing article, with x being used two ways and I think mislabeled the 2nd time. I think "origin of line" should be "a point on the line", since we know the origin is (0,0,0). You actually want the point on the sphere that the ray intersects, so in terms of the Wiki. article you need to compute the point(s)

The reason the sphere is the simplest shape for us to use is its continuous surface (not six different finite faces as for a cube) and the fact that if it is considered to be at the origin and of unit radius, a point on it is also the unit normal vector at that point, which is just what we need for computing image brightness.
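As a rough sketch of the ray-casting step, assuming a sphere with centre C and radius r (both illustrative values here), you can substitute the ray V + t*d into the sphere equation and solve the resulting quadratic in t. The variable names are hypothetical.

```matlab
% Sketch: nearest intersection of the ray from V through X with a sphere.
V = [0 0 0];                       % viewpoint
X = [0 0 10];                      % retinal point the ray passes through
C = [0 0 50];                      % sphere centre (illustrative)
r = 5;                             % sphere radius (illustrative)

d  = (X - V) / norm(X - V);        % unit ray direction
oc = V - C;
b  = dot(d, oc);
disc = b^2 - (dot(oc, oc) - r^2);  % discriminant of t^2 + 2*b*t + (|oc|^2 - r^2) = 0

if disc < 0
    hit = [NaN NaN NaN];           % ray misses the sphere
    n   = [NaN NaN NaN];
else
    t   = -b - sqrt(disc);         % smaller root = nearer intersection
    hit = V + t * d;               % 3-D point on the sphere
    n   = (hit - C) / r;           % unit surface normal at that point
end
```

For this head-on ray the hit is at (0, 0, 45) with normal (0, 0, -1). The sketch does not check that t is positive, so a sphere behind the camera would need an extra test.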

Reflectance Geometry: For a Lambertian (matte, non-specular) surface, which you may want to try first, the observed brightness looks like (n-vec ⋅ l-vec)*a. That is the dot product of the surface normal and the light-source direction unit vectors, multiplied by the albedo of the surface at that point. For me the albedo is determined by the paint job I gave the beach ball with those little segments. I started out with it constant for debugging. I just set the albedo to one of two values depending on values of two of the components (say x and y, but I forget) of the (x,y,z) point on the sphere (or better, normalize so you get unit vectors and can predict that the components will all lie between -1 and 1).
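A minimal sketch of the Lambertian case with a two-tone paint job follows. The stripe rule (angle of the x and y components of the normal, chopped into nstripes wedges) and the two albedo values are assumptions for illustration, not necessarily the pattern in my images.

```matlab
% Sketch: Lambertian brightness with a two-tone striped albedo (pattern is an assumption).
n = [0.48 0.36 -0.8];              % unit surface normal at the visible point
l = [0 0 -1];                      % unit direction towards the light
nstripes = 8;                      % number of stripes around the ball

ang    = atan2(n(2), n(1)) + pi;   % angle of (x,y) components, in [0, 2*pi]
stripe = mod(floor(ang / (2 * pi / nstripes)), 2);
if stripe == 0
    a = 1.0;                       % light paint
else
    a = 0.5;                       % dark paint
end

cosi = dot(n, l);                  % cosine of the incidence angle
if cosi < 0
    brightness = 0.0;              % surface faces away from the light
else
    brightness = a * cosi;
end
```

Setting a to a constant first, as suggested above, makes it easier to check the shading before the pattern goes on.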

For a surface with both Lambertian and specular reflection (like most), I used the following, which is formally known as a "phenomenological model", or complete hack. (For a more up-to-date approach, see any graphics book).

Assume we know for a point on the sphere:

l-vec, the direction (unit vector) out of the surface pointing in the light-direction. NOTE: with our point source at infinity, this is still the same single light-direction we always use: it's the same everywhere.

n-vec, the surface normal.

v-vec, the direction to the camera origin (focal point) from the point on the sphere.

These vectors determine some angles between vectors called i, the incidence angle; e, the emittance angle; and g, the phase angle. All we need is some cosines of these angles, which are just the dot products of the directions:

cos(i) = n-vec ⋅ l-vec (our old friend used for Lambertian reflectance earlier)

cos(e) = n-vec ⋅ v-vec

cos(g) = v-vec ⋅ l-vec

It turns out the cosine of the angle between the predicted specular direction (maximum mirror reflection direction) and the viewing direction is

C = 2 cos(i) cos(e) - cos(g).

A common graphics hack just exponentiates this cosine to make functions that have more or less reflectivity in the "mirror" (predicted specular) direction:

L(i,e,g) = s*C^n + (1-s)*cos(i);

the first term is the mirror-ness of the surface ('how much' is governed by s, 'how reflective' by n), and the second term is the Lambertian component. So if albedo is a, the brightness of that point on the sphere would be a*L(i,e,g). N.B. You should be careful not to use this formula (or the Lambertian one either) for points that are supposed to be self-shadowed, that is they are on the "dark side" of the sphere. In this case cos(i) is negative, and you should set the brightness to whatever shadow-brightness value you have chosen (mine is 0.0).
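The mixed specular-plus-Lambertian model might be coded along these lines. The exponent is called nexp here to avoid a clash with the normal n, and clamping C at zero before exponentiating is my assumption (a negative base with a non-integer exponent would otherwise give complex numbers); the parameter values are illustrative.

```matlab
% Sketch: phenomenological specular + Lambertian brightness (parameter values are illustrative).
n = [0 0 -1];                      % unit surface normal
l = [0.6 0 -0.8];                  % unit direction towards the light
v = [-0.6 0 -0.8];                 % unit direction towards the camera
s = 0.5;                           % how much of the surface response is mirror-like
nexp = 20;                         % how tight the highlight is
a = 1.0;                           % albedo at this point
shadow = 0.0;                      % brightness used on the dark side

cosi = dot(n, l);
cose = dot(n, v);
cosg = dot(v, l);

if cosi < 0
    brightness = shadow;           % self-shadowed point
else
    C = max(2 * cosi * cose - cosg, 0);   % cosine between mirror and view directions, clamped
    L = s * C^nexp + (1 - s) * cosi;
    brightness = a * L;
end
```

Here the camera sits exactly in the mirror direction, so C is 1 and the brightness is 0.5 + 0.5*0.8 = 0.9. Moving v away from the mirror direction makes the first term fall off quickly for large nexp.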

A good way to control a set of actions (pan, tilt, zoom (see below), make image, show image, etc) is a control loop in which your program asks you what you want to do and you supply a character, and there's a case statement for that command that then asks you for parameters, does the action, and loops to the top. That's how we did it in the 60's. Nowadays (and I bet Matlab supports it) there are nice windows with sliders and text fields and radio buttons and such. Do what's best for debugging -- no need to consider this a commercial product.

mat2gray and imshow are both in the Image Processing Toolbox, which may or may not be with the version of Matlab you are using (the Computer Science Department computers have it, ITS may not). You can get the same effect as imshow with the imwrite command (which has a lot of options and produces graphics files of various sorts) and maybe a shell escape to your favorite graphics-viewing utility on the computer you're using (on Linux mine would be display this year, part of the ImageMagick suite).

NOT that you should care, but maybe to demystify; my (handful of) m-files and scripts added up to 4350 bytes and used (pretty much, may have missed one or two) the following functions, constants, declarations, statement types, etc.

functions: *, -, + (matrix, scalar), / (scalar), :, realpow, max, norm, sqrt, min, cos, sin, mod, floor, atan2, isnan.

constants: pi, eps, NaN.

global, for, if, zeros, mat2gray, imshow.

Zoom camera function: nothing simpler: in the initial camera position, just adding some number to the Z-component of each retinal point "zooms in": it pushes the image frame away from the focal point, narrows the field of view (thus putting less of the scene onto the image, or magnifying it), reduces the stereo effects, etc. Likewise to zoom out you use a smaller f.
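To see how the field of view depends on focal length, here is a small sketch for the pinhole model, assuming the 10 mm retina mentioned earlier; the names f1, f2, fov1 and fov2 are illustrative.

```matlab
% Sketch: field of view versus focal length for a pinhole camera (values are illustrative).
retsize = 10;                      % physical retina width, mm
f1 = 10;                           % original focal length, mm
f2 = f1 + 10;                      % after adding 10 to the Z-component of every retinal point

fov1 = 2 * atan((retsize / 2) / f1) * 180 / pi;   % full viewing angle in degrees
fov2 = 2 * atan((retsize / 2) / f2) * 180 / pi;
```

Doubling the focal length takes the full viewing angle from about 53.13 degrees down to about 28.07 degrees, so the same pixels cover a smaller part of the scene.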

Fun albedo functions: come up with interesting functions of sphere coordinates for the albedo, and you may want to use other coordinates to describe "where a point is" on the sphere... like convert from the (x,y,z) representation to a (longitude, latitude) coordinate system or some other one, like an invertible projection onto the plane, as are used for maps. Should be lots of info on web, like spherical projections.
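One possible (longitude, latitude) albedo is sketched below. It assumes the point has already been shifted and scaled onto a unit sphere at the origin, takes X as the polar axis because X is up in LAB, and paints a checkerboard; the convention and the names lat, lon, csize and checker are all my choices for illustration.

```matlab
% Sketch: checkerboard albedo in (longitude, latitude); the convention is an assumption.
p = [0.5, 0.5, -sqrt(0.5)];        % point on a unit sphere centred at the origin (X is up)

lat = asin(p(1)) * 180 / pi;           % latitude in degrees, -90..90, from the equator
lon = atan2(p(2), p(3)) * 180 / pi;    % longitude in degrees, -180..180, about the X axis

csize   = 20;                          % checker size in degrees
checker = mod(floor(lat / csize) + floor(lon / csize), 2);
if checker == 0
    a = 1.0;                           % light square
else
    a = 0.5;                           % dark square
end
```

The squares get squeezed near the poles, which is exactly the sort of distortion that map projections try to manage.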

Orthographic projection. With a finite focal length you're creating perspective projection. If the viewpoint moves off to negative infinity, you project all the scene points with parallel rays pointing in the direction of the viewpoint. The difference between perspective and orthographic views of a sphere is very slight (might be fun to do some extreme examples: get the sphere very close to the image array, off axis, and you'll notice something). Perspective makes a more noticeable difference for objects with parallel lines, like cubes. Anyway, with parallel projection you can compute mass properties like volume, centroid, moments, etc. For instance, the approximate volume is the sum over all rays of the length of the ray inside the sphere (the difference between the two points where the ray pierces it) times the area of a pixel (the pixel-spacing squared, probably). Make sense? If you want, just do it.
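The volume estimate can be sketched as follows, assuming parallel rays along Z on a regular grid in x and y and a sphere centred on the Z axis. For a ray at (x, y) the two piercing points are at depths differing by 2*sqrt(r^2 - x^2 - y^2), which is what a ray-sphere intersection routine would return as the difference of its two roots; here that closed form is used directly to keep the sketch short.

```matlab
% Sketch: approximate sphere volume from parallel rays (grid and radius are illustrative).
r = 1;                             % sphere radius
h = 0.01;                          % pixel spacing (ray spacing) in x and y
[x, y] = meshgrid(-1.5:h:1.5, -1.5:h:1.5);

d2    = r^2 - x.^2 - y.^2;         % squared half-chord length; negative means the ray misses
chord = 2 * sqrt(max(d2, 0));      % length of each ray inside the sphere (0 for misses)
vol   = sum(chord(:)) * h^2;       % sum of chord length times pixel area

exact = 4 / 3 * pi * r^3;          % for comparison
```

With this spacing the estimate should land within about a percent of 4/3*pi*r^3; coarser grids show the approximation error more clearly.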

Multiple mutually-obscuring spheres per image (very easy; if this didn't occur to you already, shame!). (Non-intersecting) spheres are cool in I think a unique way. If you sort them by depth and then paint them into the image back-to-front, you'll get an accurate picture with no mistaken obscurations. Probably unique to spheres (e.g. NOT true for prolate or oblate spheroids or cereal boxes: I bet it also doesn't work for cubes). For general objects and spheres that intersect you need to paint the front-most brightness on a ray-per-ray basis: look up Z-buffering. Not too hard.
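The ray-per-ray approach might look like this sketch, which keeps the nearest positive intersection over a list of spheres for a single ray. The scene (centers, radii) and the names best_t and best_k are made up for illustration.

```matlab
% Sketch: find the front-most sphere along one ray (scene values are illustrative).
V = [0 0 0];                       % viewpoint
d = [0 0 1];                       % unit ray direction
centers = [0 0 50; 0 0 30; 20 0 30];   % one sphere centre per row
radii   = [5; 2; 2];

best_t = Inf;                      % distance to the nearest hit so far
best_k = 0;                        % index of the sphere that owns it (0 = background)
for k = 1:size(centers, 1)
    oc   = V - centers(k, :);
    b    = dot(d, oc);
    disc = b^2 - (dot(oc, oc) - radii(k)^2);
    if disc >= 0
        t = -b - sqrt(disc);       % nearer root for this sphere
        if t > 0 && t < best_t
            best_t = t;
            best_k = k;
        end
    end
end
```

Here the small sphere at depth 30 wins (hit at distance 28) even though the big one behind it is also pierced, and the third sphere is missed entirely. You would then shade using sphere best_k only.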

Object motion: let the sphere move (say rotate) independently of camera motion. Nothing deep, just co-ordinate hacking. Along with this: make a movie, not stills (Matlab can do this).

Other objects. Perhaps spheroids: need to be able to compute intersection points (makes finite planar faces a bit of a pain) and normals (sphere's easy, planes are easier...spheroids? Or look up superquadrics.).

Shadows. You can put in a ground plane and a back plane: easy to compute where ray intersects them. Also you can easily cast shadows on them from objects by casting rays from the light source (or from the light source direction if it's at infinity). The ones passing through the object wind up as shadow pixels where they hit the floor or wall.

Spheres casting shadows on other spheres: not hard --- need to treat the light direction as a viewing direction. If a surface point you find by ray-casting onto one surface can't "see" the light source since another object is in the way, it's in shadow. Same math, different semantics.
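A sketch of that shadow test, assuming a light at infinity in unit direction l and one candidate blocking sphere: cast a ray from the surface point towards the light and see whether the blocker lies ahead of it. The names C2, r2, tmin and in_shadow are hypothetical.

```matlab
% Sketch: is surface point p shadowed by another sphere? (all values are illustrative)
p  = [0 0 45];                     % surface point found by ray-casting from the camera
l  = [0 0 -1];                     % unit direction towards the light at infinity
C2 = [0 0 30];                     % centre of a second sphere that might block the light
r2 = 2;                            % its radius

oc   = p - C2;
b    = dot(l, oc);
disc = b^2 - (dot(oc, oc) - r2^2); % same quadratic as for camera rays
tmin = 1e-6;                       % ignore hits at (numerically) zero distance

in_shadow = false;
if disc >= 0
    t_far = -b + sqrt(disc);       % farther root; positive if the sphere lies ahead of p
    in_shadow = t_far > tmin;
end
```

In this setup the small sphere sits directly between p and the light, so in_shadow comes out true and p would get the shadow-brightness value.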

Close light source --- put light in some 3-D location, not at infinity. Have to re-compute its direction for each surface point, and its brightness varies by the inverse-square law too. Not hard to have a small number of such light sources.
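For a nearby point light, the per-point direction and inverse-square falloff could be sketched as below. The light position Lpos and the strength constant power are illustrative, and only the Lambertian term is shown.

```matlab
% Sketch: Lambertian shading from a nearby point light (values are illustrative).
p    = [0 0 45];                   % surface point
n    = [0 0 -1];                   % unit surface normal at p
Lpos = [0 0 35];                   % 3-D position of the light
power = 100;                       % source strength, in arbitrary units

toL  = Lpos - p;                   % vector from the surface point to the light
dist = norm(toL);
l    = toL / dist;                 % light direction, recomputed for every surface point

irradiance = power / dist^2;       % inverse-square falloff
brightness = irradiance * max(dot(n, l), 0);
```

With several lights you would repeat this for each one and add the contributions.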

Create faked object textures by lying about the surface normals. Perturb the actual surface normals randomly before computing reflectance to give rough appearance; use a system of regular perturbation 'bumps' to get more manufactured effect. A starting reference: bump mapping.

Texture mapping: It's easy to paint an image on your floor or wall. For more fun, wrap a flat image or pattern around your sphere -- not too obvious how to do this, eh? Need a graphics book or wiki or whatever. Or you can look up some better reflectance functions (there's lots) rather than our unphysical hack. Of course an interesting exercise would be to quantify the differences...

Probably too hard: extended light sources, colors, and mutual illumination.

Matlab!! Matlab has evolved to the point that you don't have to know anything but the name of the problem you're trying to solve. For instance it's trivial to take a photo and wrap it around (project it onto) a sphere and display that. So as of 2009 I'm starting the contest: do the minimal problem in the fewest lines of Matlab code. I'm guessing it's a small number of lines. Submit your entry along with the actual assignment.

Last Edition: 6/30/11, last micro-edit 12/3/14