Lambda Calculus 3

RECURSION AND ARITHMETIC

Freebie: More on Evaluation

Goal: Implement Imp. Lang. repetition with Func. Lang. recursion.

Subgoal: Paradoxical Combinator

Subgoal: Implement common mathematical operations.

Warning: Maybe a bit of a slog.

Alternative treatment of the Paradoxical Combinator.

Read the notes (Chap4.pdf in ``lambda calculus'' content on BB).

RECURSION AND ARITHMETIC

A redex, short for reducible expression, is a subexpression of a λ expression that can be reduced by one of the reduction rules: in particular, one in which a λ abstraction is applied to an argument. With more than one redex, there is more than one possible evaluation order, e.g. (+ (* 3 4) (* 7 6)).

Normal Order Evaluation

- Always reduce leftmost OUTERMOST redex

- Call by name, lazy evaluation

- Will terminate (find normal form) if possible

Applicative Order Evaluation

- Always reduce leftmost INNERMOST redex.

- Call by value, eager evaluation

- Evaluate actual parameters first: reduce function and argument before substituting argument into function body.

- May fail to find normal form even if it exists.

E.g.: Normal order:

(λy. λz. z) ((λx.(x x))(λx.(x x))) =>

λz.z

Since y does not appear in the body of the function (λy. λz. z) (aka 'select-second'), the entire argument ((λx.(x x))(λx.(x x))) is substituted for formal parameter y and then thrown away, unevaluated.

E.g.: Applicative order:

(λy. λz. z) ((λx.(x x))(λx.(x x))) =>

(λy. λz. z) ((λx.(x x))(λx.(x x))) =>...

Evaluation of innermost redex ((λx.(x x))(λx.(x x))) yields itself and evaluation does not terminate.
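The contrast can be imitated in Python, which is itself a strict (applicative order) language. This is only a sketch, not part of the notes: the names select_second, omega and eager_attempt are made up for illustration, and a zero-argument lambda (a thunk) stands in for "pass the argument unevaluated".

```python
# select-second: ignores its first argument and returns its second.
select_second = lambda y: lambda z: z

def omega():
    """(λx.(x x))(λx.(x x)): each step reproduces the same application."""
    return (lambda x: x(x))(lambda x: x(x))

# Normal-order flavour: the argument travels unevaluated inside a thunk,
# and select_second never forces it.
lazy_result = select_second(lambda: omega())("kept")

def eager_attempt():
    """Applicative-order flavour: Python evaluates omega() before the call."""
    try:
        return select_second(omega())("kept")
    except RecursionError:
        # Python reports the runaway reduction by exhausting its call stack.
        return "diverged"
```

In the lazy version the troublesome argument is thrown away without ever being run; in the eager version Python never gets as far as calling select_second.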

More...

Full beta reductions:

Any redex can be reduced at any time. This means essentially the

lack of any particular reduction strategy: with regard to

reducibility, "all bets are off".

Call by name: As normal order, but no reductions are performed inside

abstractions. For example λx.(λx.x)x is in normal form according

to this strategy, although it contains the redex (λx.x)x.

Call by value: Only the outermost redexes are reduced: a redex is

reduced only when its right hand side has reduced to a value

(variable or lambda abstraction).

Call by need:

As in normal

order, but function applications that would duplicate terms

instead name the argument, which is then reduced only "when it is

needed". Called in practical contexts "lazy evaluation". In

implementations this "name" takes the form of a pointer, with the

redex represented by a thunk (code to perform a delayed

computation). Implements a form of "memoization".
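A thunk with memoization can be sketched in a few lines of Python. The class name Thunk, its force method, and the helper expensive_argument are illustrative assumptions, not anything defined in the notes or readings.

```python
class Thunk:
    """A delayed computation that runs at most once (call by need)."""

    def __init__(self, compute):
        self._compute = compute   # zero-argument function: the suspended redex
        self._forced = False
        self._value = None

    def force(self):
        if not self._forced:
            self._value = self._compute()
            self._forced = True
            self._compute = None  # drop the code once the value is known
        return self._value

evaluations = []

def expensive_argument():
    evaluations.append("ran")     # record each real evaluation
    return 21

# Like (λx. x + x) applied to a suspended argument:
# x is used twice but computed only once.
double = lambda x: x.force() + x.force()
result = double(Thunk(expensive_argument))

# A function that ignores its argument never runs the computation at all.
ignored = (lambda x: "constant")(Thunk(expensive_argument))
```

Plain call by name would have run expensive_argument twice inside double; the remembered value is what the notes call a form of memoization.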

Most

programming languages (including Lisp, ML and imperative languages

like C and Java) are described as "strict", meaning that functions

applied to non-normalising arguments are non-normalising. This is

done essentially using applicative order, call by value reduction

(see below), but usually called "eager evaluation".

Applicative order is not a normalising strategy. The usual

counterexample is as follows: define Ω = ωω where ω = λx.xx. This

entire expression contains only one redex, namely the whole

expression; its reduct is again Ω. Since this is the only available

reduction, Ω has no normal form (under any evaluation strategy). Using

applicative order, the expression select-first identity Ω, that is, (λx.λy.x) (λx.x) Ω, is reduced by first reducing Ω to normal form (since arguments are reduced before a function is applied to them), but since Ω has no normal form, applicative order fails to find a normal form for the whole expression (often written KIΩ, with K = select-first and I = identity).

In contrast, normal order is so called because it always finds a

normalising reduction, if one exists. In the above example,

select-first identity Ω

reduces under normal order to the first argument, identity, which is

a normal form. A drawback is that

redexes in the arguments may be copied, resulting in duplicated

computation (for example, (λx.xx) ((λx.x)y) reduces to ((λx.x)y)

((λx.x)y) using this strategy; now there are two redexes, so full

evaluation needs two more steps, but if the argument had been reduced

first, there would now be none).

REPETITION AND RECURSION

- Bounded repetition carries out something a fixed number of times (e.g. a for statement).

- Unbounded repetition does something until some condition is met; we don't know in advance how many iterations that might take (needed for unknown lengths, nesting...).

- ILs usually use for for bounded repetition, and while or repeat for unbounded.

- ILs usually allow recursion too, of course.

- FLs use nested function calls (recursion). Repetition involves deciding whether or not to do another layer of function call nesting. The repetition function is defined in terms of itself, with a stopping condition (base case) that checks for the end of a nested or linear object sequence, say.

- Primitive recursion implements bounded repetition: the number of repetitions is known in advance. In general recursion it's not.
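To make the bounded/unbounded distinction concrete, here is a small Python sketch. The examples (factorial and Collatz step counting) and all function names are chosen for illustration only; they do not come from the notes. Both Collatz versions assume n >= 1.

```python
def factorial_loop(n):
    """Bounded repetition: exactly n iterations, known before the loop starts."""
    product = 1
    for i in range(1, n + 1):
        product *= i
    return product

def factorial_rec(n):
    """The same bounded repetition written as recursion with a base case."""
    return 1 if n == 0 else n * factorial_rec(n - 1)

def collatz_steps_loop(n):
    """Unbounded repetition: keep going until a condition is met."""
    steps = 0
    while n != 1:
        n = n // 2 if n % 2 == 0 else 3 * n + 1
        steps += 1
    return steps

def collatz_steps_rec(n):
    """The same unbounded repetition written as recursion."""
    if n == 1:                      # stopping condition (base case)
        return 0
    return 1 + collatz_steps_rec(n // 2 if n % 2 == 0 else 3 * n + 1)
```

In both recursive versions the "loop" is just the decision whether to nest one more function call.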

RECURSION THROUGH DEFINITIONS -- OOPS!

We can't quite define recursion with the mechanisms we have so far.

But why not just use the name from the left side of a definition on its right side?

def add x y =

if iszero y

then x

else add (succ x) (pred y)

Here we are hoping for (and in fact we'll soon make) something like:

add one two => ... =>

add (succ one) (pred two) => ... =>

add (succ (succ one)) (pred (pred two)) => ... =>

(succ (succ one)) ==

three

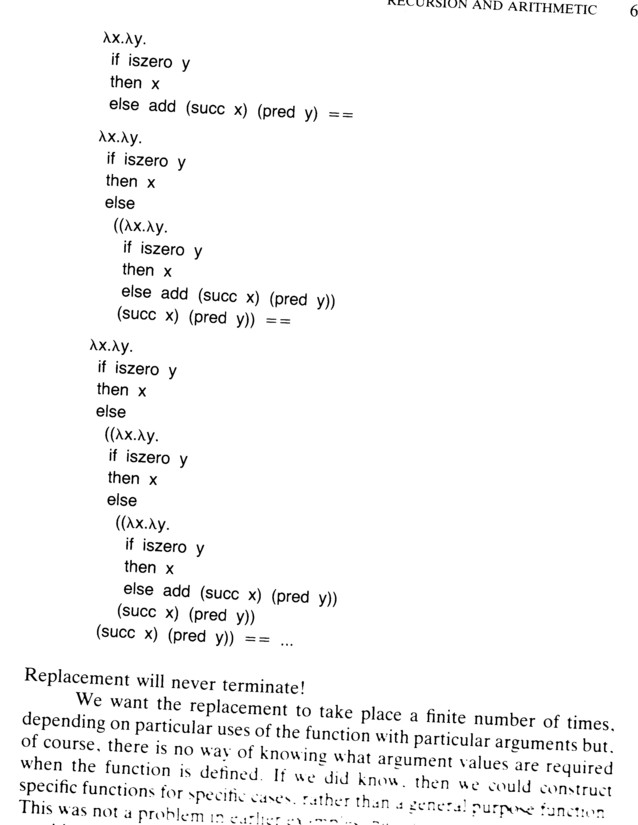

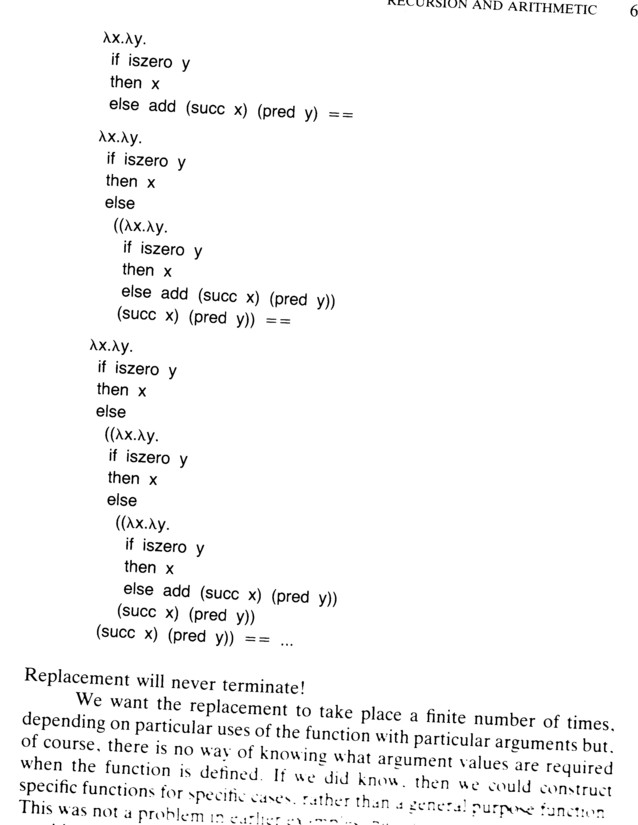

BUT... INFINITE RECURSION!

In Chapter 2 we required that all names in expressions be

replaced by their definitions before the expression is evaluated.

In our simple attempt above, replacement never terminates.

Problem: need recursion termination that depends on particular function arguments, which we don't know at definition time (``compile time''), e.g. stop calling yourself for the base case (n = 0 in n!, say).

Must find a way to delay the repetitive use of the function until it is actually required (at ``run time'').

Recall our attempt:

def add x y =

if iszero y

then x

else add (succ x) (pred y)

But we can't evaluate it... it takes off and never comes back.

Note here how we are using our shiny new syntax with ==. From now on we can pick the syntactic level that illustrates our issues, and not descend to λs and ()s unless it serves a purpose.

PASSING A FUNCTION TO ITSELF

Function use always happens in an application.

It may be delayed by abstraction at the point where the function

is used.

That is,

< function > < argument >

is equivalent to

λ f. (f < argument >) < function >.

The original function becomes the argument in a new application.

In our addition example we can introduce a new argument to remove (by abstraction) the recursion that's causing us trouble.

def add1 f x y =

if iszero y

then x

else f (succ x) (pred y)

PASSING A FUNCTION TO ITSELF, BAD TRY

Our current effort, which takes three arguments:

def add1 f x y =

if iszero y

then x

else f (succ x) (pred y)

We need to find an argument for add1 that has the same effect as add. We can't just pass add to add1; that gives non-terminating replacement again. We also can't pass add1 in to itself:

def add = add1 add1

yields

(λ f. λ x. λ y.

if iszero y

then x

else f (succ x) (pred y)) add1 =>

λ x. λ y.

if iszero y

then x

else add1 (succ x) (pred y)

oops....

in the last line add1 only has two arguments, not the

three it needs.
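The same mishap can be watched in Python. This is a sketch under stated assumptions: Python integers stand in for the numerals (y == 0 plays iszero, x + 1 plays succ, y - 1 plays pred), and the names bad_add, base_case and stuck are illustrative.

```python
# add1 from the slide, curried: f is the abstracted recursion point.
add1 = lambda f: lambda x: lambda y: x if y == 0 else f(x + 1)(y - 1)

# The bad try: pass add1 to itself.
bad_add = add1(add1)

# The base case is fine, because the recursion point is never reached.
base_case = bad_add(1)(0)

# At the recursion point we run add1(2)(1): now f is bound to 2 and x to 1,
# and the result is a function still waiting for its third argument.
stuck = bad_add(1)(2)
```

So stuck is not a number at all: it is add1 with one argument too few, exactly the problem noted above.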

PASSING FN TO SELF, BETTER IDEA

In definition of add1 the application

f (succ x) (pred y)

has only two arguments, and so substituting add1 for

f leaves the application without the argument

corresponding to bound variable f in add1's

definition: we need three arguments, in short, not two.

What we really want to wind up with is

add1 add1 (succ x) (pred y),

so add1 may be passed on to later recursions.

So let us define an add2, this time passing (that is, copying) the argument

f in as an argument of f itself.

def add2 f x y =

if iszero y

then x

else f f (succ x) (pred y)

where as before

def add = add2 add2

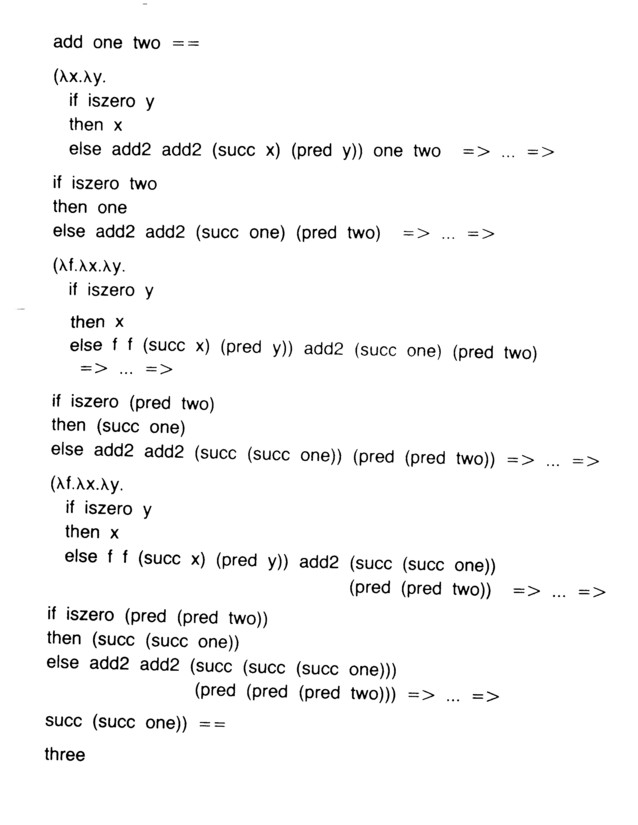

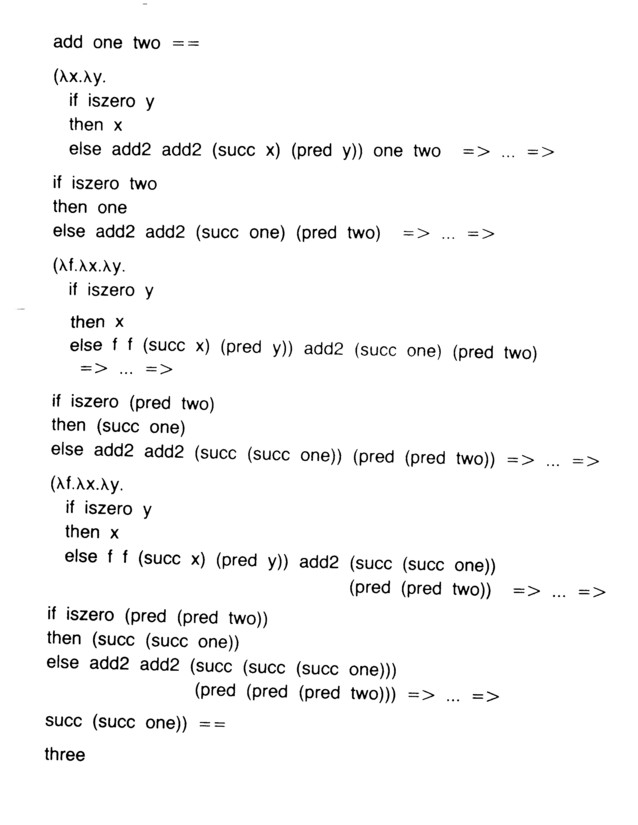

PASSING FN TO SELF, BETTER IDEA CONT.

Now the definition expands and evaluates as

(λ f.λ x.λ y.

if iszero y

then x

else f f (succ x) (pred y)) add2 =>

λ x.λ y.

if iszero y

then x

else add2 add2 (succ x) (pred y)

Trick: use two copies of add2, one as function and one as

argument, to allow the recursion to continue: when the recursion point

is reached another copy of the whole function is passed down.
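The two-copies trick runs as written in Python. A minimal sketch, again with Python integers standing in for the numerals (an assumption for illustration). Python's conditional expression only evaluates the branch it selects, which matches the normal-order treatment of conditionals assumed in these notes.

```python
# add2: at the recursion point, f is applied to itself before the real arguments.
add2 = lambda f: lambda x: lambda y: x if y == 0 else f(f)(x + 1)(y - 1)

# One copy of add2 is the function, the other is its first argument.
add = add2(add2)

three = add(1)(2)   # add2 add2 1 2 -> add2 add2 2 1 -> add2 add2 3 0 -> 3
```

Each recursive step hands a fresh copy of add2 down to the next level, so there is always a function available to recurse with.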

Recall our current attempt:

def add2 f x y =

if iszero y

then x

else f f (succ x) (pred y)

where as before

def add = add2 add2

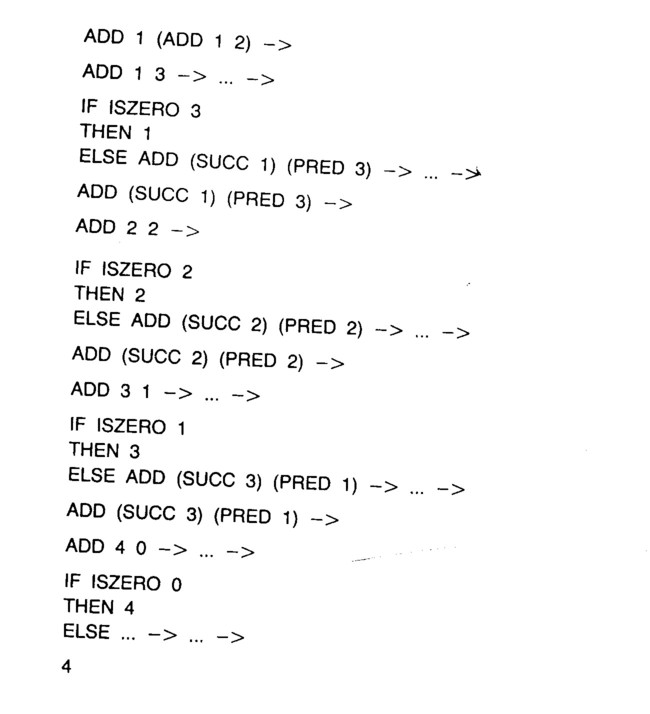

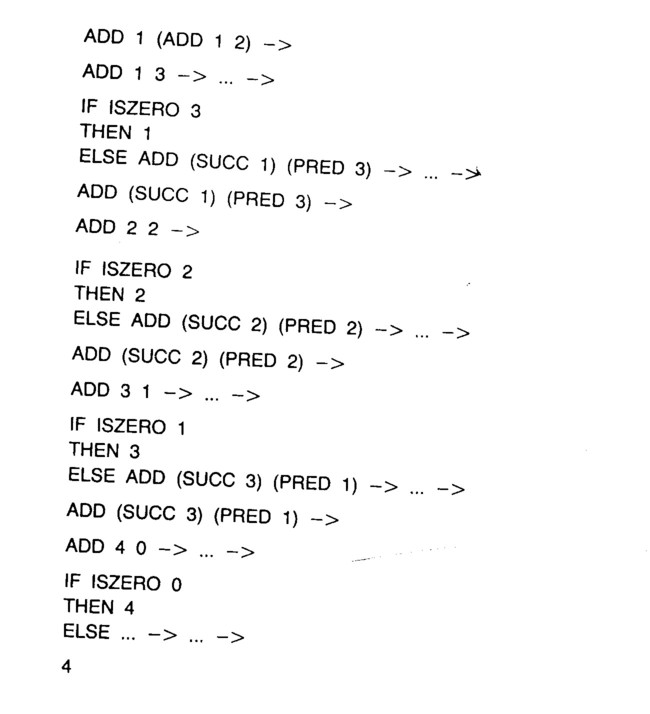

APPLICATIVE ORDER REDUCTION

From now on we occasionally evaluate expressions

partially in applicative order: that is,

evaluate arguments before passing them to functions.

Notate as

->, or

-> ... -> for a sequence.

This scheme is very natural for arithmetic: compute sub-expressions

and combine: (* (+ 2 3) (- 4 1)).

If they terminate,

applicative and normal order reductions yield the same result. BUT there

are expressions whose evaluation terminates under normal order but does

not under applicative order.

Conditional expressions are one of the problems: strict applicative order reduction of a conditional expression that uses a recursive call in a function body doesn't terminate.

The difficulty is easy to see: an if-then-else is implemented

by a cond function, which takes three arguments and

can be read as a "then, else, if" function, you recall.

In applicative evaluation order, that means we'd evaluate

the computation for the "then" and "else" cases, both,

before even looking at the "if" condition! NOT what an

if-then-else is supposed to do, right? Best case it's

crazy, worst case, with recursion, it doesn't terminate. See the

"Evaluation" chapter in the readings for more.

Thus until Chapter 8, be advised that

both applicative order indicators -> and -> ... ->

still imply normal order reduction of conditional expressions.
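The trouble with a strictly evaluated conditional shows up directly in Python, where function arguments are always evaluated first. In this sketch cond_strict and cond_lazy are hypothetical helpers taking their arguments in the "then, else, if" order described above; the lazy one receives its branches as thunks.

```python
def cond_strict(then_value, else_value, test):
    """Both branches have already been evaluated by the time we get here."""
    return then_value if test else else_value

def cond_lazy(then_thunk, else_thunk, test):
    """Branches arrive as thunks; only the selected one is run."""
    return then_thunk() if test else else_thunk()

def add_strict(x, y):
    # The recursive call is an argument, so it is evaluated even when y == 0.
    return cond_strict(x, add_strict(x + 1, y - 1), y == 0)

def add_lazy(x, y):
    return cond_lazy(lambda: x, lambda: add_lazy(x + 1, y - 1), y == 0)

def strict_diverges():
    """True if the strict version runs away (Python stops it with RecursionError)."""
    try:
        add_strict(1, 2)
    except RecursionError:
        return True
    return False
```

add_lazy behaves like a real if-then-else; add_strict computes the "else" case forever before ever looking at the condition.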

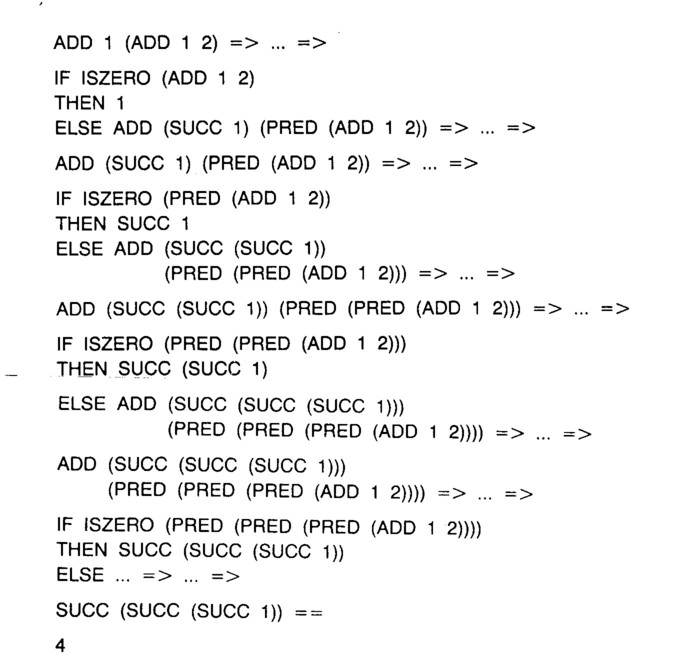

COMPARE APPLICATIVE TO NORMAL

Applicative:

Normal:

Skip down to Application and Self-Replication

THE RECURSION FUNCTION

We crafted a special-case recursive function for addition.

We want a constructor that does that job: build a recursive function from a non-recursive one, with a single abstraction at the recursion point.

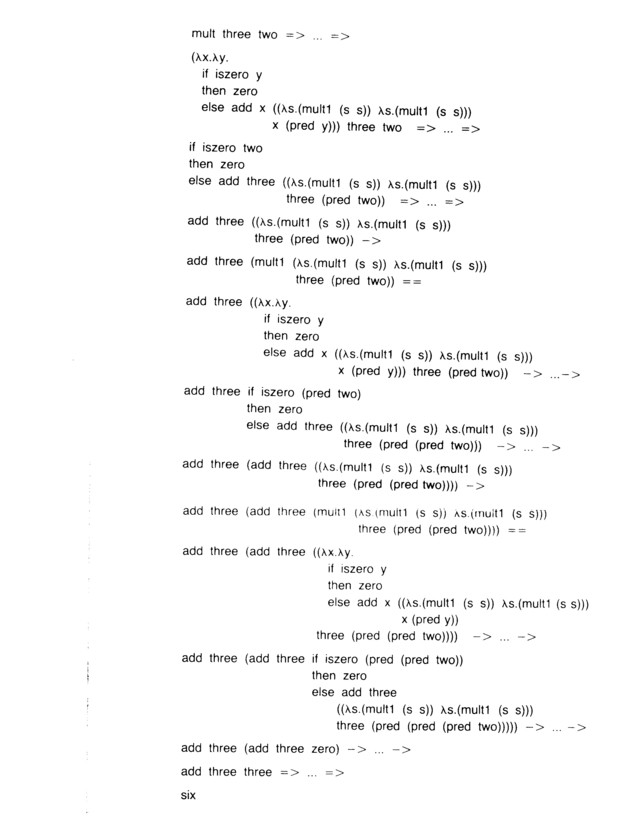

Second example, multiplication: analogous to addition --

to multiply two numbers, add the first to the product of

the first and the decremented second. If the second is zero, so is

the product.

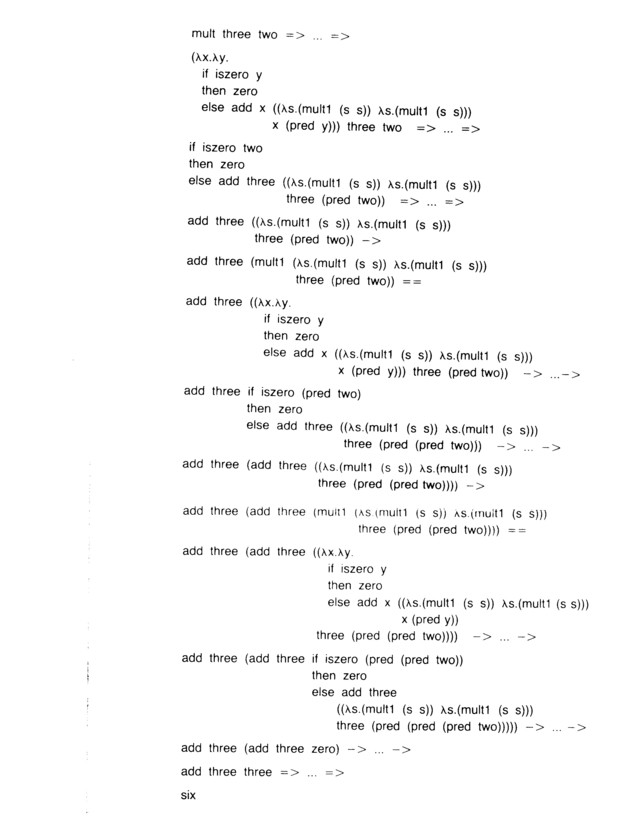

def mult x y =

if iszero y

then zero

else add x (mult x (pred y))

The last line is the infinite recursion it's our goal to fix.

What we want to happen (note applicative order reductions):

mult three two => ... =>

add three (mult three (pred two)) -> ... ->

add three ( add three

( mult three (pred (pred two)))) -> ... ->

add three (add three zero) -> ... ->

add three three => ... =>

six

SEEKING ``RECURSIVE''

Again, can remove mult's self-reference by abstraction at the recursion

point in a new function mult1:

def mult1 f x y =

if iszero y

then zero

else add x (f x (pred y))

But what is that f going to be? Not mult or mult1: we've been there already.

We want a function recursive to construct recursive functions from non-recursive versions:

def mult = recursive mult1

We know recursive must not only pass a copy of its

argument to that argument, but also ensure that self-application will

continue: the copying mechanism must also be passed on. This suggests

a recursive of the form

def recursive f = f <'f' and copy> ,

and we'll leave the argument-copying unspecified for now.

STILL SEEKING ``RECURSIVE''

Try

def recursive f = f <'f' and copy>

on mult1:

recursive mult1 ==

(λ f. (f <'f' and copy>) mult1) =>

mult1 <'mult1' and copy> ==

(λ f. λ x. λ y.

if iszero y

then zero

else add x (f x (pred y))) <'mult1' and copy> =>

λ x. λ y.

if iszero y

then zero

else add x (<'mult1' and copy> x (pred y))

In the body we have

<'mult1' and copy> x (pred y)

but we actually require:

mult1 <'mult1' and copy> x (pred y)

to assure <'mult1' and copy> is passed on again through

mult1's bound variable f to the next level of

recursion.

STILL SEEKING ``RECURSIVE''

That in turn means the copy mechanism must be such that:

<'mult1' and copy>

=> ... =>

mult1 <'mult1' and copy>

In general, from function f passed to recursive,

need:

<'f' and copy> => ... => f <'f' and copy>.

Thus the copy mechanism must be an application, and that

application must be self-replicating.

But hey! Recall

that the self-application function

λ s. (s s)

self-replicates when applied to itself!

But darn! Recall that then

the self-replication never ends.

But yay!

Self-application may be delayed through abstraction,

namely with the construction of a new function:

λ f. λ s. (f (s s)).

In other words, that delayed self-application only happens when the new function is applied! Just what we want for "recurse when you're called and shut up meanwhile".

EUREKA! RECURSIVE FOUND!

Here's our abstracted self-replication function:

λ f. λ s. (f (s s)).

Here, the self-application (s s) becomes an argument for

f. And this f might be a function with a

conditional in its body, which only leads to the evaluation of its

argument when some condition is met.

Applying to arbitrary function:

λ f. λ s. (f (s s)) < function > =>

λ s. (< function > (s s))

And applying to itself:

λ s. (< function > (s s)) λ s. (< function > (s s)) =>

< function > (λ s. (< function > (s s)) λ s. (< function > (s s)))

Sure enough, it self-replicates just once.

"Well, we knocked the bastard off." (E.H. 1953)

RECURSIVE

def recursive f = λ s. (f (s s)) λ s. (f (s s))

E.g.

def mult = recursive mult1

gives:


(λ f. (λ s. (f (s s))

λ s. (f (s s))) mult1) =>

λ s. (mult1 (s s))

λ s. (mult1 (s s)) =>

mult1 (λ s. (mult1 (s s))

λ s. (mult1 (s s))) ==

(λ f. λ x. λ y.

if iszero y

then zero

else add x (f x (pred y)))

(λ s. (mult1 (s s))

λ s. (mult1 (s s))) =>

λ x. λ y.

if iszero y

then zero

else add x (( λ s. (mult1 (s s))

λ s. (mult1 (s s))) x (pred y))

Don't need to replace other mult1's since its def. doesn't

ref. itself.
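Here is recursive mult1 as a Python sketch. One adaptation is needed and is an assumption of this example, not the notes' definition: Python is strict, so the self-application (s s) is wrapped in an extra lambda v: ... to delay it until an argument actually arrives. Without that wrapper, recursive(mult1) would loop in Python before mult1 ever got to look at y. Integers stand in for the numerals and + for add.

```python
# recursive, adapted for a strict language: (s s) becomes λv. (s s) v.
recursive = lambda f: (lambda s: f(lambda v: s(s)(v)))(
                       lambda s: f(lambda v: s(s)(v)))

# mult1 from the slide: f marks the recursion point; mult1 never names itself.
mult1 = lambda f: lambda x: lambda y: 0 if y == 0 else x + f(x)(y - 1)

mult = recursive(mult1)

six = mult(3)(2)   # 3 + (3 + 0)
```

Note that mult1 is used unchanged; all the self-replication lives in recursive.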

ANOTHER APPROACH TO Y

Consider factorial: we'd LIKE to write:

fac = λ n.

if iszero n

then 1

else n * fac(n-1)

Notice in the last line fac is a reference to the name on the left: it's treated as a GLOBAL name! Those don't exist in λ calculus, though :-{. Further, as we've seen, this attempt can't be evaluated since it expands the "same name" forever.

To avoid the global name and avoid infinite expansion of the

same name, send in the function as an argument.

fac = Y λ fac. λ n. % notice the Y!

if iszero n

then 1

else n * fac(n-1)

In this last line, fac is a variable, not a global name (we

could call it myfact or f.)

Y EXAMPLE

Recall

Y(f) = f (Y(f))

And recall our last definition of factorial, with f used for

the fac in the last line. That is:

fac(3) = (Y λ f. λ n.

if (n=0) then 1

else n*f(n-1)) (3) ==

(λ n.

if (n=0) then 1

else n*

(( Y λ f. λ n.

if (n=0) then 1

else n*f(n-1))

(n-1))) (3) =>

if (3 = 0) then 1

else 3* ((Y λ f. λ n.

if (n=0) then 1

else n*(f(n-1))) (3-1))

== % (since 3 ≠ 0)

3* ((Y λ f. λ n.

if (n=0) then 1

else n*(f(n-1))) (2))

Which is obviously 3 * fac(2).
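The unfolding above can be checked in Python. As a labeled assumption, Y_strict below is the variant of Y with the self-application wrapped in lambda v: ... so that it works in a strict language; fac_step and one_unfolding are illustrative names.

```python
# Y for a strict language: the inner (s s) waits for an argument v.
Y_strict = lambda f: (lambda s: f(lambda v: s(s)(v)))(
                      lambda s: f(lambda v: s(s)(v)))

# The factorial body with the recursion point abstracted as the variable f.
fac_step = lambda f: lambda n: 1 if n == 0 else n * f(n - 1)

fac = Y_strict(fac_step)

# Peel off one layer by hand: f (Y f) should behave exactly like Y f.
one_unfolding = fac_step(fac)
```

Here fac(3) really is 3 * fac(2), and one_unfolding agrees with fac on every argument we try, which is the equation Y(f) = f (Y(f)) seen from the outside.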

ABSTRACTION and SELF-REPLICATION

A rather rabbit-from-hat approach to Y. This is not a self-standing

treatment: see the link below for that. This is a preview or abstract.

OUR GOAL: To create a function rec

that itself creates a "recursive

ready function" from its (function) argument, as in:

rec < name > = < expression > .

Recall for recursion we want to write something like

def add x y =

if iszero y

then x

else add (succ x) (pred y)

This definition itself does not terminate! Expands through space...

Abstraction (wrapping in a function) prevents the evaluation of a function (like add):

(f [args]) % f gets evaluated

λ g. (g [args]) % stable. Call with f

We'll try to fix our problem with add by using this 'abstraction

trick'.

ABSTRACTION.... BUT!!

The abstraction trick creates

a need for a self-replication function.

Recall that in our add1

example, where we want to define add as (add1 add1),

our problem is that single f in

def add1 f x y =

if iszero y

then x

else f (succ x) (pred y)

which means f won't work if it expects three arguments. We know that

the following version works, but we'd need to customize (note the two

f's) each function

we want to make recursive.

def add2 f x y =

if iszero y

then x

else f f (succ x) (pred y)

with

def add = add2 add2

So to save add1 we need some way to achieve the effect of replicating

f without getting into trouble. It would be ideal if

f

would replicate

itself just once and then only when it is invoked, thus creating its

own first argument.

We think

of the self-application function

selfapply:

def selfapply = λ s. (s s)

However, selfapply on its own does not give us the controlled self-replication we want:

(selfapply selfapply)

runs forever, not expanding in space but calling and copying the

application itself forever through time.

Abstraction to the rescue again!

def recursive f =

(λ s. (f (s s)) λ s. (f (s s)))

keeps the self-application from running unless called, and when it is

it runs just once.

So e.g. we'd write:

def add = recursive add1

recursive AT WORK

Call recursive "Y" for short.

def Y = λ f.

(λ s. (f (s s))

λ s. (f (s s)))

(Y < fun >) == (λ f.

(λ s. (f (s s))

λ s. (f (s s))) < fun >) =>

(λ s. ( < fun > ( s s ))

λ s. (< fun > (s s))) =>

< fun > λ s. (< fun > (s s))

λ s. (< fun > (s s)) =

< fun > (Y < fun >)

Ta-da!

Here is a Y tutorial that may be useful: it justifies Y in this top-down style, which notices the cooperation between abstraction (making a function from an expression to stop its being evaluated) and self-replication, which we've seen we need for recursion.

Y, THE PARADOXICAL COMBINATOR

Our recursive is also known as a paradoxical combinator, a fixed-point finder, or Y for short. It was found by Haskell Curry.

A fixed point of a function stays the same when the function

is applied to it:

if f(x) = x^n, n > 0, then x = 0 and x = 1 are fixed points.

If we talk of applying functions to functions, then we want f(p) = p, where p = g(f), or equivalently, g(f) = f(g(f)).

As it happens, there are infinitely many such

"fixed point operators" and I think hunting them is a reasonable

activity for recursive function theorists (but I don't know).

Thus our Y (and often any fixed point combinator is called

Y)

is mathematically defined by an elegant and

easy-to-remember formula that possibly has been obscured by

our work so far:

Y(f) = f (Y(f))

This is recursive, only in math. Also, you can see why

applying Y several times leads to nontrivial recursion:

..... f(f(f...f(Y(f))))

Compare:

recursive f =

λ s. (f (s s)) λ s. (f (s s)) =>

f (λ s. (f (s s)) λ s. (f (s s))) ==

f (recursive f)
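The defining equation Y(f) = f (Y(f)) can also be used directly as a program, provided the inner Y(f) is delayed. The Python sketch below does this with an ordinary named function fix. That is an assumption made for illustration: it leans on Python's built-in named recursion, which is exactly the facility the λ calculus lacks, so it illustrates the equation rather than replacing the combinator.

```python
def fix(f):
    """Y(f) = f(Y(f)), with the inner Y(f) delayed until an argument v arrives."""
    return lambda v: f(fix(f))(v)

# A non-recursive factorial body, recursion point abstracted as f.
fac_step = lambda f: lambda n: 1 if n == 0 else n * f(n - 1)

fac = fix(fac_step)

# f(f(f(Y(f)))): unrolling by hand changes nothing, since Y(f) is a fixed point.
unrolled = fac_step(fac_step(fac_step(fix(fac_step))))
```

However many layers of fac_step are wrapped around fix(fac_step), the result still computes factorial.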

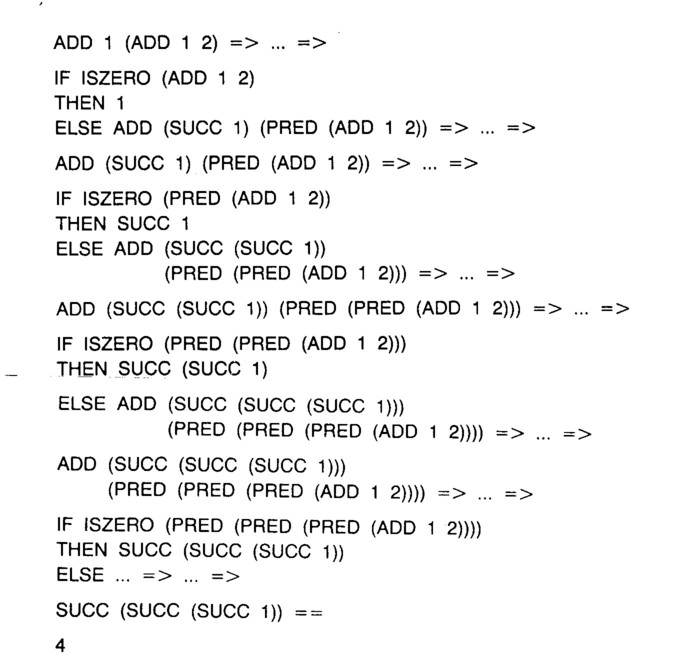

Below, we see recursive mult1, or Y(mult1), in line 11. It generates the

mult1 Y(mult1) in line 13, which is what we pay Y

to do. You can follow these patterns

through

the evaluation:

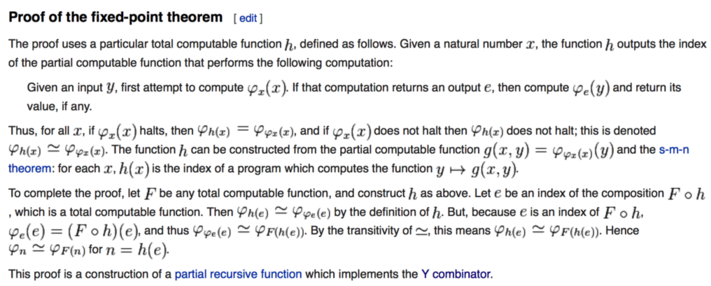

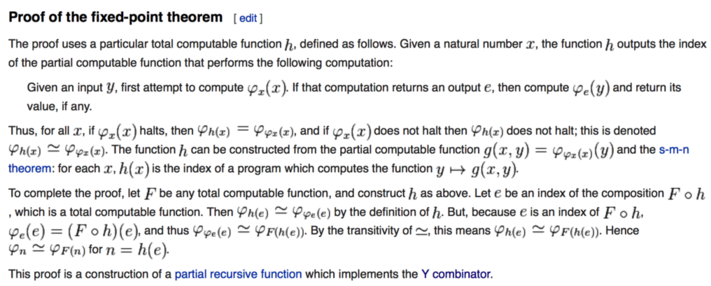

Y and the Recursion Theorem

The recursion theorem is a basic result by Kleene (of the Kleene *). You

may run into it in CS Theory courses.

Check out Wikipedia, which relates the recursion (and fixed-point) theorems to Y. There are also some lecture notes on the recursion theorem, courtesy of J. Seiferas.

CLEANING UP

We can now simplify notation and sugar away the auxiliary function. Use new

form:

rec < name > = < expression >

This rec name indicates that the occurrence of the

name in the definition should be replaced using abstraction, and then

that the paradoxical combinator should be applied to the whole of the

defining expression. E.g.

rec add x y =

if iszero y

then x

else add (succ x) (pred y)

for

def add1 f x y =

if iszero y

then x

else f (succ x) (pred y)

and

def add = recursive add1
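The rec sugar can be mimicked in Python with a decorator. This is a hedged sketch: rec and recursive below are illustrative helpers, recursive is the strict-language variant of the combinator (self-application delayed behind a lambda), and the parameter called self plays the role of the abstracted name.

```python
def recursive(f):
    """Paradoxical combinator, adapted for strict evaluation and many arguments."""
    return (lambda s: f(lambda *a: s(s)(*a)))(lambda s: f(lambda *a: s(s)(*a)))

def rec(body):
    """Sugar: abstract the recursion point as the first parameter, then apply recursive."""
    return recursive(lambda self: lambda *args: body(self, *args))

@rec
def add(self, x, y):
    # 'self' stands where the slide's rec definition writes add.
    return x if y == 0 else self(x + 1, y - 1)
```

After decoration, add(1, 2) is called like any ordinary two-argument function; the extra parameter has been sugared away.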

ARITHMETIC OPERATIONS

Raising to a Power: to exponentiate (compute x^y) we multiply x by ``x to the power of the decremented y'', and if y is zero the power is one.

rec power x y =

if iszero y

then one

else mult x (power x (pred y))

EXPONENTIATION EXAMPLE

E.g.

power two three => .... =>

mult two

(power two (pred three)) -> ... ->

mult two

(mult two

(power two (pred (pred three)))) -> ... ->

mult two

(mult two

(mult two

(power two (pred (pred (pred three))))))

-> ... ->

mult two

(mult two

(mult two one)) -> ... ->

mult two

(mult two two) -> ... ->

mult two four => ... =>

eight
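The tower of definitions (power on mult on add on succ) can be run in Python. Assumptions of this sketch: Python integers stand in for the numerals, Python's own named recursion stands in for rec, and pred of zero is taken to be zero.

```python
zero, one = 0, 1
succ = lambda n: n + 1
pred = lambda n: n - 1 if n > 0 else 0   # assumed: pred zero = zero
iszero = lambda n: n == 0

def add(x, y):
    return x if iszero(y) else add(succ(x), pred(y))

def mult(x, y):
    return zero if iszero(y) else add(x, mult(x, pred(y)))

def power(x, y):
    return one if iszero(y) else mult(x, power(x, pred(y)))
```

power(2, 3) unwinds just like the trace above: mult two (mult two (mult two one)).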

SUBTRACTION

The difference of two numbers is the difference after decrementing

both, with difference of a number and zero being the number. So...

rec sub x y =

if iszero y

then x

else sub (pred x) (pred y)

E.g.

sub four two => .... =>

sub (pred four) (pred two) => .... =>

sub (pred (pred four)) (pred (pred two))

=> .... =>

(pred (pred four)) => .... =>

two

An "undocumented feature" (i.e. bug): if y > x it returns zero.
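Both the normal case and the "undocumented feature" are easy to reproduce in Python. The sketch assumes integers for numerals and a pred that returns zero at zero, which is what makes the y > x case bottom out at zero.

```python
pred = lambda n: n - 1 if n > 0 else 0   # assumed: pred zero = zero

def sub(x, y):
    # Decrement both until y reaches zero; x can never go below zero.
    return x if y == 0 else sub(pred(x), pred(y))

normal_case = sub(4, 2)
y_bigger = sub(2, 4)      # x hits zero first and stays there
```

This kind of subtraction, which stops at zero, is what makes the comparison functions later on work.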

DIVISION

Problem: Divide by Zero... so far we can't deal with undefined values. Hack: let division by zero be zero and remember to check for a zero divisor.

For a non-zero divisor, count how often it can be subtracted from the dividend until the dividend is smaller than the divisor.

rec div1 x y =

if greater y x

then zero

else succ (div1 (sub x y) y)

def div x y =

if iszero y

then zero

else div1 x y

ARITHMETIC COMPARISON

Start with Absolute Difference:

def abs-diff x y =

add (sub x y) (sub y x)

def equal x y =

iszero(abs-diff x y)

...

def greater x y =

not(iszero (sub x y))

def greater-or-equal x y =

iszero (sub y x)
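The comparison functions, and the division that depends on greater, can be tried out together in Python. Assumptions of this sketch: integers for numerals, + for add, pred zero = zero, Python's named recursion in place of rec, and underscores in place of the hyphens in abs-diff and greater-or-equal.

```python
pred = lambda n: n - 1 if n > 0 else 0   # assumed: pred zero = zero

def sub(x, y):
    return x if y == 0 else sub(pred(x), pred(y))

def abs_diff(x, y):
    # One of the two subtractions is always zero.
    return sub(x, y) + sub(y, x)

def equal(x, y):
    return abs_diff(x, y) == 0

def greater(x, y):
    return not (sub(x, y) == 0)

def greater_or_equal(x, y):
    return sub(y, x) == 0

def div1(x, y):
    # Count how often y can be subtracted before the dividend drops below it.
    return 0 if greater(y, x) else 1 + div1(sub(x, y), y)

def div(x, y):
    # The hack from the DIVISION slide: division by zero yields zero.
    return 0 if y == 0 else div1(x, y)
```

For example, div(7, 2) subtracts 2 three times (7, 5, 3) and stops at 1, since greater(2, 1) holds.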

SUMMARY

- Recursion for repetition in FLs. But recursion through

function definitions leads to non-terminating substitution sequences.

- Recursion may be enabled by abstracting at the place where recursion takes place in a function and then passing the function to itself.

- Evaluation may be simplified with applicative-order β

reduction.

- Recursion may be generalized through a recursion function that

substitutes a function at its own recursion points.

- A new notation simplifies definition of recursive functions.

- Recursion can be used to build standard arithmetic operations.