Greedy Algorithms

Weiss Ch. 10.1.

Greedy algorithms "do the best thing" on the basis of local information, not search. E.g. gradient ascent (mtn climbing in fog).

Sometimes they work and sometimes not. They always work (find the "global maximum (or minimum)") on unimodal functions. Otherwise they can get stuck in "local maxima (minima)" or "local optima".

Hence lots of books, papers, careers in search: genetic algorithms, simulated annealing, etc. etc. (AI).
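To make the "stuck in the fog" point concrete, here is a minimal sketch of greedy hill climbing over the integers. The function name `hill_climb` and both landscapes are made up for illustration: one is unimodal, the other has a small foothill next to the real peak.

```python
def hill_climb(f, x, lo, hi):
    """Greedy integer hill climbing: step to the better neighbour until none improves."""
    while True:
        neighbours = [n for n in (x - 1, x + 1) if lo <= n <= hi]
        best = max(neighbours, key=f)
        if f(best) <= f(x):
            return x  # no neighbour is higher: a local (maybe global) maximum
        x = best


def unimodal(x):
    # Hypothetical landscape with a single peak at x = 7.
    return -(x - 7) ** 2


def bimodal(x):
    # Hypothetical landscape: foothill at x = 2, true summit at x = 8.
    heights = [0, 1, 3, 1, 0, 2, 4, 6, 9, 5, 0]
    return heights[x]


print(hill_climb(unimodal, 0, 0, 10))  # 7: the global maximum
print(hill_climb(bimodal, 0, 0, 10))   # 2: stuck on the foothill
print(hill_climb(bimodal, 5, 0, 10))   # 8: a luckier start finds the summit
```

Same greedy rule each time; only the landscape and the starting point decide whether it ends up at the global optimum.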

Scheduling Drive-by

How to schedule N jobs (no preemptive dynamic rescheduling) of various lengths so as to optimize the "happiness function" smallest-average-time-to-completion?

Pretty obvious: shortest job first etc. (else just switch

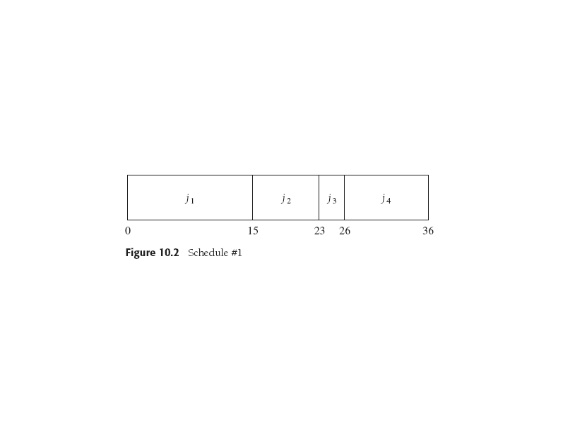

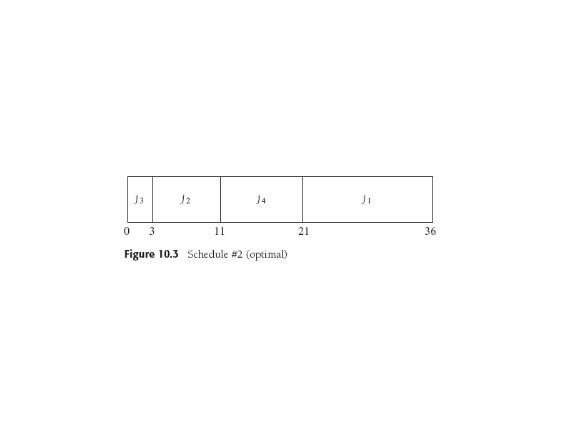

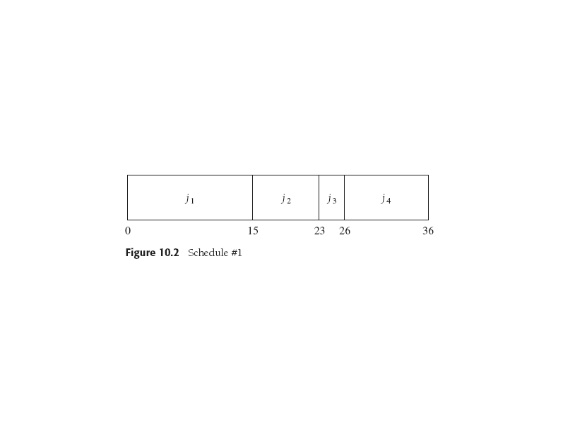

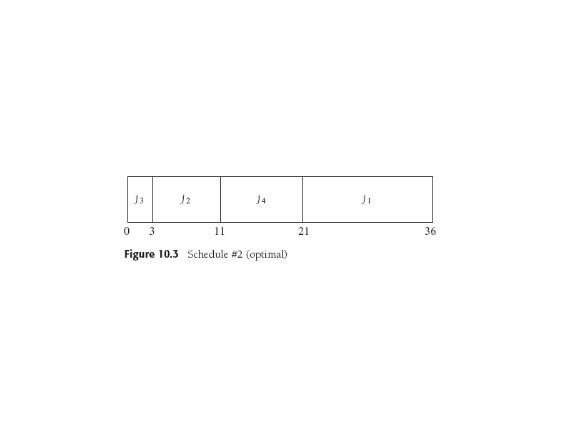

order!). Here's a pessimal-looking order followed by the optimal one:

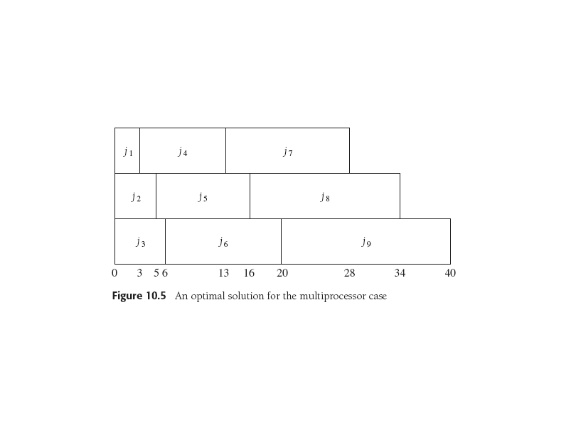

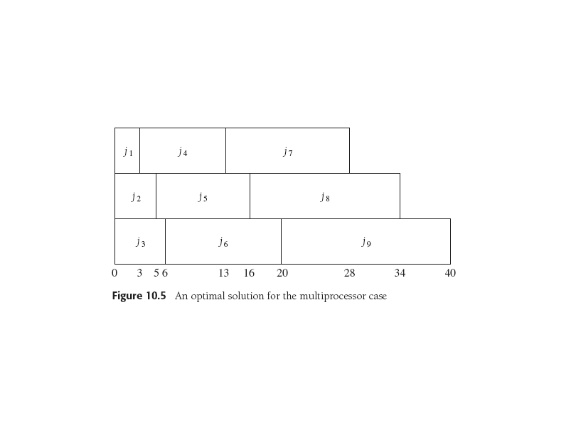
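A quick numeric check of shortest-job-first, as a sketch. The job lengths are hypothetical and `mean_completion_time` is a name invented here; each job's completion time is the running sum of the lengths up to and including it.

```python
from itertools import accumulate


def mean_completion_time(lengths):
    """Average finishing time when jobs run back to back in the given order."""
    finish_times = list(accumulate(lengths))
    return sum(finish_times) / len(finish_times)


jobs = [15, 8, 3, 10]  # hypothetical job lengths, in arrival order

print(mean_completion_time(jobs))          # 25.0
print(mean_completion_time(sorted(jobs)))  # 17.75 with shortest job first
```

The first job's length is counted in every finishing time, the second's in all but one, and so on, which is why the short jobs belong up front.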

Multiprocessor version: round robin version of that (sort shortest first, then deal the jobs out to the processors in turn).

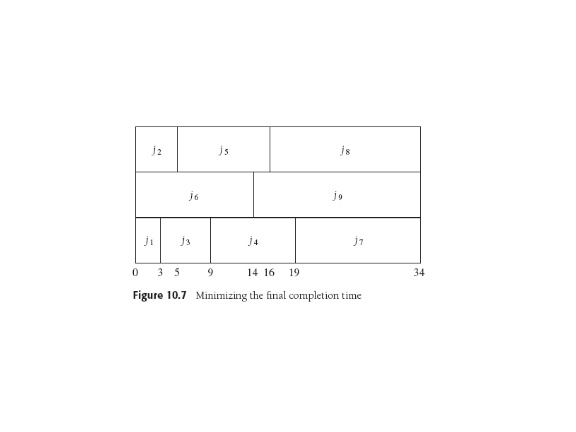

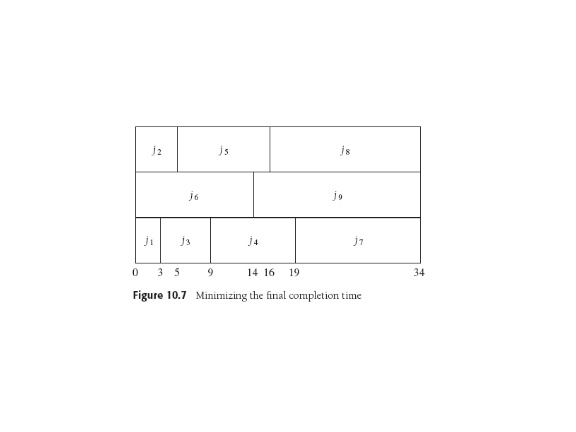
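The round-robin idea can be sketched in a few lines. The function names and the nine job lengths are assumptions for illustration: jobs are sorted shortest first and dealt to the processors like cards.

```python
def round_robin_schedule(lengths, processors):
    """Sort shortest first, then deal jobs to the processors in rotation."""
    queues = [[] for _ in range(processors)]
    for i, length in enumerate(sorted(lengths)):
        queues[i % processors].append(length)
    return queues


def total_completion_time(queues):
    """Sum of every job's finishing time across all processors."""
    total = 0
    for queue in queues:
        clock = 0
        for length in queue:
            clock += length
            total += clock
    return total


jobs = [3, 5, 6, 10, 11, 14, 15, 18, 20]  # hypothetical job lengths
queues = round_robin_schedule(jobs, 3)
print(queues)                                     # [[3, 10, 15], [5, 11, 18], [6, 14, 20]]
print(total_completion_time(queues) / len(jobs))  # mean completion time
print(max(sum(q) for q in queues))                # time at which the last processor finishes
```

The last line prints the final completion time, which this schedule makes no attempt to minimize.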

Minimizing final completion time on N processors (e.g. all quittin' at once is good!) is another story. It's reducible (equivalent given polynomial work) to knapsack and bin-packing, so it is NP-complete. Sorry.

Approximate Bin Packing Fly-by

Moral: greedy methods can often get you at least up into the foothills, close to the global optimum, which cuts down the time that more sophisticated methods then need to finish the optimization.

Bin Packing: N items, each of size ∈ (0,1]. Pack them into the fewest possible bins, each with unit capacity. On-line -- deal with items as they appear. Off-line -- get all items first, think, then deal.

In any case, bin-packing is NP-complete, hence the "Approximate" in the section title.

Weiss does cute proofs and finds properties of several on-line algorithms.

First, can prove more or less easily (p. 440) that some inputs force any on-line algorithm to use at least 4/3 of the optimal number of bins. Some methods:

- Next fit: if the next item fits in the latest bin, put it there, else start a new bin.

- First fit: scan bins in order, put new item in first bin big enough.

- Best fit: item goes in tightest-fitting existing bin (new bin if none fits).
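The three on-line rules can be sketched as follows. To dodge floating-point rounding, this sketch assumes item sizes are integers in tenths of a bin and takes the capacity as a parameter. The function names and the item list are illustrative. Each function returns the list of bin loads.

```python
def next_fit(items, capacity):
    """Only the most recently opened bin is ever considered."""
    loads = []
    for x in items:
        if loads and loads[-1] + x <= capacity:
            loads[-1] += x
        else:
            loads.append(x)
    return loads


def first_fit(items, capacity):
    """Scan bins in order of creation; use the first one with room."""
    loads = []
    for x in items:
        for i, load in enumerate(loads):
            if load + x <= capacity:
                loads[i] += x
                break
        else:  # no existing bin had room
            loads.append(x)
    return loads


def best_fit(items, capacity):
    """Use the fullest bin that still has room (ties go to the earliest bin)."""
    loads = []
    for x in items:
        candidates = [i for i, load in enumerate(loads) if load + x <= capacity]
        if candidates:
            tightest = max(candidates, key=lambda i: loads[i])
            loads[tightest] += x
        else:
            loads.append(x)
    return loads


items = [2, 5, 4, 7, 1, 3, 8]  # hypothetical sizes in tenths of a bin
print(len(next_fit(items, 10)))   # 5 bins
print(len(first_fit(items, 10)))  # 4 bins
print(len(best_fit(items, 10)))   # 4 bins
```

These items total exactly 30 tenths, so three bins is the floor; none of the on-line rules reaches it on this input.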

Off-line algorithms. Pick large items first, giving Best fit decreasing, First fit decreasing. But there are annoying sets of item sizes that defeat these strategies (or any like them). Weiss shows some of these nasty answers to proposed algorithms, along with some fairly hairy-looking proofs of how close to the optimal solution the algorithms can get.
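First fit decreasing is just a sort followed by first fit, as in this sketch. As an assumption of the example, sizes are integers in tenths of a bin so the arithmetic is exact; the function name and the item list are illustrative.

```python
def first_fit_decreasing(items, capacity):
    """Off-line heuristic: sort largest first, then place each item by first fit."""
    loads = []
    for x in sorted(items, reverse=True):
        for i, load in enumerate(loads):
            if load + x <= capacity:
                loads[i] += x
                break
        else:  # no existing bin had room
            loads.append(x)
    return loads


items = [2, 5, 4, 7, 1, 3, 8]  # hypothetical sizes in tenths of a bin
print(first_fit_decreasing(items, 10))  # [10, 10, 10]
```

On this particular input the heuristic fills three bins exactly, which is optimal here; that is luck of the example, not a guarantee.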

Huffman Coding

Morse Code: why does . mean E and - mean T while C is -.-. and

Q is --.-?

Answer: Shannon coding theorem, information theory, coding theory: texts, papers, courses, majors, scholarship, IMMENSE technological progress and coolness.

DHL? WTF? I remember 300-baud (NOT a misprint) modems! Don't forget

turbo-codes.

Of course: send common symbols quickly (si, no, eat, tax, die) and less common ones not-so-quickly (Hippopotomonstrosesquipedaliophobia).

Now if you use fewer bits to represent common strings you could say you'd compressed a file of text. Schemes like zip, gzip, etc. are on-line algorithms that are very clever and work differently. Take WinZip, for instance: it compresses DOC(X) and XLS(X) by about 88%, .JPEG by 16%, and .MP3 by 1%.

Huffman coding seems to promise 25 -- 60%.

Codes

Represent letters (say) by bit strings, no spaces.

Make sure the string is unambiguous and can be *decoded* on-line. Morse code's not; it needs spaces: . . is ee, .. is i. Note we're not saying the text can be *encoded* on-line. Huffman coding gathers a text's statistics, THEN creates a code tailored to it.

For on-line decoding, can have all chars use same number of bits, as

in ASCII.

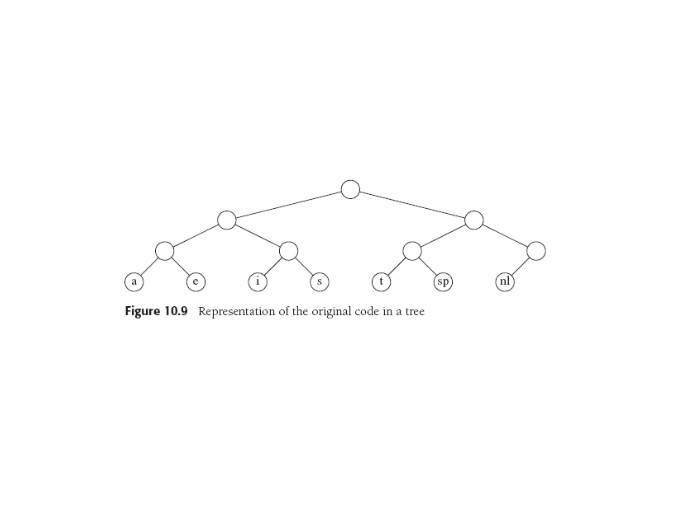

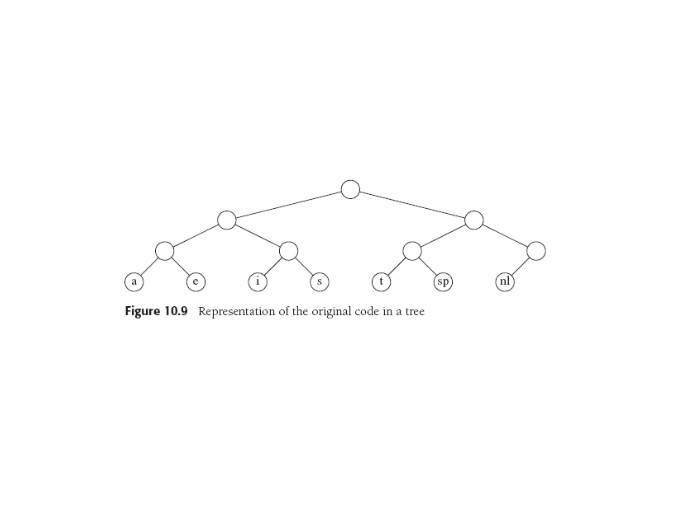

It's useful to put our codes into trees, e.g.

The above tree has edges labeled 0 (left) and 1 (right), and

data only at the leaves. This data structure is a trie and

is used e.g. in compilers for storing variables under their names

(branching factor > 26).

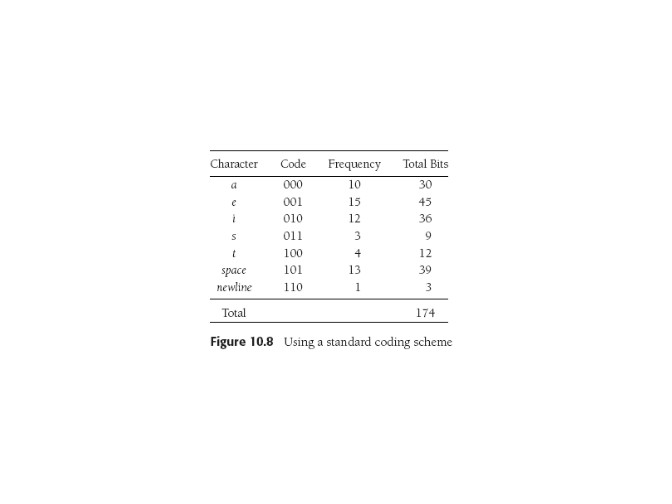

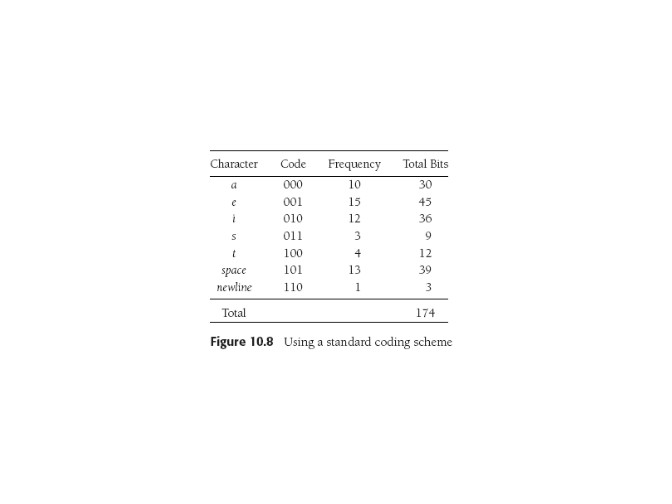

Let's say the cost of a character in this particular text is its frequency f times its depth d (this is the number of edge-follows we'll need to decode it every time it appears). Then the total cost of the text under that code is $\sum_i f_i d_i$.
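The cost formula is easy to evaluate in code, since a character's depth in the tree equals the length of its code word. In this sketch the frequency table is an assumption for illustration (seven symbols, with "sp" and "nl" standing for space and newline), and `total_cost` is a name invented here.

```python
# Hypothetical frequency table, assumed for illustration.
freq = {"a": 10, "e": 15, "i": 12, "s": 3, "t": 4, "sp": 13, "nl": 1}


def total_cost(freq, code):
    """Sum over characters of frequency times depth (= code-word length)."""
    return sum(freq[ch] * len(code[ch]) for ch in freq)


# Fixed-length code: every character gets 3 bits (000, 001, ..., 110).
fixed = {ch: format(k, "03b") for k, ch in enumerate(freq)}
print(total_cost(freq, fixed))      # 174 = 58 characters * 3 bits

# Shortening one code word by a bit (110 -> 11 for "nl") lowers the cost.
shortened = dict(fixed, nl="11")
print(total_cost(freq, shortened))  # 173
```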

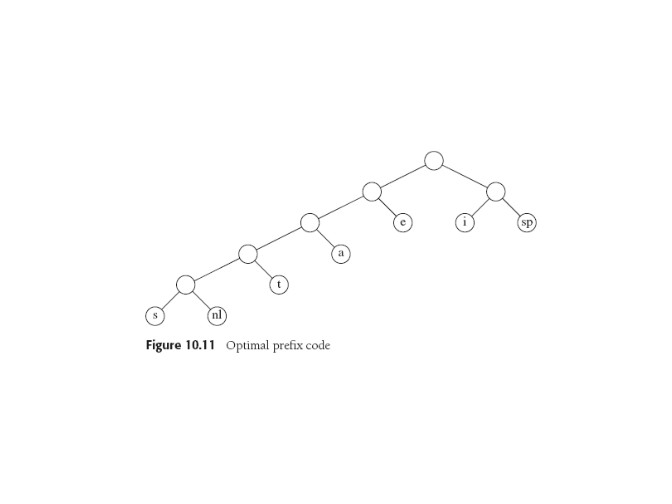

Notice by inspection that the "nl" character can just be moved up to the

node above it: it becomes a 2-bit character and the text becomes

cheaper to code. But does that still work?

Yes! With chars only at the leaves, it's a full tree: all nodes are leaves or have two children.

No code (edge labels on the path from the root) is a prefix of any other, so it's always right to take the first leaf you find: no more below! The resulting code is a prefix code.
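Here is a minimal sketch of why the prefix property makes on-line decoding work: read bits one at a time and emit a character the moment the bits read so far match a code word. The three-letter code and the function names are hypothetical.

```python
def is_prefix_free(code):
    """True if no code word is a proper prefix of another."""
    words = list(code.values())
    return not any(
        v != w and w.startswith(v) for v in words for w in words
    )


def decode(bits, code):
    """Decode a bit string left to right, emitting at the first match."""
    inverse = {word: ch for ch, word in code.items()}
    out, current = [], ""
    for bit in bits:
        current += bit
        if current in inverse:  # reached a leaf: nothing can lie below it
            out.append(inverse[current])
            current = ""
    if current:
        raise ValueError("trailing bits do not form a code word")
    return "".join(out)


code = {"a": "0", "b": "10", "c": "11"}  # hypothetical prefix code
bits = "".join(code[ch] for ch in "abca")
print(bits)                # 010110
print(decode(bits, code))  # abca
```

With a non-prefix code such as {"e": "0", "i": "00"} the same loop would always emit "e" and never "i", which is the Morse problem without spaces.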

Huffman

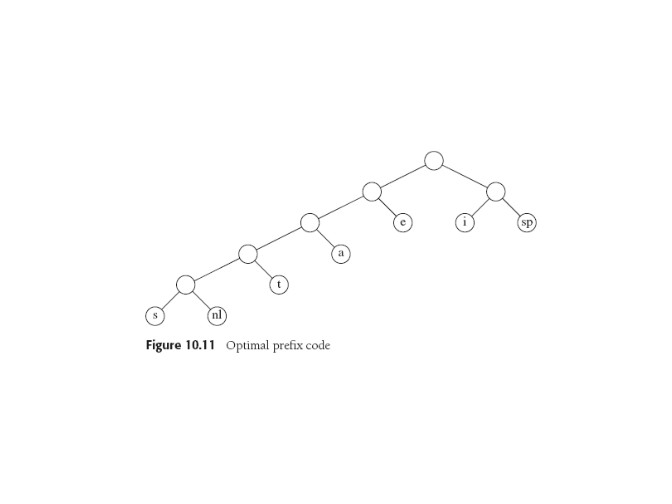

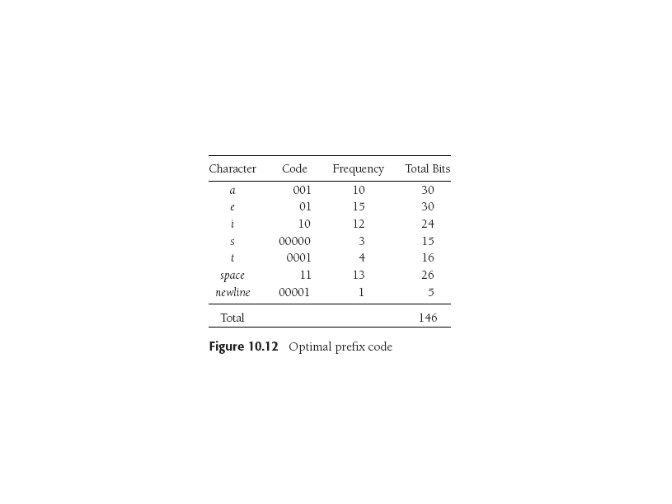

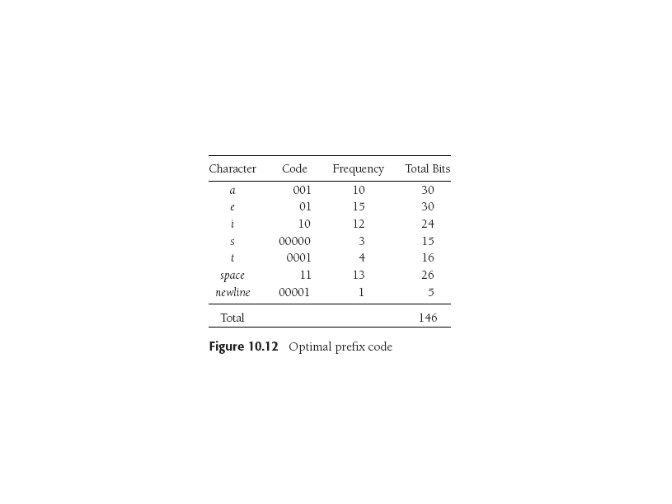

We want a full binary tree of minimum total cost where all characters

are in the leaves. For this example that's

as explicated here:

Code won't be unique (for one thing, swap any children in the tree!).

Huffman's Algorithm:

For C characters: maintain a forest of trees. A tree's weight is the sum of the frequencies of its leaves.

Repeat C-1 times: select two trees R and S of smallest weight (break ties however), make new tree T with subtrees R and S. (dass it...simple, eh?)

That process transforms a forest of C single-node trees into a single Huffman-code tree.
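The merge loop can be sketched with Python's `heapq` as the forest. This is one possible rendering, not the textbook's code. Leaves are strings, internal nodes are `(left, right)` tuples, and a running counter breaks ties so that trees themselves are never compared. The frequency table is assumed for illustration, and the sketch assumes at least one character.

```python
import heapq
from itertools import count


def huffman_tree(freq):
    """Merge the two lightest trees until one remains."""
    tiebreak = count()
    heap = [(w, next(tiebreak), ch) for ch, w in freq.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        w1, _, t1 = heapq.heappop(heap)
        w2, _, t2 = heapq.heappop(heap)
        heapq.heappush(heap, (w1 + w2, next(tiebreak), (t1, t2)))
    return heap[0][2]


def code_table(tree, prefix=""):
    """Walk the tree: 0 for a left edge, 1 for a right edge."""
    if isinstance(tree, str):  # leaf
        return {tree: prefix or "0"}
    left, right = tree
    table = code_table(left, prefix + "0")
    table.update(code_table(right, prefix + "1"))
    return table


# Hypothetical frequencies, assumed for illustration.
freq = {"a": 10, "e": 15, "i": 12, "s": 3, "t": 4, "sp": 13, "nl": 1}
code = code_table(huffman_tree(freq))
print(code)
print(sum(freq[ch] * len(code[ch]) for ch in freq))  # 146 bits, vs. 174 at 3 bits each
```

The exact bit patterns depend on how ties are broken and on which subtree goes left, but the total cost does not.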

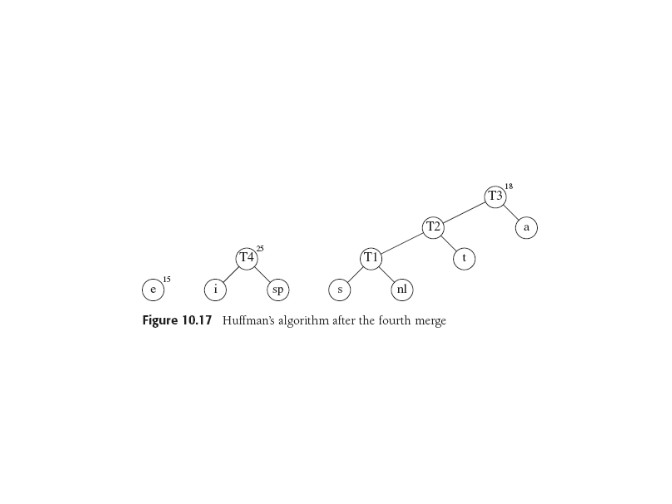

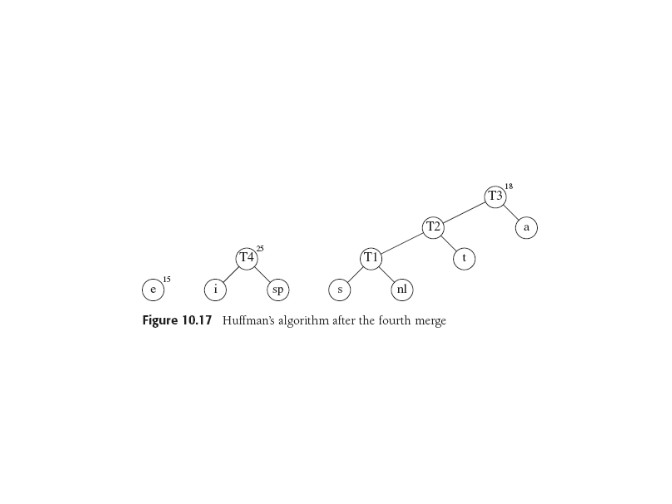

Starting with our 7 character nodes a, e, i, ...

with frequencies 10, 15, 12,..., we get this after four merges:

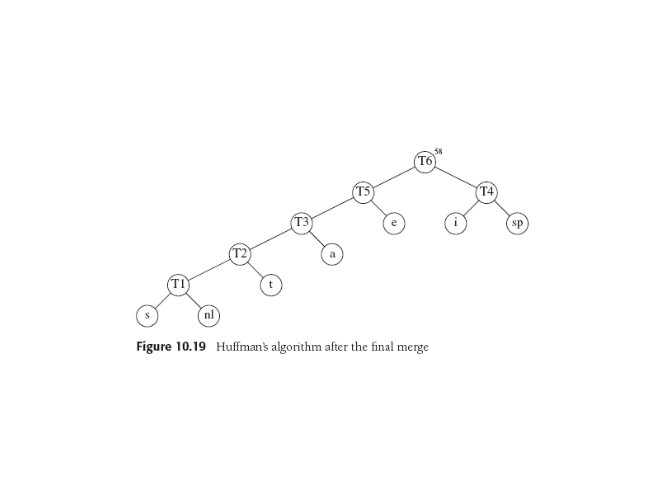

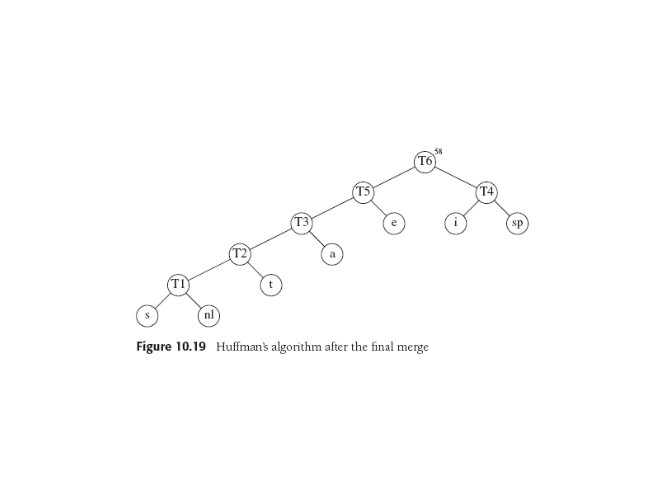

and this at the end:

Prove It!

We (and Weiss) won't prove the Huffman code is optimal. I found Weiss's and a couple of other proofs a little puzzling, seeming to start off assuming optimality. But Weiss's steps are quite like several proofs out there, and the use of induction seems very natural.

This proof uses no induction, but detailed reasoning about weights: it seems a little Euro to me. Its plan:

- Show that this problem satisfies the greedy choice property, that is, if a greedy choice is made by Huffman's algorithm, an optimal solution remains possible.

- Show that this problem has an optimal substructure property, that is, an optimal solution to the coding problem contains optimal solutions to subproblems.

- Conclude correctness of Huffman's algorithm using the first two steps.

Proving optimality of Huffman codes seems to have attracted attempts at automation.

Analysis

Obvious idea is to maintain the trees in a priority queue by weight, so get O(C log C): a build_heap, 2C-2 deleteMins, C-2 inserts. The p-queue never has > C entries.
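Spelling out the sum behind that bound, assuming a binary heap (linear-time build_heap, logarithmic deleteMin and insert, on a queue of at most C entries):

```latex
\[
\underbrace{O(C)}_{\text{build\_heap}}
\;+\; \underbrace{(2C-2)\,O(\log C)}_{\text{deleteMins}}
\;+\; \underbrace{(C-2)\,O(\log C)}_{\text{inserts}}
\;=\; O(C \log C)
\]
```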

Note that it's greedy only after the first pass thru text to get the

frequencies. See exercises.

Last update: 7.24.13