Dynamic Programming

Weiss Ch. 10.3.

DP is a common competitor to recursive divide-and-conquer (D&C). DP's job is to implement recursive problem statements more efficiently by keeping past results and referring to them, rather than having a function call itself to recompute them.

Often DP is used for optimization: say, controlling a missile along a desired trajectory, simulating protein folding, or computing the best match between sequences such as genes, pixel values, or phonemes.

The trick is to keep segments of the solution that are already proven best and use them to build larger segments.

A simple, convincing DP example is computing Fibonacci numbers. If the recursive definition F(N) = F(N-1) + F(N-2) is implemented literally, with 2 base cases and 2 recursive calls, it is a computational disaster: the running time grows as fast as the Fibonacci sequence itself, that is, exponentially.

The problem is that in computing F(N) we can see just from the definition that F(N-2) must be computed twice (once directly, and once inside the call for F(N-1)), and that implies an exponential number of repeated computations of F(N-k) as k grows.
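To see the repeated work concretely, here is a minimal Python sketch of the literal recursion that tallies how often each F(k) is evaluated. The base cases F(0) = 0 and F(1) = 1 are an assumption (the notes only say there are two), and `fib_naive` is a hypothetical name.

```python
from collections import Counter


def fib_naive(n: int, calls: Counter) -> int:
    """Literal two-call recursion; calls[k] counts evaluations of F(k)."""
    calls[n] += 1
    if n < 2:  # assumed base cases: F(0) = 0, F(1) = 1
        return n
    return fib_naive(n - 1, calls) + fib_naive(n - 2, calls)


calls = Counter()
print(fib_naive(20, calls))                        # 6765
print(calls[19], calls[18], calls[17], calls[16])  # 1 2 3 5
print(sum(calls.values()))                         # 21891 calls in total
```

The repeat counts for F(N-1), F(N-2), F(N-3), ... are themselves Fibonacci numbers, which is exactly why the total work grows exponentially.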

But the formula can also be read as: "To compute the next F(N), take the two you've most recently computed (including the base cases) and add them up." That is, remember the last two results for use this time. Updating that two-number table (DP-speak) is O(1) per step, so we get an O(N) scheme for F(N).
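A minimal sketch of that O(N) scheme in Python, again assuming the base cases F(0) = 0 and F(1) = 1; the pair `(prev, curr)` plays the role of the two-number table, and `fib_dp` is a hypothetical name.

```python
def fib_dp(n: int) -> int:
    """O(N) Fibonacci keeping only the two most recent values (the 'table')."""
    prev, curr = 0, 1  # assumed base cases: F(0) = 0, F(1) = 1
    for _ in range(n):
        # slide the two-number table one step: (F(k), F(k+1)) -> (F(k+1), F(k+2))
        prev, curr = curr, prev + curr
    return prev  # after n steps, prev holds F(n)


print([fib_dp(k) for k in range(10)])  # [0, 1, 1, 2, 3, 5, 8, 13, 21, 34]
print(fib_dp(50))                      # 12586269025
```

Each loop iteration does constant work, so the whole computation is linear in N, and only two numbers are stored no matter how large N gets.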

The idea of a subroutine remembering previously computed answers is called memoizing, and some languages allow designating any function a "memo function".
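Python's standard library offers this through the `functools.lru_cache` decorator, which caches a function's results by argument. A minimal sketch, keeping the literal recursive definition and the same assumed base cases (`fib_memo` is a hypothetical name):

```python
from functools import lru_cache


@lru_cache(maxsize=None)  # unbounded cache: each F(k) is computed at most once
def fib_memo(n: int) -> int:
    if n < 2:  # assumed base cases: F(0) = 0, F(1) = 1
        return n
    return fib_memo(n - 1) + fib_memo(n - 2)


print(fib_memo(100))                 # 354224848179261915075
print(fib_memo.cache_info().misses)  # 101: one real computation per F(0)..F(100)
```

The recursion depth still grows linearly with N, so for very large N this version can hit Python's recursion limit even though the running time is now O(N).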

Another Example

For the last several years, a popular assignment, lab, or exam question has been of the form "Compute this recurrence using a table, not recursion." This gets at the DP idea and may be inspired by Weiss pp. 463-5, which gives a literal-minded recursive program for the average-case quicksort recurrence as well as a DP solution.

The recurrence is

$$T(0) = 1, \qquad T(N) = \frac{2}{N} \sum_{i=0}^{N-1} T(i) + N \quad (N \ge 1)$$

or, as a literal recursive function:

    function T(N)
        if N == 0 return 1;
        sum = 0;
        for i = 0 to (N-1)
            sum += T(i);
        return (2/N)*sum + N
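A direct Python transcription of that pseudocode, sketched under the assumption that exact `fractions.Fraction` arithmetic is wanted so that 2/N is not truncated; it also counts calls. The name `t_literal` is hypothetical.

```python
from fractions import Fraction


def t_literal(n: int, calls: list) -> Fraction:
    """Literal recursion for T(N) = (2/N) * sum_{i<N} T(i) + N, T(0) = 1."""
    calls[0] += 1
    if n == 0:
        return Fraction(1)
    total = sum((t_literal(i, calls) for i in range(n)), Fraction(0))
    return Fraction(2, n) * total + n


calls = [0]
print(t_literal(4, calls))  # 83/6
print(calls[0])             # 16 == 2**4: the call count doubles with each N
```

Because calls(N) = 1 + calls(0) + ... + calls(N-1), the number of calls is exactly 2^N.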

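But the literal version is exponential: T(N) calls T(0) through T(N-1), each of which makes its own recursive calls, so the call tree doubles in size with each increase in N. We've seen this tree before but I can't recall where...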

Clearly our table should contain T(0), T(1), etc., and Weiss gives a double for-loop O(N^2) solution that can be improved to O(N).
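Sketches of both table-based versions, again assuming exact `Fraction` arithmetic. The function names are hypothetical, and the O(N) variant shows one way to make the improvement (keeping a running sum of the table), not necessarily Weiss's code.

```python
from fractions import Fraction


def t_quadratic(n: int) -> Fraction:
    """Table of T(0..n); the inner loop re-sums the table each time: O(N^2)."""
    t = [Fraction(1)] + [Fraction(0)] * n  # t[0] = T(0) = 1
    for k in range(1, n + 1):
        total = Fraction(0)
        for i in range(k):
            total += t[i]
        t[k] = Fraction(2, k) * total + k
    return t[n]


def t_linear(n: int) -> Fraction:
    """Same recurrence, but keep a running sum of T(0..k-1): O(N)."""
    t = Fraction(1)        # T(0)
    running = Fraction(1)  # T(0) + ... + T(k-1)
    for k in range(1, n + 1):
        t = Fraction(2, k) * running + k
        running += t
    return t


print([str(t_linear(k)) for k in range(5)])  # ['1', '3', '6', '29/3', '83/6']
```

The only change from the double loop is that the sum over earlier entries is carried forward instead of recomputed, which removes the inner loop.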

Longest Common Subsequence Problem

We notice two things:

1. Suppose that two sequences both end in the same element. To find their LCS, shorten each sequence by removing the last element, find the LCS of the shortened sequences, and append the removed element to that LCS.

2. Suppose that the two sequences X and Y do not end in the same symbol. Then the LCS of X and Y is the longer of the two sequences $\mathrm{LCS}(X_n, Y_{m-1})$ and $\mathrm{LCS}(X_{n-1}, Y_m)$.

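These lead to the following equations, where $X_i$ denotes the first $i$ elements $x_1 \ldots x_i$ of X (likewise $Y_j$), $\varepsilon$ is the empty sequence, and $\|$ means "append":

$$
\mathrm{LCS}(X_i, Y_j) =
\begin{cases}
\varepsilon & \text{if } i = 0 \text{ or } j = 0 \\
\mathrm{LCS}(X_{i-1}, Y_{j-1}) \,\|\, x_i & \text{if } x_i = y_j \\
\text{longer of } \mathrm{LCS}(X_i, Y_{j-1}) \text{ and } \mathrm{LCS}(X_{i-1}, Y_j) & \text{if } x_i \ne y_j
\end{cases}
$$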

They in turn dictate what to put in the table and how to use it. Not difficult, but tracing back through the table to recover the LCS itself is interesting...
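A minimal Python sketch of both steps, assuming the table stores LCS lengths (not the subsequences themselves) and that ties in the traceback are broken toward dropping an element of X; `lcs` is a hypothetical name.

```python
def lcs(x, y):
    """Longest common subsequence of sequences x and y, as a list."""
    n, m = len(x), len(y)
    # L[i][j] = length of LCS(X_i, Y_j); row 0 and column 0 are the i=0 / j=0 cases
    L = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            if x[i - 1] == y[j - 1]:  # x_i = y_j: extend the diagonal entry
                L[i][j] = L[i - 1][j - 1] + 1
            else:                     # x_i != y_j: take the longer neighbor
                L[i][j] = max(L[i][j - 1], L[i - 1][j])
    # Trace back from L[n][m] to recover one LCS (ties broken toward i - 1).
    out = []
    i, j = n, m
    while i > 0 and j > 0:
        if x[i - 1] == y[j - 1]:
            out.append(x[i - 1])
            i, j = i - 1, j - 1
        elif L[i - 1][j] >= L[i][j - 1]:
            i -= 1
        else:
            j -= 1
    out.reverse()  # elements were collected from the end backward
    return out


print("".join(lcs("AGGTAB", "GXTXAYB")))  # GTAB
```

Filling the table takes O(nm) time; the traceback walks at most n + m steps. When several LCSs exist, the tie-breaking rule decides which one comes back.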

Wikipedia to the Rescue

Ordering Matrix Multiplications

Consider a column N-vector A and the product $AA^TA$. Done in the order $(AA^T)A$, we get an NxN matrix times A, about $2N^2$ scalar multiplications in all. Done in the order $A(A^TA)$, we get A times a scalar, about $2N$. Hmm.

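The number of possible orders for multiplying a string of N matrices can be expressed by the recurrence

$$T(1) = 1, \qquad T(N) = \sum_{i=1}^{N-1} T(i)\,T(N-i) \quad (N \ge 2),$$

since the last multiplication performed splits the string just after some matrix i.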

The solutions are the Catalan numbers ($T(N) = C_{N-1}$), which look like

1, 1, 2, 5, 14, 42, 132, 429, 1430, 4862, 16796, ...

and have several nice closed forms involving factorials, binomial coefficients, or products. Asymptotically, they grow as

$$C_n \sim \frac{4^n}{n^{3/2}\sqrt{\pi}}.$$

Nice article in Wikipedia.
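Filling in T(1), T(2), ... is itself a small DP, since each entry uses only earlier ones. A minimal sketch (the name `chain_orders` is hypothetical) that cross-checks the table against the standard closed form $C_n = \binom{2n}{n}/(n+1)$ using `math.comb`:

```python
from math import comb


def chain_orders(n_max: int) -> list:
    """T(1..n_max) from T(1) = 1, T(N) = sum_{i=1}^{N-1} T(i) T(N-i), via a table."""
    T = [0, 1] + [0] * (n_max - 1)  # T[0] unused; T[1] = 1
    for N in range(2, n_max + 1):
        T[N] = sum(T[i] * T[N - i] for i in range(1, N))
    return T[1:]


orders = chain_orders(11)
print(orders)  # [1, 1, 2, 5, 14, 42, 132, 429, 1430, 4862, 16796]
# Cross-check: T(N) = C(N-1), with C(n) = binom(2n, n) / (n + 1)
print(all(t == comb(2 * (N - 1), N - 1) // N for N, t in enumerate(orders, start=1)))  # True
```

The table takes O(N^2) additions, whereas the counts themselves grow roughly like 4^N, so enumerating the orders one by one is hopeless for long chains.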

The DP solution represents the sizes and left-to-right arrangement of the matrices to be multiplied with an array c, which need only be N+1 long for N matrices: after knowing the leftmost matrix's row count (stored in c[0]), we know its column count must equal the 2nd matrix's row count, and so on. So

c = [3 4 5 2 7]

represents a string of matrices sized (3,4), (4,5), (5,2), (2,7); in general, matrix i is c[i-1] x c[i].

Then our optimization equation (vital for the DP solution) says that, for a subsequence of matrices running from left to right (left < right), the minimum number of multiplies $M_{\text{left},\text{right}}$ in an optimal ordering satisfies

$$M_{\text{left},\text{right}} = \min_{\text{left} \le i < \text{right}} \left( M_{\text{left},i} + M_{i+1,\text{right}} + c[\text{left}-1] \cdot c[i] \cdot c[\text{right}] \right),$$

with $M_{i,i} = 0$ for a single matrix.

There are clearly only about $N^2/2$ different choices of left and right for $M_{\text{left},\text{right}}$, so a table could work; in fact we only need an upper-triangular table. Also, if right - left = k, then the only $M_{x,y}$ values needed to compute $M_{\text{left},\text{right}}$ satisfy y - x < k, which implies the order of filling up the table: by increasing k, one diagonal at a time.
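A minimal Python sketch of that table fill, assuming 1-based matrix numbering with matrix k sized c[k-1] x c[k]. It also records the best split i for each entry so the ordering can be rebuilt. The names `matrix_chain` and `order` are hypothetical; this is not a transcription of Weiss's figure.

```python
import math


def matrix_chain(c):
    """Min scalar multiplies for A_1 ... A_n, where A_k is c[k-1] x c[k]."""
    n = len(c) - 1
    # M[left][right] and the best split; only left <= right (upper triangle) is used
    M = [[0] * (n + 1) for _ in range(n + 1)]
    split = [[0] * (n + 1) for _ in range(n + 1)]
    for k in range(1, n):                   # k = right - left, increasing
        for left in range(1, n - k + 1):
            right = left + k
            M[left][right] = math.inf
            for i in range(left, right):    # left <= i < right
                cost = M[left][i] + M[i + 1][right] + c[left - 1] * c[i] * c[right]
                if cost < M[left][right]:
                    M[left][right], split[left][right] = cost, i
    return M[1][n], split


def order(split, left, right):
    """Rebuild the optimal parenthesization from the recorded splits."""
    if left == right:
        return f"A{left}"
    i = split[left][right]
    return f"({order(split, left, i)} {order(split, i + 1, right)})"


cost, split = matrix_chain([3, 4, 5, 2, 7])
print(cost, order(split, 1, 4))  # 106 ((A1 (A2 A3)) A4)
```

The same function reproduces the $AA^TA$ example: with N = 100, `matrix_chain([100, 1, 100, 1])[0]` is 200, versus 20000 for the $(AA^T)A$ order.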

For N matrices, an NxN two-dimensional table can store all the needed sub-results (and the final answer) with a simple O(N^3) program (Fig. 10.46). This code might be a good control-structure template for table construction and use in the next programming project, optimal binary search trees.

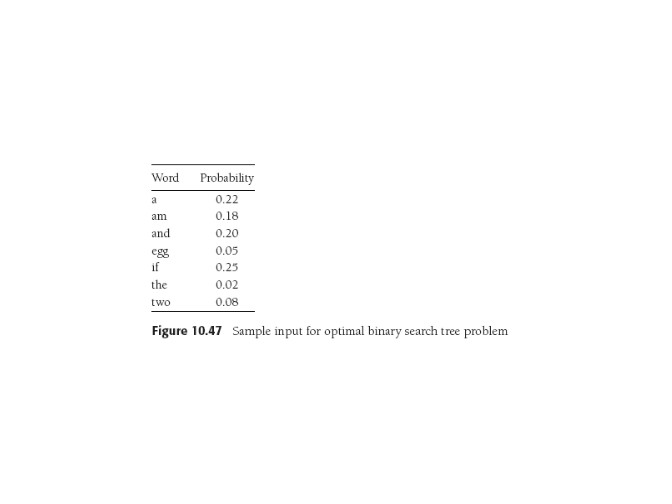

Optimal BST -- Last Project

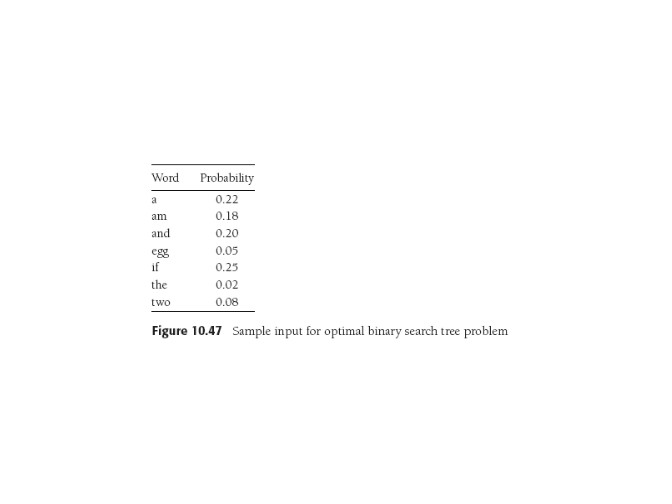

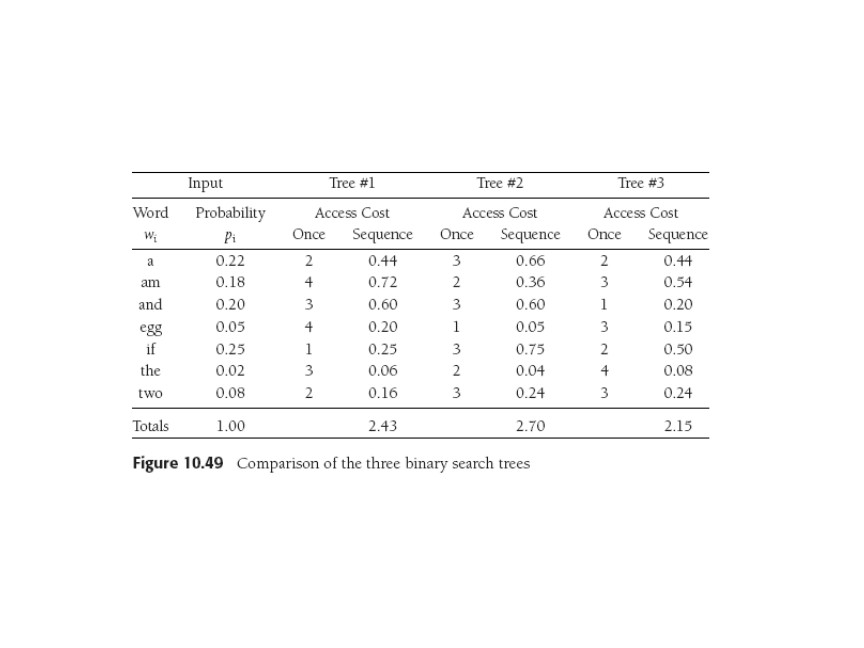

Here's a set of words and associated probabilities:

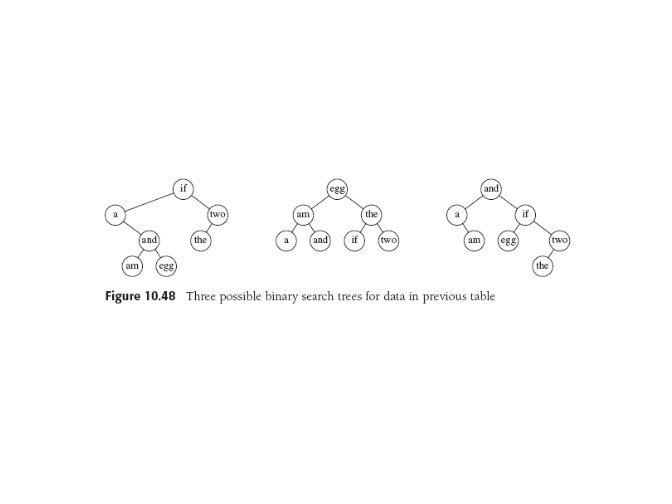

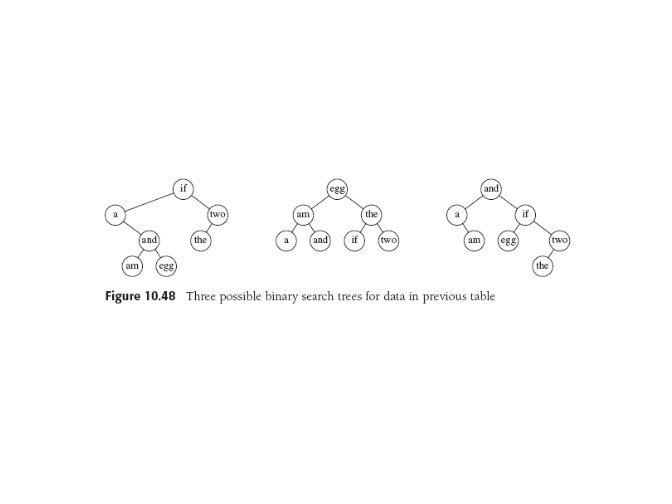

We've seen a lot of search trees, including balanced and Huffman trees. Let's build one with this data using a greedy strategy: the highest-probability nodes are highest in the tree. For comparison, we consider a perfectly balanced tree. But neither of these is optimal.

Below: the greedy, balanced, and optimal BSTs for the above data.

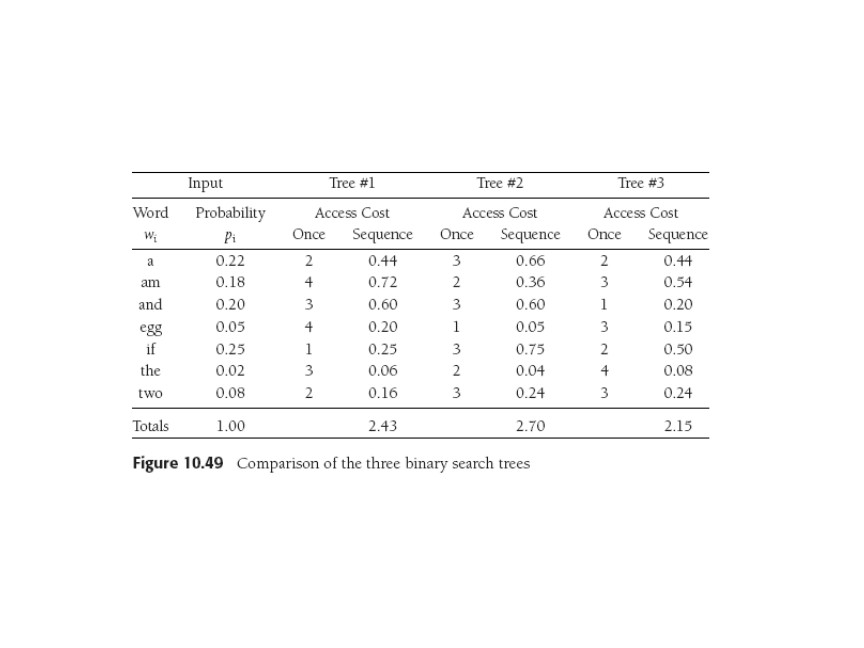

Optimal? In the sense of minimizing the access cost, which we can compute probabilistically as

$$\sum_i p_i (1 + d_i),$$

since it costs $1 + d_i$ accesses to find element i at depth $d_i$. Here are the figures.
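A minimal sketch of that cost formula in Python, assuming a BST is given as nested `(key, left, right)` tuples with `None` for an empty subtree. The function name and the three-key data are hypothetical, not the word list from the notes.

```python
def access_cost(tree, p, depth=0):
    """Expected cost sum_i p_i * (1 + d_i) of a BST given as (key, left, right) tuples."""
    if tree is None:
        return 0.0
    key, left, right = tree
    return (p[key] * (1 + depth)
            + access_cost(left, p, depth + 1)
            + access_cost(right, p, depth + 1))


# Hypothetical data, not the word list from the notes.
p = {"a": 0.5, "b": 0.2, "c": 0.3}
balanced = ("b", ("a", None, None), ("c", None, None))
greedy = ("a", None, ("c", ("b", None, None), None))  # highest probability nearest the root
print(access_cost(balanced, p), access_cost(greedy, p))  # about 1.8 and 1.7
```

In this toy data the greedy tree happens to beat the balanced one; with other probabilities either can lose, which is why a systematic method is needed.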

Finding Optimal BST

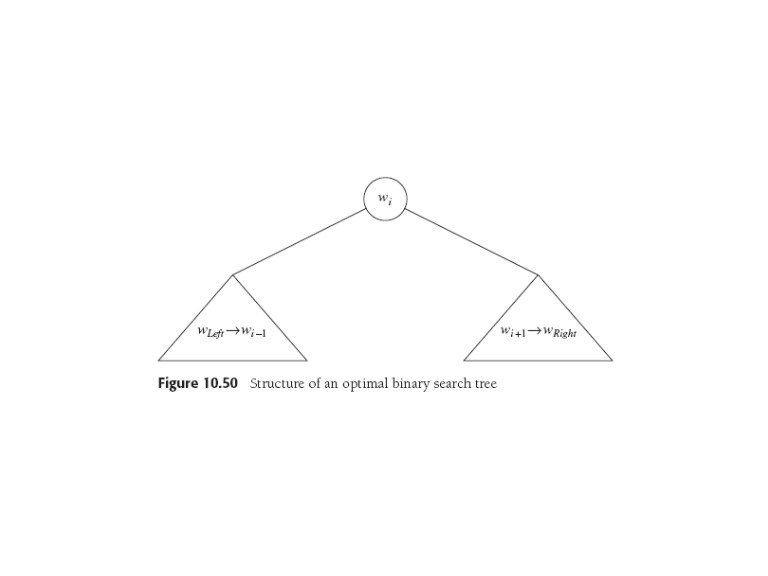

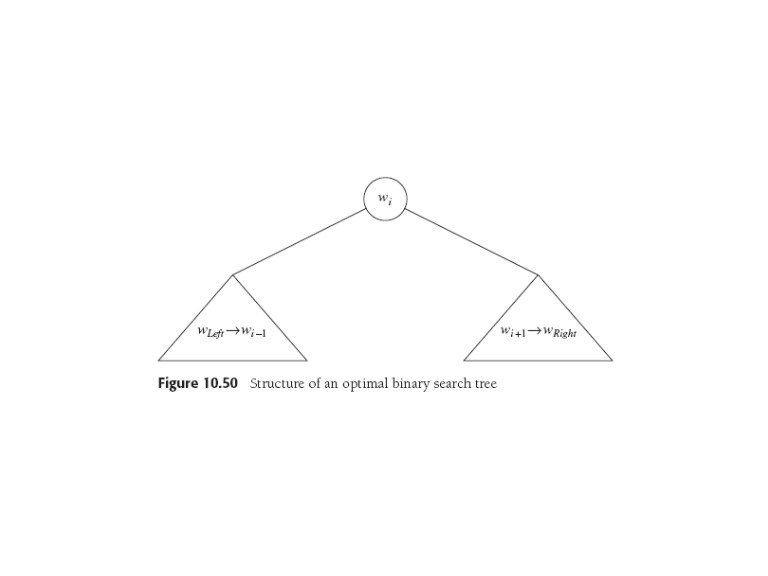

Two key observations, which sound hauntingly familiar:

1. To be a BST, if the tree entries are sorted and labeled w(L) to w(R) and the root of the optimal tree is some w(i), then the left subtree must contain w(L), ..., w(i-1) and the right one must contain w(i+1), ..., w(R).

2. Both these subtrees must be optimal too (aha!); otherwise they could be replaced by better ones and the overall performance would improve. Here's the picture:

From this it's not hard to derive the formula for the cost C(L, R) of that tree, given that we know the cost of each subtree and that, on average, it costs an extra $p_j$ for each entry j that is:

- the root, or

- in one of the two subtrees, now one level down (an added depth of 1).

$$C(L,R) = \min_{L \le i \le R} \left[ C(L,i-1) + C(i+1,R) + \sum_{j=L}^{R} p_j \right], \qquad C(L, L-1) = 0.$$

The last sum, over j such that L ≤ j ≤ R, captures the fact that all the nodes in the left and right subtrees are one level deeper, and that the probability of the root must be added in too. An empty subtree, such as C(L, L-1), costs 0.

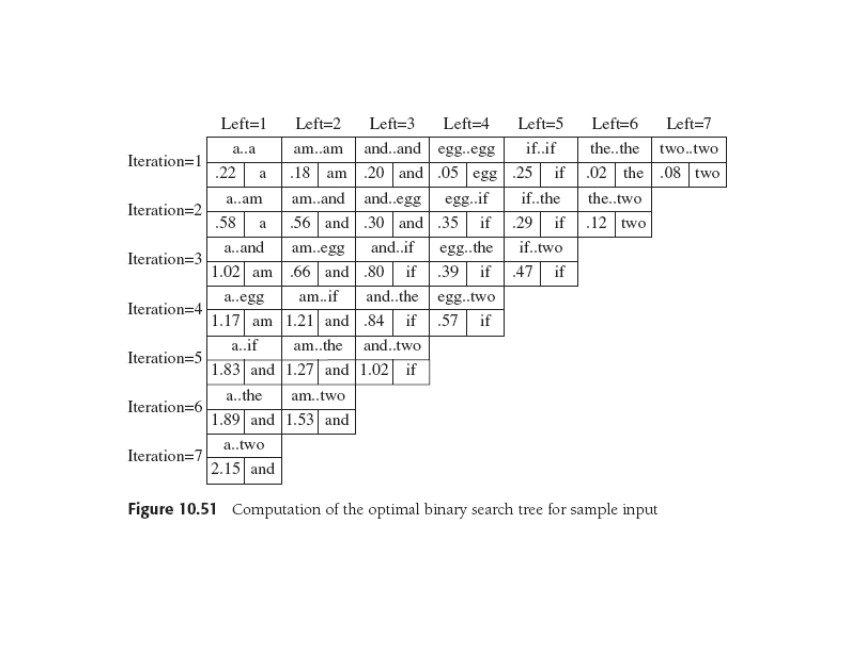

The optimal solutions to the subproblems C(·,·) above are found in the table, which will be upper triangular, and the sum term is constant over the minimization. The minimization process evaluates the bracketed formula for each i and puts the minimum into the table, along with the root that produces that minimum cost. So that's the hidden work you don't see when looking at the table: each entry comes from a minimization (sometimes a trivial one).
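A minimal Python sketch of that table fill, assuming 1-based keys with probabilities `p[1..n]` (`p[0]` unused) and float costs. The function name and the three-key example are hypothetical; like the matrix example, it is O(N^3) as written.

```python
def optimal_bst(p):
    """p[1..n] = access probabilities of sorted keys (p[0] unused).
    Returns cost and root tables C[L][R], root[L][R] for 1 <= L <= R <= n."""
    n = len(p) - 1
    # C[L][L-1] = 0 represents an empty subtree, so index up to n + 1
    C = [[0.0] * (n + 2) for _ in range(n + 2)]
    root = [[0] * (n + 2) for _ in range(n + 2)]
    for size in range(1, n + 1):            # fill by increasing R - L
        for L in range(1, n - size + 2):
            R = L + size - 1
            weight = sum(p[L:R + 1])         # constant over the minimization
            best, best_i = float("inf"), L
            for i in range(L, R + 1):        # try each key as the root
                cost = C[L][i - 1] + C[i + 1][R] + weight
                if cost < best:
                    best, best_i = cost, i
            C[L][R], root[L][R] = best, best_i
    return C, root


keys = ["a", "b", "c"]    # hypothetical sorted keys
p = [0.0, 0.5, 0.2, 0.3]  # hypothetical probabilities, p[0] unused
C, root = optimal_bst(p)
print(round(C[1][3], 6), keys[root[1][3] - 1])  # 1.7 a
```

The `root` table is the part that records the "hidden work": it remembers which i won each minimization, which is what reconstructing the tree needs.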

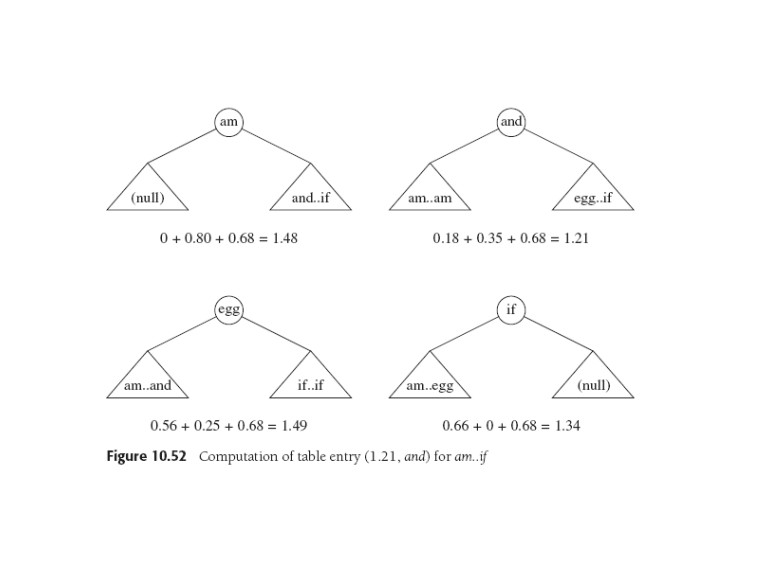

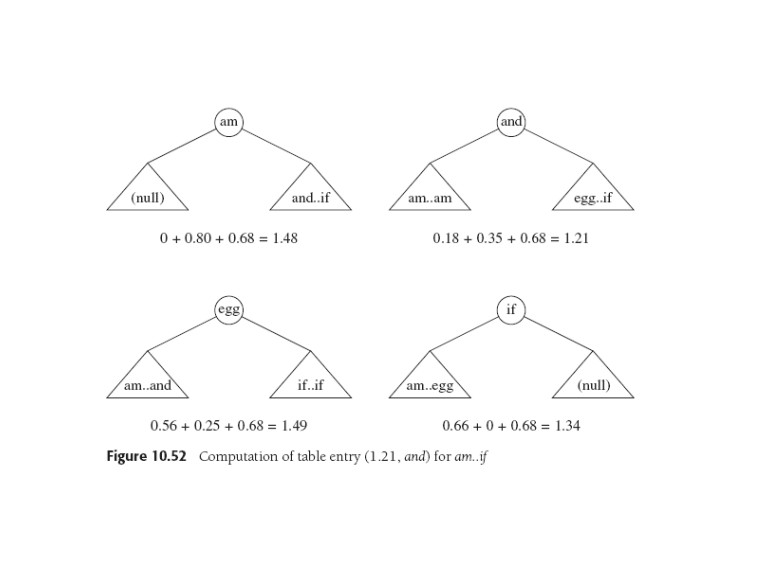

The hidden work, for example, looks like this, with the two subtree costs followed by the common "root + 1-deeper" cost of 0.68.

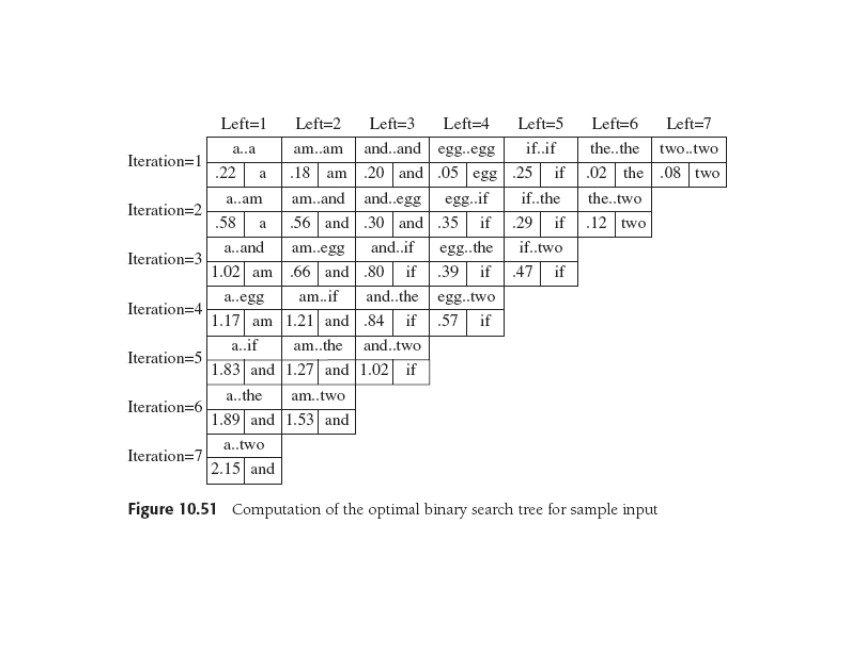

Below is the table. For every subrange of words in the original list, it holds the cost and root of the optimal BST for that subrange. The previous minimization produced the entry "am...if" in iteration 4.

Where does the final tree come from? It's not obvious from the table, but the root is. In the last minimization, the lowest-cost tree puts "and" at the root, so the original list tells us that a...am must be in the left subtree and egg...two in the right. The table can then be used to look up these ranges, and thus the roots of their trees, and recursively reconstruct the whole tree. Or maybe there's a way to keep all we need as we go. Perhaps the code in Fig. 10.46 will help, because this approach, like the matrix multiplication order example, is an O(N^3) algorithm that may be improved to O(N^2).
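A minimal sketch of that recursive reconstruction, assuming a root table indexed `root[L][R]` (1-based) as the C(L, R) minimization would fill it. The function name and the hand-filled table are hypothetical; the table shown is the one the recurrence gives for three keys a, b, c with probabilities 0.5, 0.2, 0.3.

```python
def build_tree(root, keys, L, R):
    """Rebuild the optimal BST for keys[L..R] (1-based) from a root table.
    Returns nested (key, left, right) tuples, or None for an empty range."""
    if L > R:
        return None
    i = root[L][R]  # the winning root for this subrange
    return (keys[i - 1],
            build_tree(root, keys, L, i - 1),   # keys L..i-1 go left
            build_tree(root, keys, i + 1, R))   # keys i+1..R go right


# Hypothetical root table for keys a, b, c with probabilities 0.5, 0.2, 0.3
# (only entries with L <= R are used).
keys = ["a", "b", "c"]
root = [[0, 0, 0, 0],
        [0, 1, 1, 1],
        [0, 0, 2, 3],
        [0, 0, 0, 3]]
print(build_tree(root, keys, 1, 3))
# ('a', None, ('c', ('b', None, None), None))
```

Each call reads one table entry and places one key, so the rebuild costs O(N), small next to building the table itself.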

Last update: 11.26.14