Automatable Inference: Resolution

Motivation and Outline

Non-clausal inference seems daunting. Thanks to the work of

Robinson, we have RTP (Resolution Theorem Proving), a

computationally feasible, semi-decidable, complete, and sound system

(the proof uses ideas due to Herbrand).

After conversion to CNF, FOPC clauses look almost exactly like PC

clauses with variables and functions and predicates. Thus resolution

is very close to what we've seen. The main technical issue is

the matching of "cancelling" literals, via the unification we

learned for Prolog, so really this material is mostly familiar.

The two main techniques are resolution and unification,

at least the second of which we know from Prolog. Coming up:

- Running Start -- Revisit last time

- Historical, Methodological Background

- The resolution inference rule

- Resolution proofs in PC

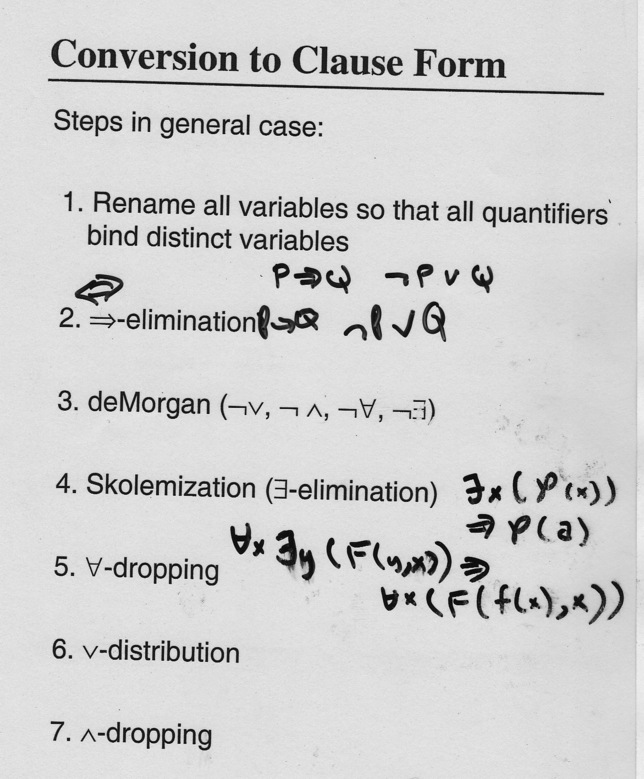

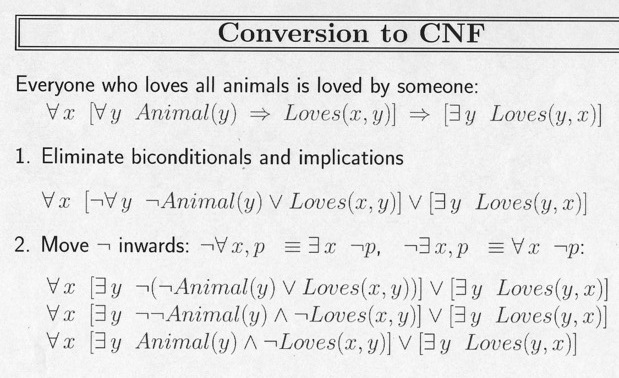

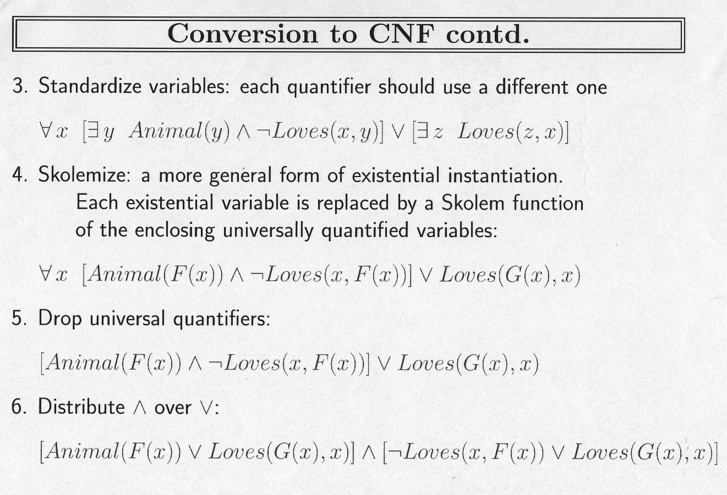

- Recap of CNF conversion with Skolemization (the third, last

trick we need).

- Resolution proofs in FOL

John Alan Robinson and Resolution

Robinson

"Robinson was born in Yorkshire, England in 1930 and left for the

United States in 1952 with a classics degree from Cambridge

University. He studied philosophy at the University of Oregon before

moving to Princeton University where he received his PhD in philosophy

in 1956. He then worked at Du Pont as an operations research analyst,

where he learned programming and taught himself mathematics. He moved

to Rice University in 1961, spending his summers as a visiting

researcher at the Argonne National Laboratory's Applied Mathematics

Division. He moved to Syracuse University as Distinguished Professor

of Logic and Computer Science in 1967 and became professor emeritus in

1993."

--Wikipedia

Resolution

- Simple, efficient, sound, complete... dominates AI reasoning,

automated logical proof

- Produces proofs by refutation: to prove S from KB,

add ∼ S to KB and show that the resulting set of clauses is inconsistent.

- CNF ("Clause Normal Form" or "Conjunctive Normal Form") means simplicity:

no issues of how to represent knowledge

- Resolution is the only rule: no decisions about which rule to apply, hence simplicity

- Every FOL sentence is convertible to CNF.

- By keeping track of the substitutions (of values for variables)

in the proof, "proved or disproved" can be extended to "question

answering"

(e.g. Prolog).

- Search required, however: which two clauses to resolve? Result

makes another clause (in general), so the KB typically grows,

complicating search... hence generations of research on RTP proof

techniques.

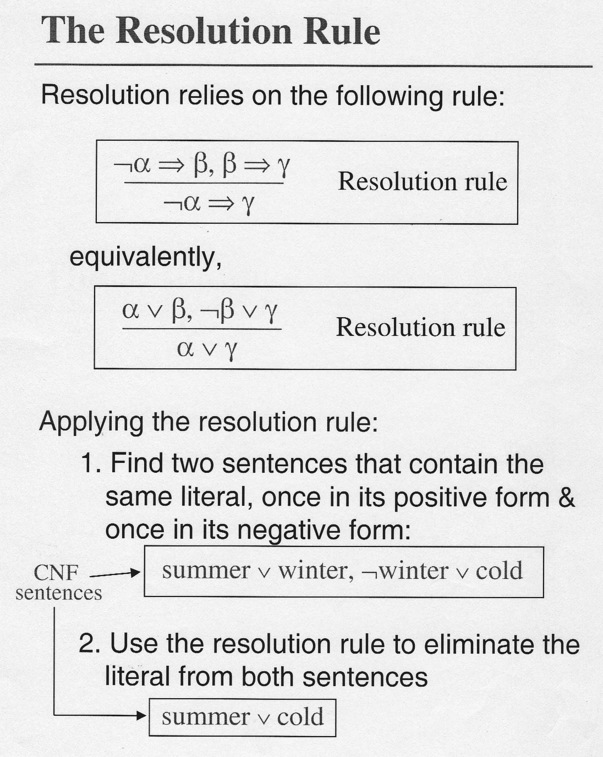

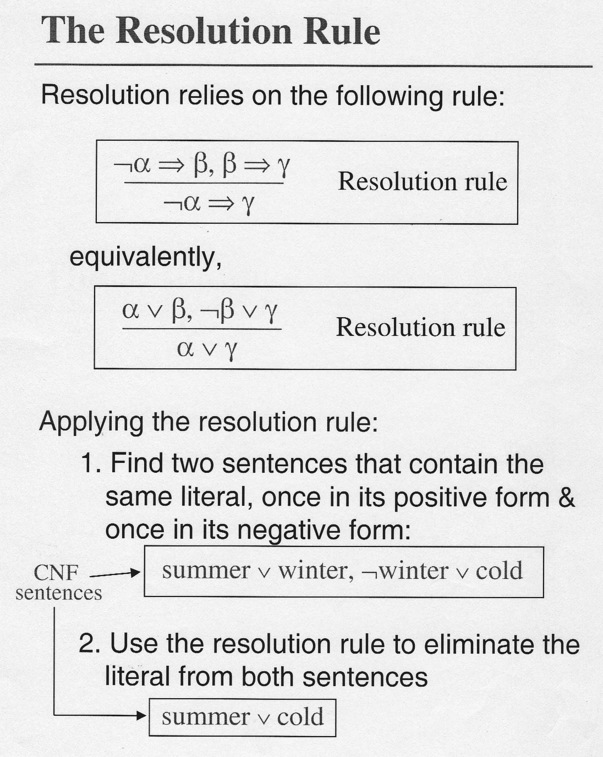

Resolution Rule

The scan below shows how resolution is closely related to the true inference

rule (mislabeled here) of "transitivity of implication".

Thus "unit" resolution produces a new clause with one fewer literal than

its longer parent. As we have seen, it's closely related to modus ponens.

Modus Ponens:

(A ⇒ B), A

-----------------

B

Resolution:

(∼ A ∨ B), A

-----------------

B
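
Here's a minimal Python sketch of the propositional rule above. The representation is an assumption made for illustration, not something from the slides: a clause is a frozenset of literal strings, a leading "~" marks negation, and `negate` and `resolve` are hypothetical helper names.

```python
def negate(literal: str) -> str:
    """Return the complementary literal; a leading '~' marks negation."""
    return literal[1:] if literal.startswith("~") else "~" + literal


def resolve(c1: frozenset, c2: frozenset) -> list:
    """Return every resolvent of clauses c1 and c2 (possibly none)."""
    resolvents = []
    for literal in c1:
        if negate(literal) in c2:
            # Cancel the complementary pair and merge the leftovers.
            resolvents.append((c1 - {literal}) | (c2 - {negate(literal)}))
    return resolvents


# Unit resolution, the modus ponens look-alike: (~A v B), A gives B.
unit = resolve(frozenset({"~A", "B"}), frozenset({"A"}))
# Transitivity of implication: (~A v B), (~B v C) gives (~A v C).
chain = resolve(frozenset({"~A", "B"}), frozenset({"~B", "C"}))
print(unit, chain)
```

Using sets means duplicate literals merge for free, which is all the "factoring" PC needs.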

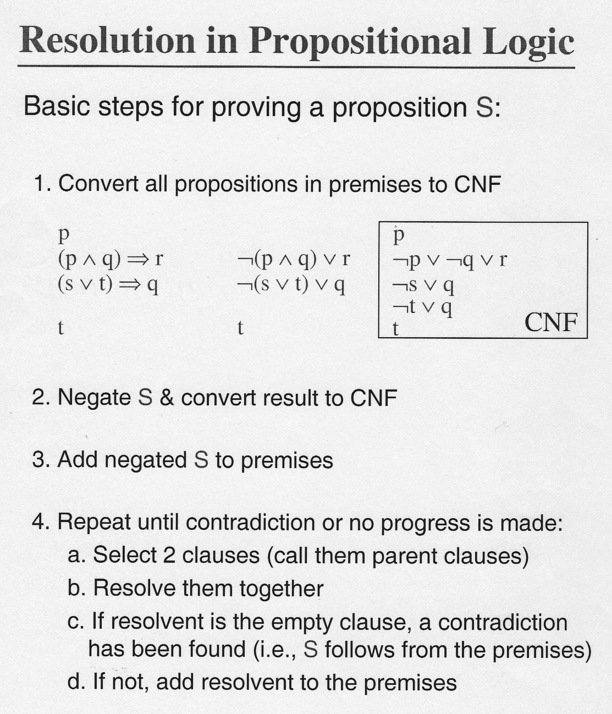

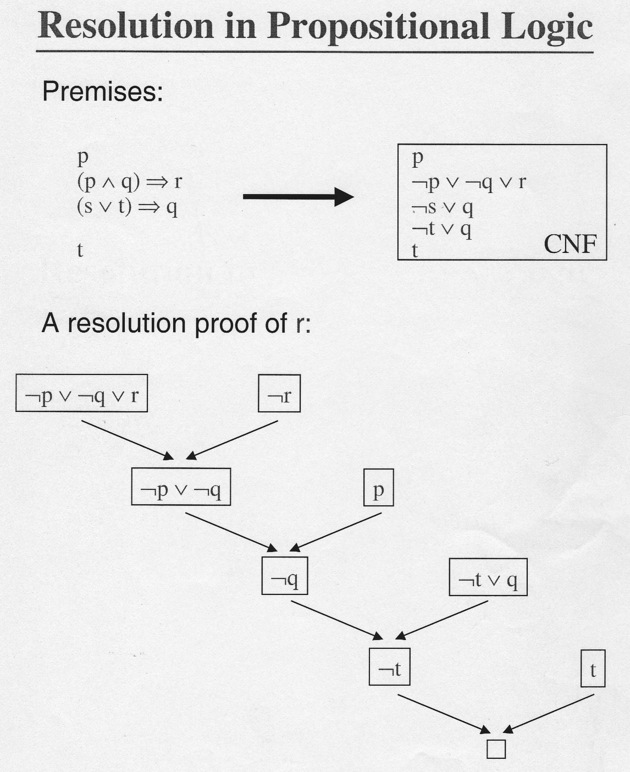

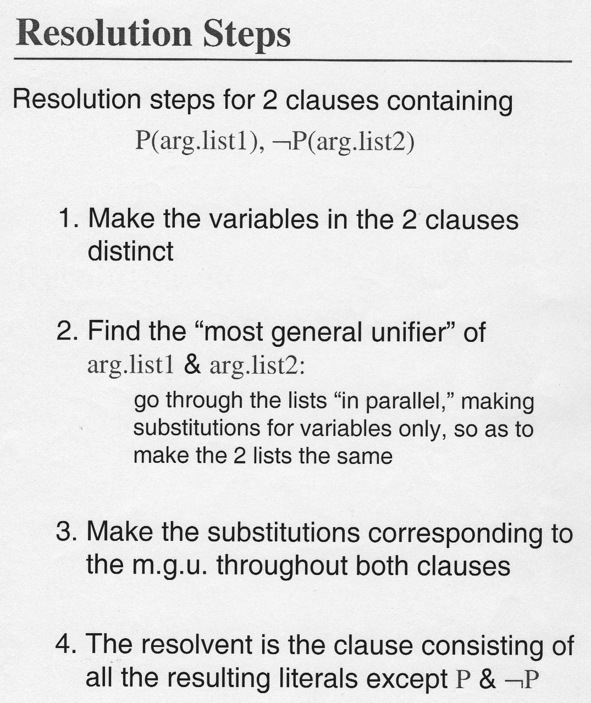

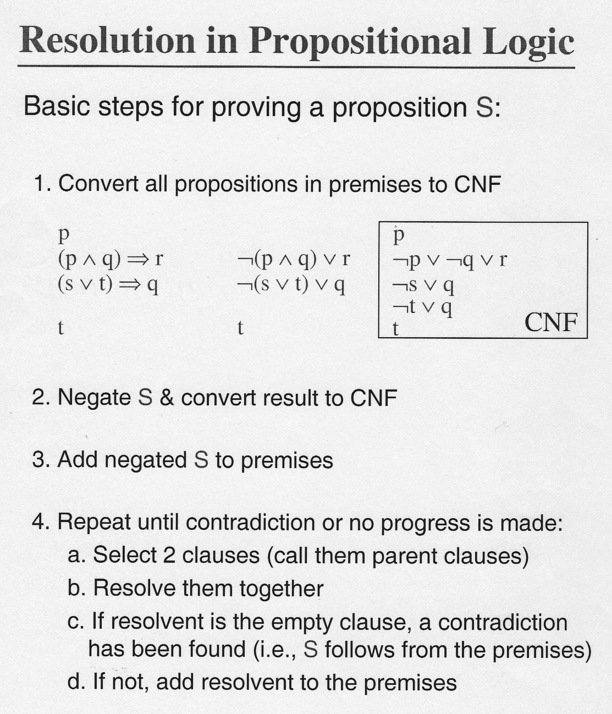

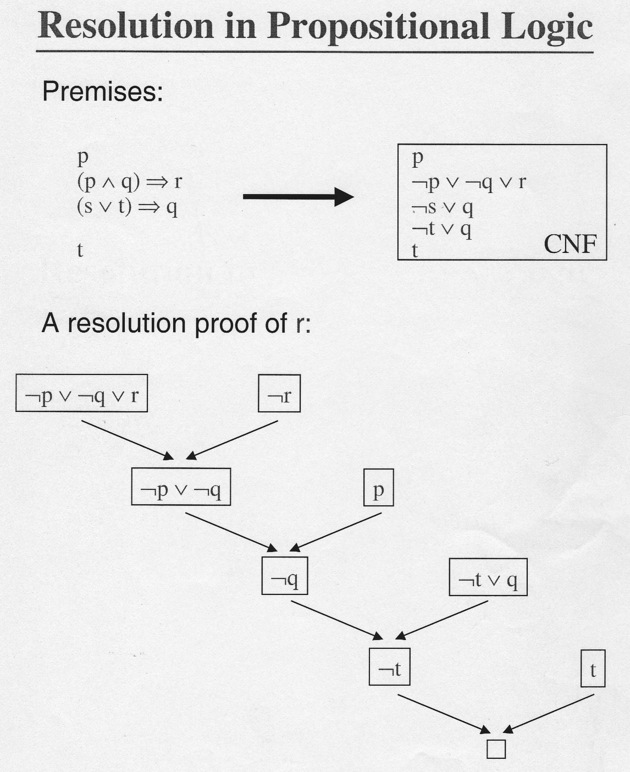

Procedure

Resolution in Predicate Logic E.g. 1

Resolution in Predicate Logic E.g. 2

Given

1. ∼ R

2. ∼ (P ∧ ∼ Q)

3. ∼ P → (R ∧ S)

Prove 4. Q by resolution.

Clauses:

1 → ∼ R (C1)

2 → ∼ P ∨ Q (C2)

3 → P ∨ R (C3a)

P ∨ S (C3b)

4 → ∼ Q (C4), denial of the conclusion

Resolutions:

C1, C3a → P (C5)

C5, C2 → Q (C6)

C4, C6 → ∅ (null, 'box')
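
The proof above can be checked mechanically. This is a sketch only, assuming clauses are frozensets of literal strings with "~" for negation; `refutes` is a hypothetical name, and it blindly saturates the clause set rather than choosing resolutions cleverly.

```python
from itertools import combinations


def negate(literal):
    return literal[1:] if literal.startswith("~") else "~" + literal


def resolve(c1, c2):
    """All resolvents of two clauses (frozensets of literal strings)."""
    return [(c1 - {lit}) | (c2 - {negate(lit)}) for lit in c1 if negate(lit) in c2]


def refutes(clauses):
    """Saturate under resolution; True once the empty clause shows up."""
    known = set(clauses)
    while True:
        new = set()
        for c1, c2 in combinations(known, 2):
            for resolvent in resolve(c1, c2):
                if not resolvent:          # empty frozenset = the 'box'
                    return True
                new.add(resolvent)
        if new <= known:                   # nothing new: no refutation exists
            return False
        known |= new


kb = [frozenset({"~R"}),        # C1
      frozenset({"~P", "Q"}),   # C2
      frozenset({"P", "R"}),    # C3a
      frozenset({"P", "S"})]    # C3b
denial = frozenset({"~Q"})      # C4
print(refutes(kb + [denial]))   # givens plus denial
print(refutes(kb))              # givens alone
```

With the denial added the empty clause is found; the givens alone saturate without it. Termination is guaranteed here only because PC has finitely many clauses over a fixed set of literals.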

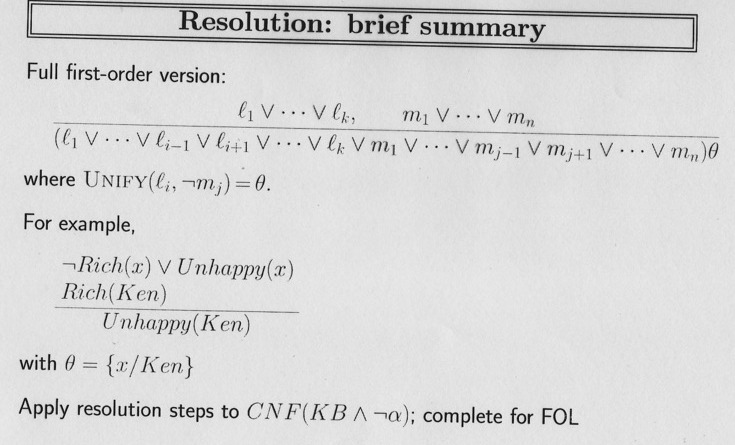

Resolution in First-Order Logic

To generalize from PC to FOL we must account for

- Predicates

- Unbound variables

- Existential, universal quantifiers

Ans. First convert to CNF, then unify variables, then apply resolution.

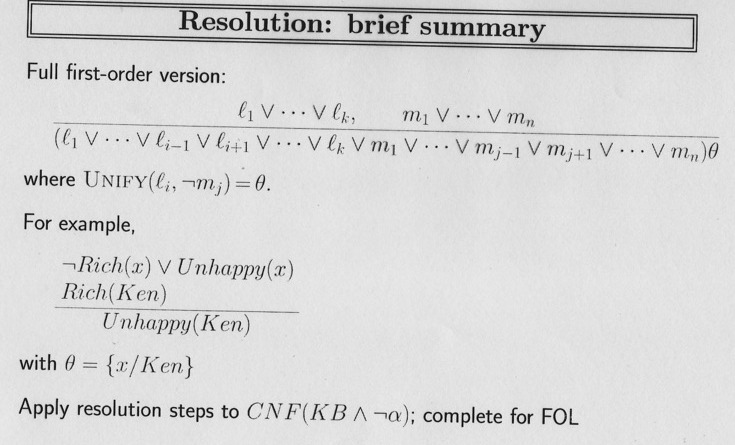

Resolution Rule

Resolution in FOL E.g. 1

Resolution is only defined between clauses.

Conversion algorithm from non-clausal form to CNF coming up soon.

\ is the substitution operator and the substitution set

in {curly brackets} is the most general unifier of the two literals being cancelled.

at-home(x) ∨ at-work(x) , ∼ at-home(Mary)

unified by {x\Mary}

at-work(Mary)

Parent(x,y) → Older(x,y) (i.e. ∼ Parent(x,y) ∨ Older(x,y)), Parent(Lulu, Fifi)

{x\Lulu, y\Fifi}

Older(Lulu, Fifi)

Loves(x, Mother-of(x)) ∨ Psycho(x),

∼ Loves(Bill, y) ∨ Sends-flowers(Bill, y)

{x\Bill, y\Mother-of(Bill)}

Psycho(Bill) ∨ Sends-flowers(Bill, Mother-of(Bill))
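
To see what the substitution is doing in these steps, here is a small sketch that takes the unifier as given (finding it is the job of unification). Everything about the encoding is an assumption for illustration: atoms are nested tuples like ("Loves", "x", ("Mother-of", "x")), a clause is a list of (is_positive, atom) pairs, and `subst` and `resolve_with` are hypothetical names.

```python
def subst(term, theta):
    """Apply substitution theta (variable name -> term) to a nested-tuple term."""
    if isinstance(term, tuple):
        # Keep the predicate/function name, rewrite the arguments.
        return (term[0],) + tuple(subst(arg, theta) for arg in term[1:])
    return theta.get(term, term)


def resolve_with(c1, c2, theta):
    """Resolve c1 and c2 under a supplied substitution; None if nothing cancels."""
    c1 = [(sign, subst(atom, theta)) for sign, atom in c1]
    c2 = [(sign, subst(atom, theta)) for sign, atom in c2]
    for sign, atom in c1:
        if (not sign, atom) in c2:
            # Drop the complementary pair, keep everything else.
            return ([lit for lit in c1 if lit != (sign, atom)]
                    + [lit for lit in c2 if lit != (not sign, atom)])
    return None


c1 = [(True, ("at-home", "x")), (True, ("at-work", "x"))]
c2 = [(False, ("at-home", "Mary"))]
first = resolve_with(c1, c2, {"x": "Mary"})

c3 = [(True, ("Loves", "x", ("Mother-of", "x"))), (True, ("Psycho", "x"))]
c4 = [(False, ("Loves", "Bill", "y")), (True, ("Sends-flowers", "Bill", "y"))]
second = resolve_with(c3, c4, {"x": "Bill", "y": ("Mother-of", "Bill")})
print(first, second)
```

The sketch assumes the supplied substitution is already fully resolved (no variable on the left also appears on the right), as the unifiers written in the examples are.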

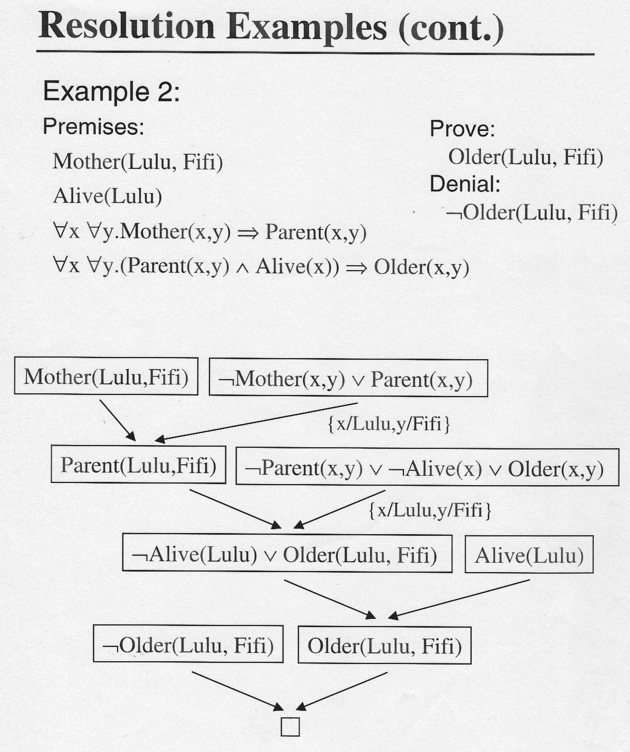

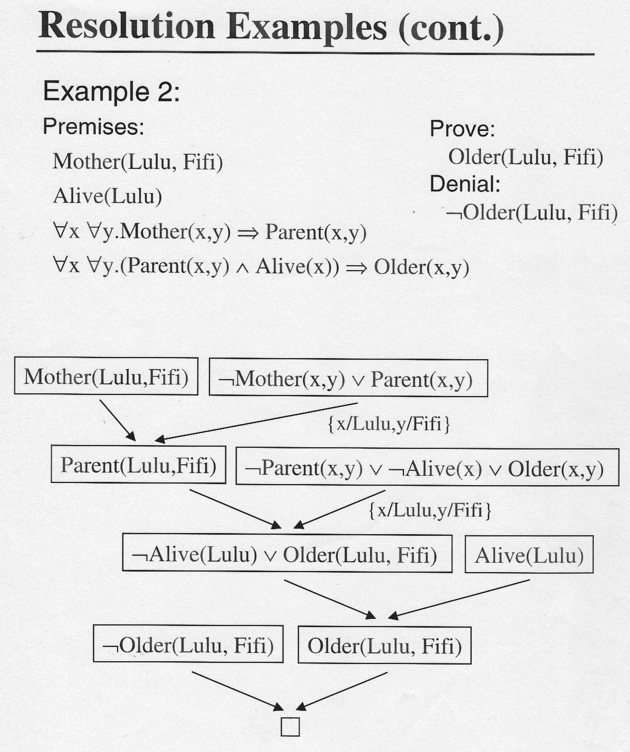

Resolution in FOL E.g. 2

Simple proof from English:

If something is intelligent (I) it has common sense (H)

Deep Blue does not have common sense

Thus Deep Blue (D) is not intelligent.

Clauses:

1. ∀ x.(I(x) → H(x)) becomes ∼ I(x) ∨ H(x) (C1)

2. ∼ H(D) becomes ∼ H(D) (C2)

3. ∼ I(D) becomes I(D) (C3), denying the conclusion

Inference

C1, C2

{x\D}

∼ I(D) (C4)

--

C3, C4

∅

Alternate notation:

% specifying the literal identifies the variable to bind

r[C1b, C2] % C1b = 2nd literal of clause C1

r[C3, C4]

One more time

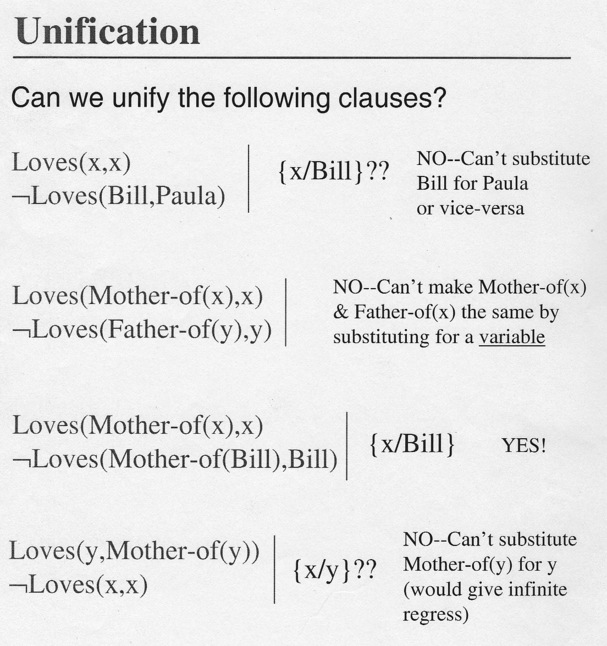

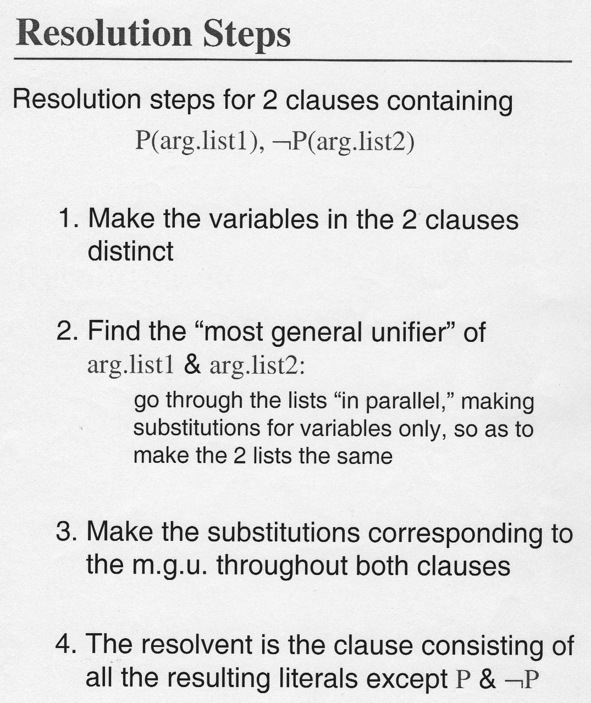

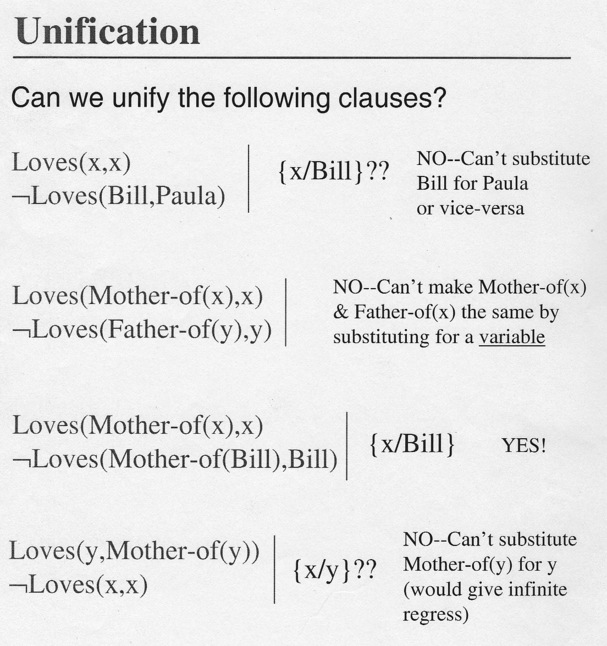

Simple Unification Egs.

The Unification Algorithm

The substitutions (e.g. {x\D}) unify the literals being matched,

that is, make them perfect matches. If such unification is not

possible, resolution cannot be applied. We're quite familiar with

unification (two-way matching) in Prolog: the = operator.

The unification algorithm recursively compares the structures of the

clauses to be matched, working across element by element.

Matching individuals, functions, and predicates must have

the same names, matching functions and

predicates must have the same number of arguments, and all bindings of

variables to values must be consistent throughout the whole match.

We know how to unify, if only because these problems are naturals on

exams. E.g.

Unify

L(x, y, f(A,y), D) with

L(z, C, f(w,u), v)

(variables in lower-case, constants in upper-case. That's normal in the

literature, but recall that Prolog's capitalization rules for functions,

variables, and constants are the opposite, so if

we wanted to check the above we'd see)

l(X,Y, f(a, Y), d) = l(Z,c,f(W,U),V).

X = Z, Y = c, W = a, U = c, V = d.
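
The recursive matching described above can be sketched in a few lines of Python. This is one textbook-style version under stated assumptions, not necessarily the algorithm on the slides: terms are nested tuples whose first element is the function or predicate name, lowercase strings are variables (the literature convention used here, not Prolog's), and `is_var`, `walk`, `occurs`, `unify`, and `subst` are hypothetical names.

```python
def is_var(t):
    """Lowercase strings are variables; anything else is a constant or compound."""
    return isinstance(t, str) and t[0].islower()


def walk(t, s):
    """Follow bindings in s until t is unbound or not a variable."""
    while is_var(t) and t in s:
        t = s[t]
    return t


def occurs(v, t, s):
    """Occurs check: does variable v appear inside term t under s?"""
    t = walk(t, s)
    return t == v or (isinstance(t, tuple) and any(occurs(v, a, s) for a in t[1:]))


def unify(a, b, s=None):
    """Return a substitution (dict) making a and b identical, or None."""
    s = {} if s is None else s
    a, b = walk(a, s), walk(b, s)
    if a == b:
        return s
    if is_var(a):
        return None if occurs(a, b, s) else {**s, a: b}
    if is_var(b):
        return None if occurs(b, a, s) else {**s, b: a}
    if (isinstance(a, tuple) and isinstance(b, tuple)
            and a[0] == b[0] and len(a) == len(b)):   # same name, same arity
        for x, y in zip(a[1:], b[1:]):
            s = unify(x, y, s)                         # bindings stay consistent
            if s is None:
                return None
        return s
    return None


def subst(t, s):
    """Apply s to term t, chasing chains of bindings."""
    t = walk(t, s)
    return (t[0],) + tuple(subst(a, s) for a in t[1:]) if isinstance(t, tuple) else t


left = ("L", "x", "y", ("f", "A", "y"), "D")
right = ("L", "z", "C", ("f", "w", "u"), "v")
theta = unify(left, right)
print(theta)
print(subst(left, theta) == subst(right, theta))
```

On the example above this yields x\z, y\C, w\A, u\C, v\D, the same bindings Prolog reports modulo capitalization. Note that an empty dict means "unifies with no bindings needed", so callers should test for `None` rather than truthiness.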

Typical Problem (Len Schubert)

P(s, G(s), t, H(s,t), u, K(s,t,u))

∼ P(v, w, M(w), x, N(w,x), y)

% the ∼ signals the pair of literals we want to

% unify so as to resolve

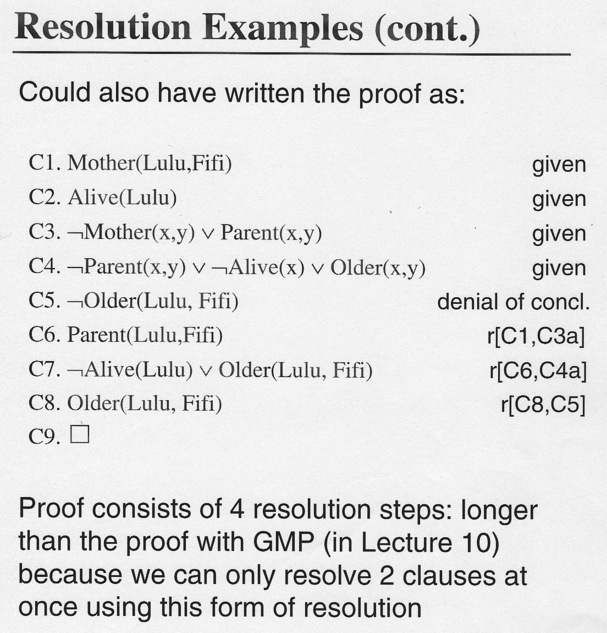

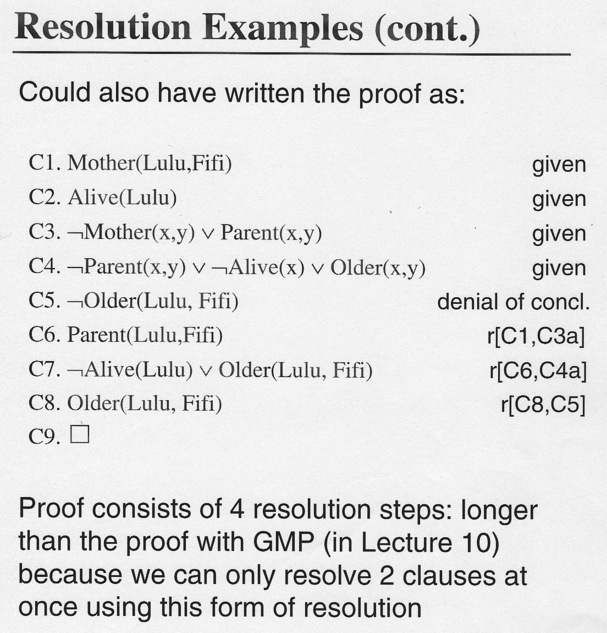

Resolution Proof in FOL E.g. 3

Resolution Proof in FOL E.g. 3

Different Notation

I count three resolutions...

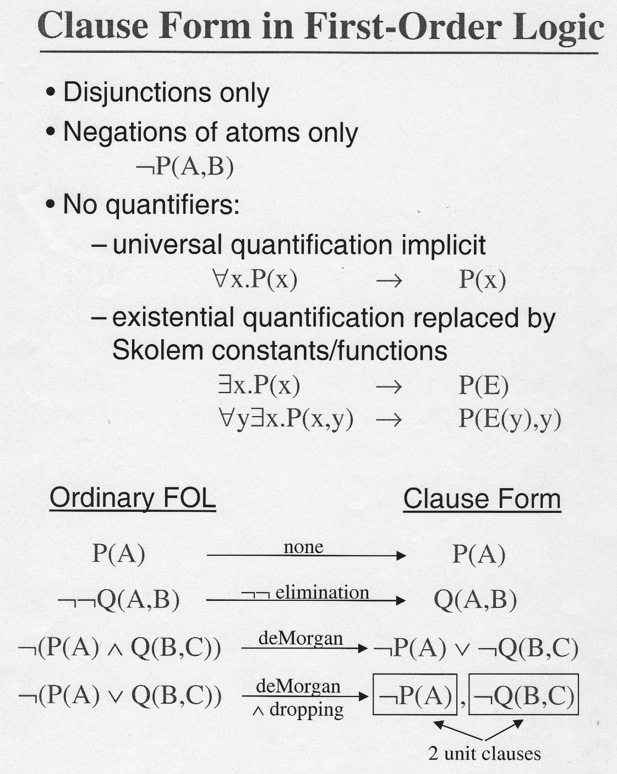

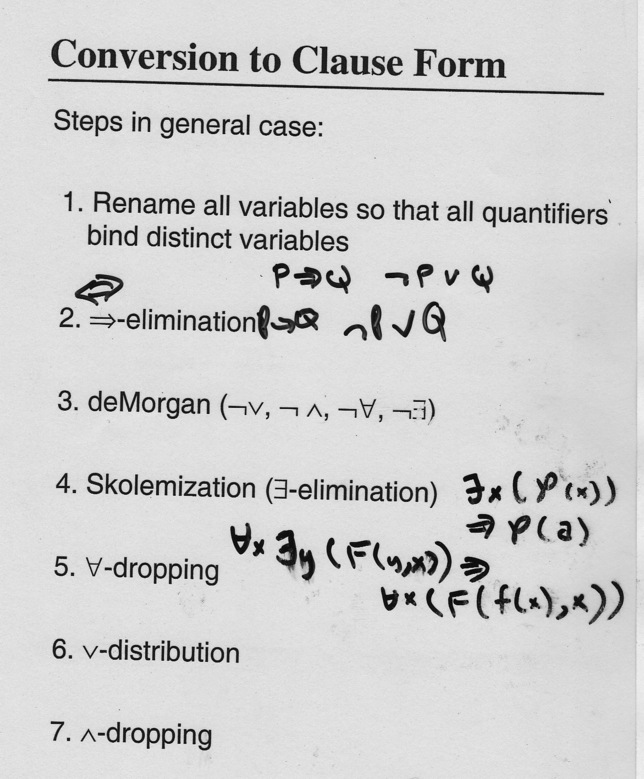

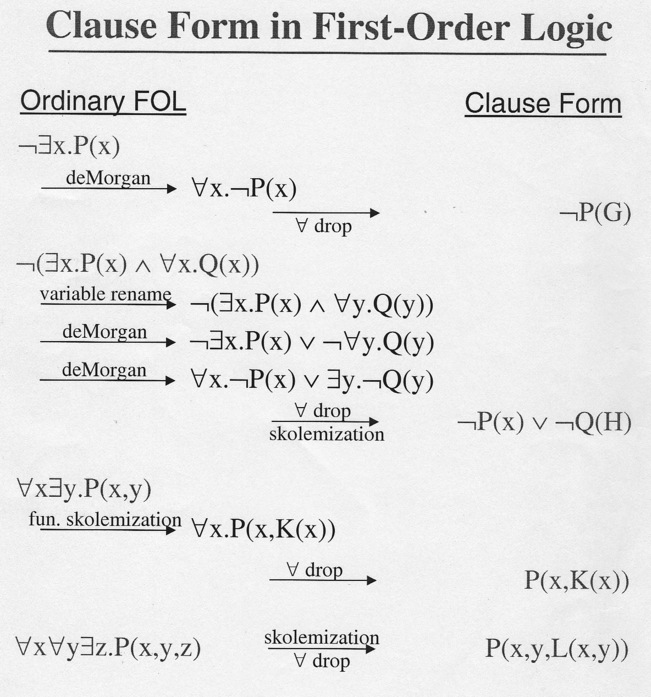

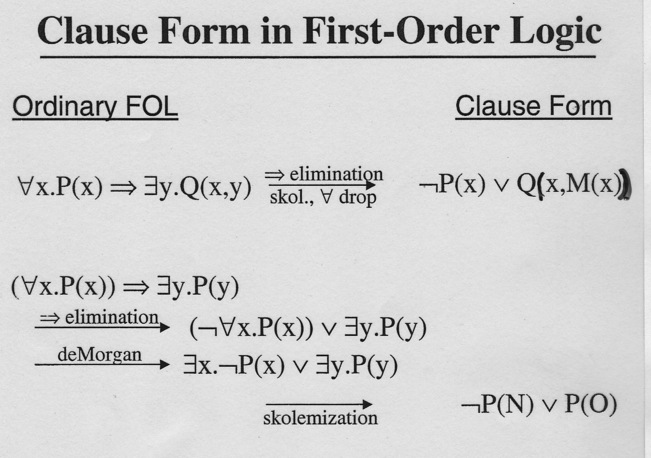

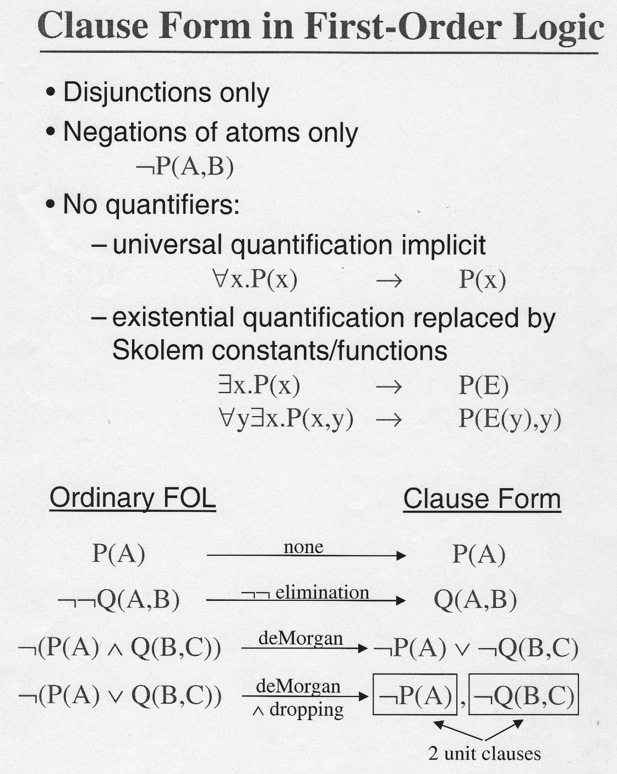

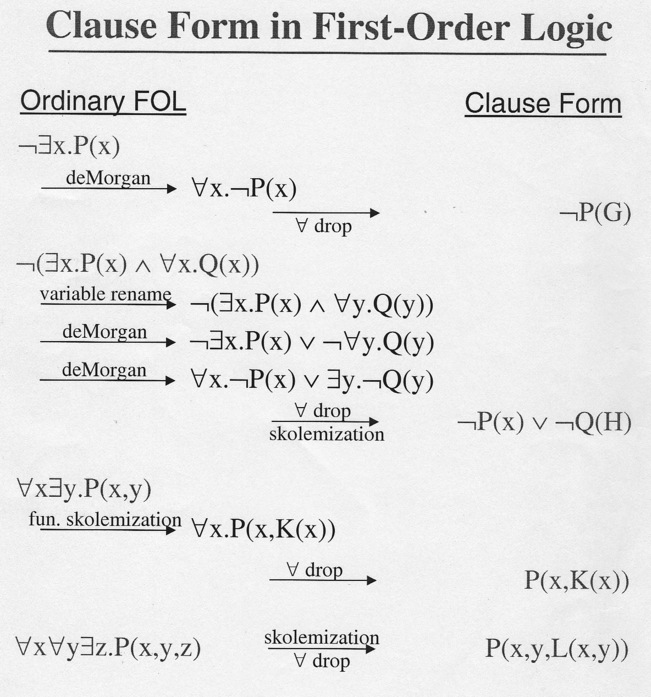

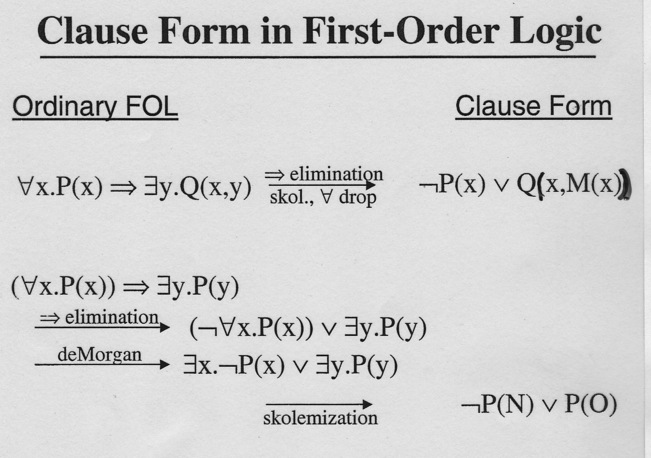

Clause form in FOL 1

Clause form in FOL 2

Clause Form Conversion E.g.

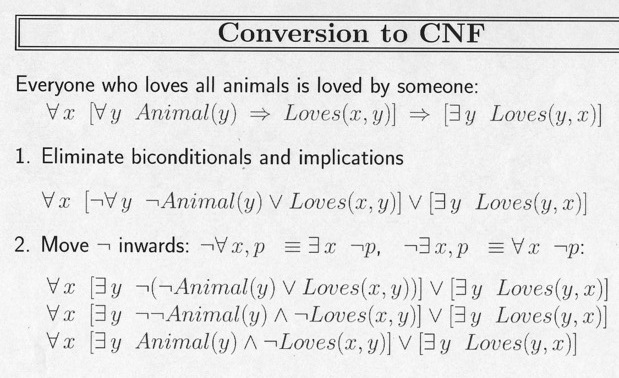

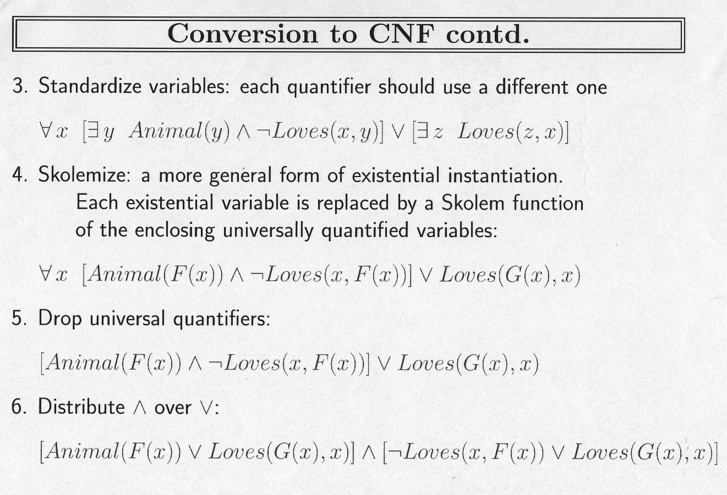

EXISTENTIAL ELIMINATION: SKOLEMIZATION

Existential Quantification asserts at least one individual exists that

satisfies the predicate. The trick is just to make up a name for that

individual and keep track of him like any other individual. If

someone asks "who is that?", the answer is "the one who exists

according to the Existentially Quantified statement."

∃ x (P(x)) → P(Jerry)

This Skolem constant idea also works for ∃ acting over

∀ .

∃ x ∀ y (P(x,y)) → ∀ y P(Fred,y)

Extending the idea to a Skolem function

deals with cases of ∀ acting over

∃, in which case the particular individual who exists depends on

just who gets instantiated in the "forall".

E.g. "Everyone has a

biological father" needs a Skolem function to ensure that the father making the

sentence true depends on who gets substituted in for 'Everyone': Bob's

father may not be Alice's.

∀ x ∃ y (Father(y,x)) →

∀ x Father(father-of(x), x)

Note that such a function has to exist because it is guaranteed by the

∃ in the premise. However we may have no idea what any of its

values are (do I know your father?).
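
The bookkeeping is simple enough to sketch. This assumes the sentence is already in prenex form (all quantifiers out front, distinct variable names); the input format, the function name `skolemize`, and the generated names Sk1, Sk2, ... are all made up for illustration (the slides use friendlier names like Jerry, Fred and father-of).

```python
from itertools import count


def skolemize(prefix, matrix):
    """Drop existential quantifiers from a prenex formula.

    prefix: list of ("forall" | "exists", variable), outermost first.
    matrix: quantifier-free formula as nested tuples, e.g. ("Father", "y", "x").
    Returns (remaining universal variables, rewritten matrix).
    """
    fresh = count(1)            # names are unique only within this one call
    universals, replacement = [], {}
    for quantifier, var in prefix:
        if quantifier == "forall":
            universals.append(var)
        else:
            name = f"Sk{next(fresh)}"
            # No enclosing forall: a Skolem constant.
            # Otherwise: a Skolem function of the enclosing universal variables.
            replacement[var] = (name, *universals) if universals else name

    def replace(t):
        if isinstance(t, tuple):
            return (t[0],) + tuple(replace(a) for a in t[1:])
        return replacement.get(t, t)

    return universals, replace(matrix)


print(skolemize([("exists", "x")], ("P", "x")))
print(skolemize([("exists", "x"), ("forall", "y")], ("P", "x", "y")))
print(skolemize([("forall", "x"), ("exists", "y")], ("Father", "y", "x")))
```

The three calls mirror the three cases above: a bare ∃ gets a constant, ∃ outside ∀ still gets a constant, and ∃ inside ∀ gets a function of the universally quantified variable. A real converter would draw Skolem names from one global supply so different sentences never share them.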

Clause form in FOL E.g. 1

Misprint in 1st line. ¬P(G) should be ¬P(x)

Clause form in FOL E.g. 2

FOPC PROOF EXAMPLE

We may have seen this example proved by forward and backward chaining

earlier...

The law says that it is a crime for an American to sell weapons to

hostile nations. The country Nono, an enemy of America, has some

missiles, and all of its missiles were sold to it by Colonel West, who

is an American.

To Prove: West is a criminal.

FORMALIZE

"it is a crime for an American to sell weapons to

hostile nations."

American(x) ∧ Weapon(y) ∧ Sells(x,y,z) ∧ Hostile(z)

→ Criminal(x).

"Nono... has some missiles"

∃ x. Owns(Nono,x) ∧ Missile(x): by EElim to:

Owns(Nono, M1)

Missile(M1)

"all of its missiles were sold to it by Colonel West"

Missile(x) ∧ Owns(Nono,x) → Sells(West, x, Nono)

"West, who is American..."

American(West)

"The country Nono, an enemy of America"

Enemy(Nono, America)

FORMALIZE, CONT.

As is usual, to understand the story or do the proof we need to know

extra taken-for-granted facts.

Missiles are weapons:

Missile(x) → Weapon(x)

Enemies count as hostile:

Enemy(x, America) → Hostile(x)

This knowledge base contains no functions, which makes inference

easier. It's also all Horn clauses. Nine resolutions are needed...

pretty big binary tree.

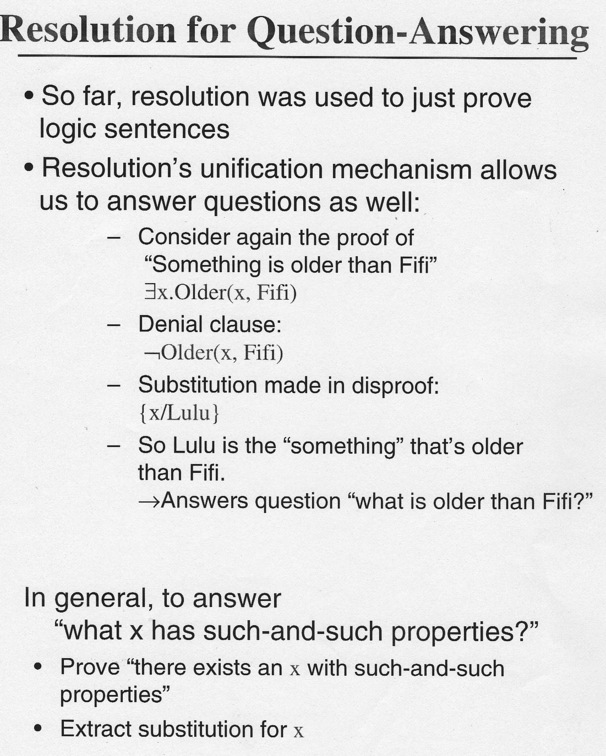

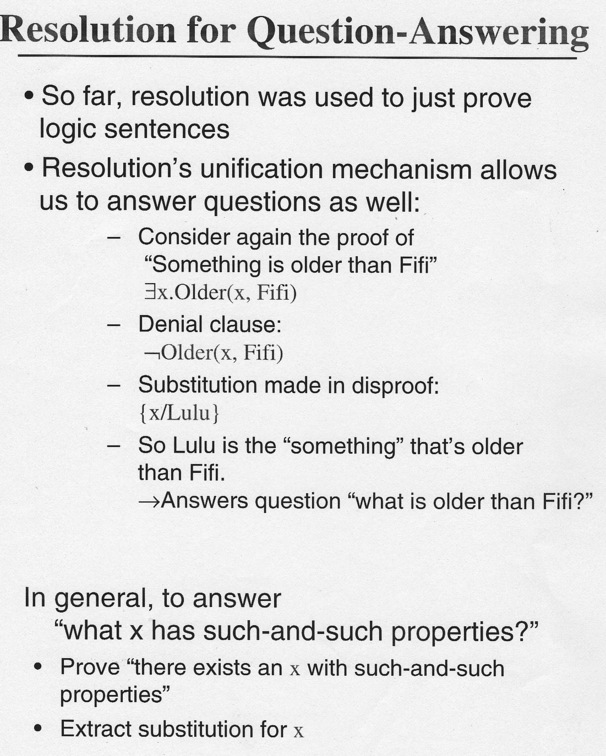

Question-Answering and Negation of Conclusion

Back in Proof E.g. 3, imagine we wanted to prove "Something is older

than Fifi". That is

∃ x.Older(x,Fifi).

Denial: ∼ ∃ x.Older(x,Fifi), or

∀ x. ∼ Older(x,Fifi).

In clause form: ∼ Older(x,Fifi).

Now the last step in that proof would have been:

Older(Lulu,Fifi), ∼ Older(x,Fifi)

{x\Lulu}

∅

So yes, something is older, and if we keep track of substitutions as

in question-answering mode, it's Lulu.

BUT! Don't make the mistake of first forming the clause from

the conclusion and then denying it!

Conclusion: ∃ x.Older(x,Fifi).

Clause form: Older(C, Fifi)

Denial: ∼ Older(C, Fifi)

This last clause will NOT UNIFY with

Older(Lulu,Fifi)! (C is a Skolem constant, not a variable, so it cannot be bound to Lulu.)
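
A quick way to convince yourself: a throwaway unifier for function-free atoms, run on both versions of the denial. The encoding is an assumption for illustration (atoms as tuples, lowercase strings as variables, so the Skolem constant C counts as a constant), and `unify_flat` is a hypothetical name.

```python
def walk(t, s):
    """Follow variable bindings in s to their current value."""
    while t in s:
        t = s[t]
    return t


def unify_flat(a, b):
    """Unify two function-free atoms (tuples of strings); None on failure."""
    if a[0] != b[0] or len(a) != len(b):
        return None
    s = {}
    for x, y in zip(a[1:], b[1:]):
        x, y = walk(x, s), walk(y, s)
        if x == y:
            continue
        if x[0].islower():       # x is a variable: bind it
            s[x] = y
        elif y[0].islower():     # y is a variable: bind it
            s[y] = x
        else:                    # two different constants never match
            return None
    return s


fact = ("Older", "Lulu", "Fifi")
right_denial = ("Older", "x", "Fifi")   # deny first, then convert
wrong_denial = ("Older", "C", "Fifi")   # convert (Skolemize) first, then deny
print(unify_flat(fact, right_denial))
print(unify_flat(fact, wrong_denial))
```

The first call binds x to Lulu; the second fails because Lulu and C are distinct constants.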

RTP Recap

- Fact: Resolution is universal, sound, complete.

- Fact: Using it, we can detect an inconsistent set of clauses in finite time (a consistent set may keep us resolving forever).

- So...Given set of FOL sentences, (∀, ∃, ⇒, etc.)

- Convert sentences to CNF

- Negate the desired conclusion, convert the negation to clauses, and add them. (Negate first, then convert:

not the other order!)

- Resolve your head off

- Use Heuristics, prayer?

- Find ∅ (you hope)

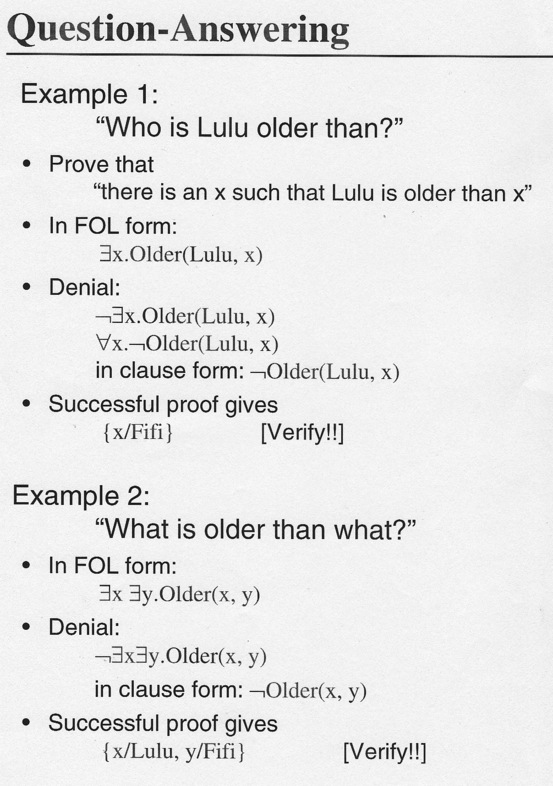

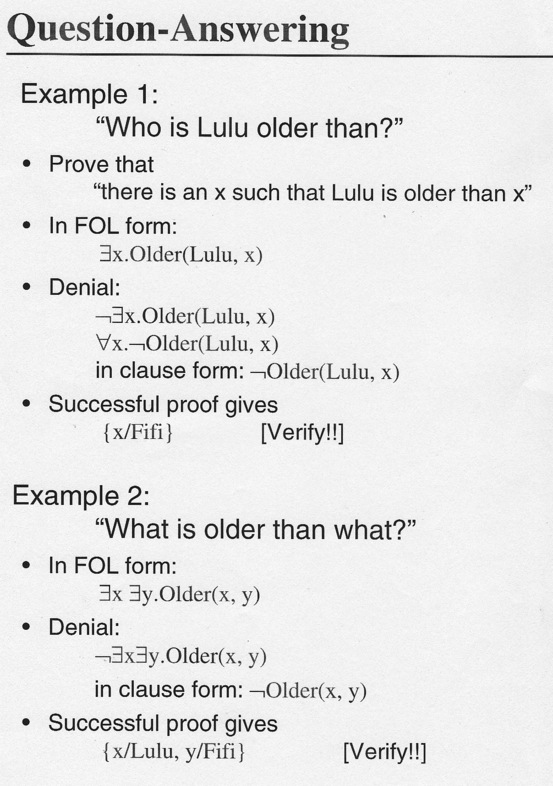

Question-Answering 1

Question-Answering E.g.

Question-Answering 2 (mostly Len Schubert)

Yes-No Questions: Make "parallel" attempts to prove or disprove

the proposition: If P? is the proposition, refuting

¬ P means "Yes" and refuting

P means "No".

Answer Extraction: With "Wh- questions" (or "fill in the

blank" questions), method is to tack ∨ Ans(x) onto the denial,

where x is the variable whose instantiation answers the

"question" (usually the assertion "There is an x such that it's an

answer").

Then try to derive

∅ ∨ Ans(my_answer).

Planning:

We can also see that a plan for a sequence of actions can be

formalized in FOL, and then we can assert "you can't get to the goal

from this initial state". The contradiction to that is a set of

bindings,

as in question answering, that show how you can achieve the goal from

your starting state. Of course, need to represent time somehow.

Answer Extraction Example

Carol's wherever Bob is. Bob's at the dance. Where's Carol?

Formalize:

1. ∀ x.At(Bob,x) → At(Carol,x)

2. At(Bob, Dance)

∃ x. At(Carol,x)

% "Question": Carol's at x for some x

∼ ∃ x. At(Carol,x) % Denial. Eliminate ∃:

3. ∀ x. ∼ At(Carol,x) % Denial

Clauses, Refutation and Answer Extraction

C1. ∼ At(Bob,x) ∨ At(Carol,x) % from 1.

C2. At(Bob, Dance) % ≡ 2.

C3. ∼ At(Carol,x) ∨ Ans(x)

% Denial + Extraction Clause

C4. At(Carol, Dance) % r[C1, C2]

C5. ∅ ∨ Ans(Dance) % r[C3, C4]
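
The refutation above can be run by a toy prover. This is a rough sketch, not a serious RTP system: it assumes atoms are nested tuples, lowercase strings are variables, a clause is a list of (is_positive, atom) pairs, and it searches breadth-first from the denial. All function names are hypothetical.

```python
from itertools import count


def is_var(t):
    return isinstance(t, str) and t[0].islower()


def walk(t, s):
    while is_var(t) and t in s:
        t = s[t]
    return t


def occurs(v, t, s):
    t = walk(t, s)
    return t == v or (isinstance(t, tuple) and any(occurs(v, a, s) for a in t[1:]))


def unify(a, b, s):
    """Extend substitution s so that a and b match; None if impossible."""
    a, b = walk(a, s), walk(b, s)
    if a == b:
        return s
    if is_var(a):
        return None if occurs(a, b, s) else {**s, a: b}
    if is_var(b):
        return None if occurs(b, a, s) else {**s, b: a}
    if isinstance(a, tuple) and isinstance(b, tuple) and a[0] == b[0] and len(a) == len(b):
        for x, y in zip(a[1:], b[1:]):
            s = unify(x, y, s)
            if s is None:
                return None
        return s
    return None


def subst(t, s):
    t = walk(t, s)
    return (t[0],) + tuple(subst(a, s) for a in t[1:]) if isinstance(t, tuple) else t


_ids = count()


def rename(clause):
    """Standardize apart: suffix every variable with a fresh number."""
    n = next(_ids)

    def r(t):
        if isinstance(t, tuple):
            return (t[0],) + tuple(r(a) for a in t[1:])
        return f"{t}_{n}" if is_var(t) else t

    return [(sign, r(atom)) for sign, atom in clause]


def resolvents(c1, c2):
    """Binary resolvents of two clauses."""
    c2, out = rename(c2), []
    for i, (sign1, atom1) in enumerate(c1):
        for j, (sign2, atom2) in enumerate(c2):
            theta = unify(atom1, atom2, {}) if sign1 != sign2 else None
            if theta is not None:
                rest = c1[:i] + c1[i + 1:] + c2[:j] + c2[j + 1:]
                out.append([(sg, subst(a, theta)) for sg, a in rest])
    return out


def extract_answer(kb, denial, limit=1000):
    """Search from the denial until a clause with only Ans literals appears."""
    known, frontier = list(kb), [denial]
    for _ in range(limit):
        if not frontier:
            return None
        clause = frontier.pop(0)
        if all(atom[0] == "Ans" for _, atom in clause):
            return [atom for _, atom in clause]
        for other in known:
            frontier.extend(resolvents(clause, other))
        known.append(clause)
    return None


kb = [[(False, ("At", "Bob", "x")), (True, ("At", "Carol", "x"))],   # C1
      [(True, ("At", "Bob", "Dance"))]]                              # C2
denial = [(False, ("At", "Carol", "x")), (True, ("Ans", "x"))]       # C3
print(extract_answer(kb, denial))
```

The search happens to resolve C3 with C1 first and then with C2, a different order from the proof above, but it ends at the same place: a clause holding only Ans(Dance). The Ans literal is never resolved away because no clause contains its negation.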

"Good" and "Bad" True Answers

Everyone works for someone. Bob works for Carol. Who does Bob work

for?

Formalize:

1. ∀ x ∃ y.Works-for(x,y)

2. Works-for(Bob,Carol)

Q: ∃ x.Works-for(Bob,x)

Denial: ∀ x. ∼ Works-for(Bob,x)

First Proof:

C1. Works-for(x, employer-of(x))

% from 1., with Skolem function

C2. Works-for(Bob,Carol) % ≡ 2

C3. ∼ Works-for(Bob,x) ∨ Ans(x) % Denial + Ans. Ext

C4. ∅ ∨ Ans(employer-of(Bob)) % r[C1, C3]

Nothing wrong with this, but may be considered less satisfactory than

Second Proof:

C4' ∅ ∨ Ans(Carol) % r[C2, C3]

Don't need semantics to see it's probably a good idea to try to get

Skolem-free answers by preferring inferences that don't

substitute Skolem constants or functions into the answer. Sometimes

not possible of course.

Getting Multiple Answers

Probably the easiest way to imagine how to do this is Prolog's backtracking

algorithm: typing ; causes a fake fail, which

forces backtracking to see if there is another instantiation that

yields another answer.

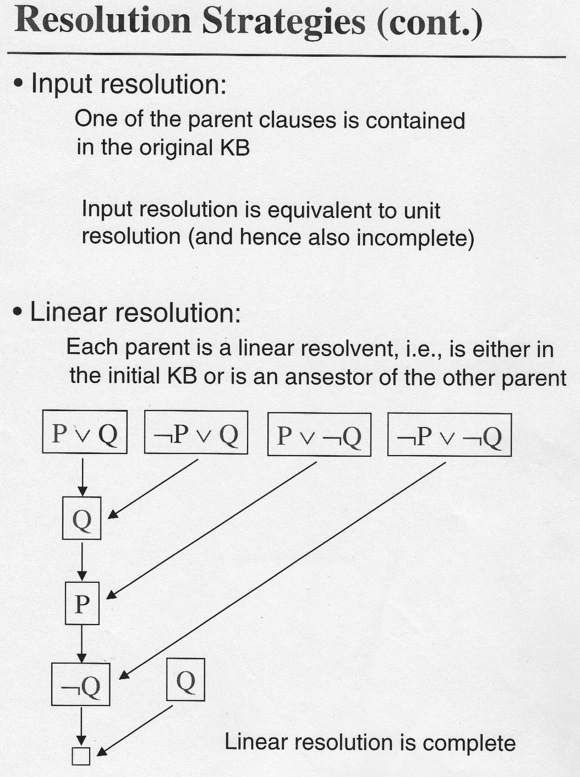

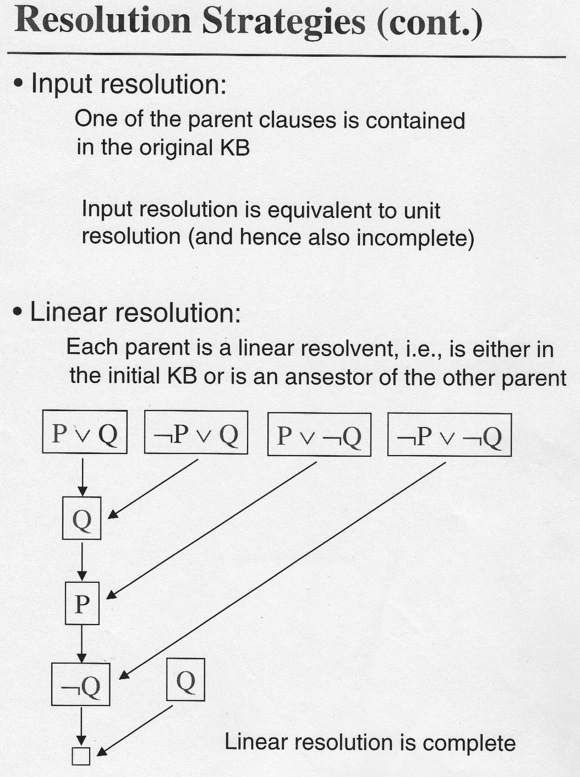

Resolution Strategies

Problem: what resolutions to do? Easy to flail around with rewriting

rules: algebraic "simplification" has rules like

A+0 = A ,

as well as "+ is commutative", so LOTS of useless or harmful choices!

Further, every resolution makes KB larger: all clauses are true and

none goes away (in 'monotonic logic', which we're talking about).

Although we've known WHAT to do since 1965, HOW to do it is still a

research issue, like AI in general (by definition). Some ideas...

- Backward chaining: (e.g. Prolog, but Horn clauses are a simpler

(linear) inference problem anyway.) Reason backward from goal in

hopes that subgoal solutions don't interact 'badly'.

- Unit resolution: One of the parents is chosen to be

a single-literal clause ("fact"). Resultant clause is one shorter

(closer to null clause?). A greedy method, and not complete for FOL.

- Horn Clauses: Pretty general (Prolog), inference linear

in size of KB (in the propositional case).

- Set of Support: Given a set of clauses Γ, a set of

support resolvent of Γ is one with at least one parent that is either a clause in

Γ or a descendant of such a clause. So...always use the

denial clause or a descendant of the denial clause as one parent. Idea

is to focus proof by always using (maybe indirectly)

what we're trying to prove, rather than grinding

true-but-maybe-irrelevant

KB facts and rules together.
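
As a concrete illustration of the set-of-support idea, here is a propositional sketch. The encoding is assumed for illustration (clauses as frozensets of literal strings, "~" for negation) and `sos_refute` is a hypothetical name; the example clauses are the small propositional KB with givens ∼R, ∼P ∨ Q, P ∨ R, P ∨ S and denial ∼Q.

```python
def negate(literal):
    return literal[1:] if literal.startswith("~") else "~" + literal


def resolve(c1, c2):
    """All resolvents of two clauses (frozensets of literal strings)."""
    return [(c1 - {lit}) | (c2 - {negate(lit)}) for lit in c1 if negate(lit) in c2]


def sos_refute(kb, support):
    """Refute using only steps where one parent descends from `support`."""
    kb, sos = set(kb), set(support)
    agenda = list(sos)
    while agenda:
        clause = agenda.pop()               # always a supported clause
        for other in kb | sos:              # the partner may be anything
            for resolvent in resolve(clause, other):
                if not resolvent:
                    return True             # empty clause found
                if resolvent not in sos and resolvent not in kb:
                    sos.add(resolvent)      # descendants are supported too
                    agenda.append(resolvent)
    return False


kb = [frozenset({"~R"}), frozenset({"~P", "Q"}),
      frozenset({"P", "R"}), frozenset({"P", "S"})]
print(sos_refute(kb, [frozenset({"~Q"})]))
print(sos_refute(kb, [frozenset({"S"})]))
```

Two KB clauses are never resolved with each other, so a resolvent such as P from ∼R and P ∨ R is never generated; the proof instead runs ∼Q, ∼P, R, ∅. The strategy stays complete as long as the clauses outside the support set are themselves consistent.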

Two More Strategies

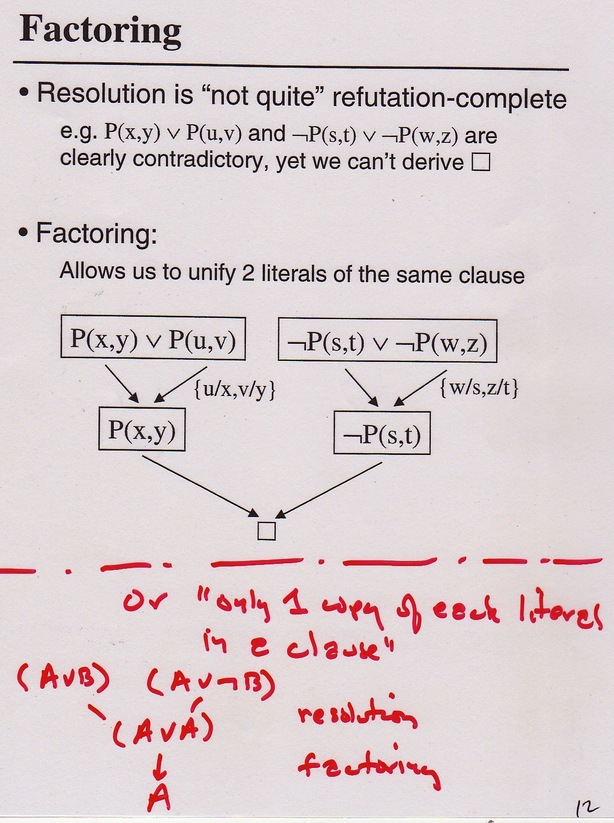

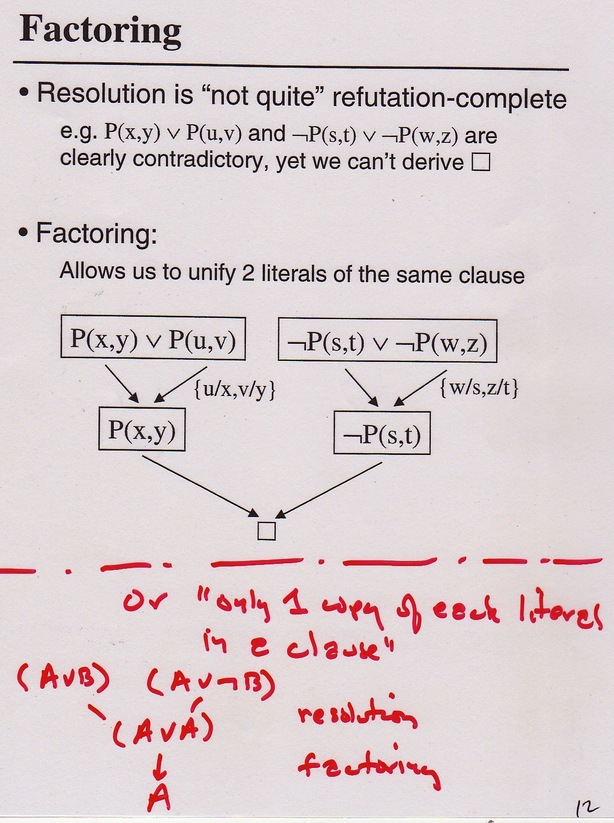

Extending FOL 1: Factoring

Suppose we have this KB:

1. P(x,y) ∨ P(u,v)

2. (∼ P(s,t)) ∨ (∼ P(w,z))

Clearly 1. asserts the same thing twice, and 2. asserts its

opposite twice. Must have a contradiction, so should be able

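to derive ∅. BUT... resolve the two clauses,

say with {x\s, y\t}, and we get

P(u,v) ∨ ∼ P(w,z), another two-literal clause. Binary resolution on

two-literal clauses only ever yields two-literal clauses, so we never reach ∅. Oops.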

So we need yet another inference rule: FOL factoring.
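
A sketch of factoring for the little KB above: unify two same-sign literals within one clause and collapse the copies. It is restricted, by assumption, to function-free atoms; atoms are tuples, lowercase strings are variables, and `unify_atoms` and `factors` are hypothetical names.

```python
def walk(t, s):
    """Follow variable bindings in s to their current value."""
    while t in s:
        t = s[t]
    return t


def unify_atoms(a, b):
    """MGU of two function-free atoms (tuples of strings), or None."""
    if a[0] != b[0] or len(a) != len(b):
        return None
    s = {}
    for x, y in zip(a[1:], b[1:]):
        x, y = walk(x, s), walk(y, s)
        if x == y:
            continue
        if x[0].islower():
            s[x] = y
        elif y[0].islower():
            s[y] = x
        else:
            return None
    return s


def factors(clause):
    """Every factor obtained by unifying two same-sign literals of the clause."""
    out = []
    for i, (sign1, atom1) in enumerate(clause):
        for sign2, atom2 in clause[i + 1:]:
            theta = unify_atoms(atom1, atom2) if sign1 == sign2 else None
            if theta is None:
                continue
            new = []
            for sign, atom in clause:
                lit = (sign, (atom[0],) + tuple(walk(t, theta) for t in atom[1:]))
                if lit not in new:          # unified literals collapse to one
                    new.append(lit)
            out.append(new)
    return out


clause1 = [(True, ("P", "x", "y")), (True, ("P", "u", "v"))]
clause2 = [(False, ("P", "s", "t")), (False, ("P", "w", "z"))]
f1, f2 = factors(clause1)[0], factors(clause2)[0]
print(f1, f2)
# The two unit factors are complementary and unifiable, so they resolve to the empty clause.
print(unify_atoms(f1[0][1], f2[0][1]) is not None)
```

Each clause factors down to a single literal, and the two unit clauses then cancel, giving the contradiction that binary resolution alone could not find.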

Typical Factoring Example (Len Schubert)

P(y,x,A) ∨ P(F(y),z,z) ∨ P(F(A),B,x)

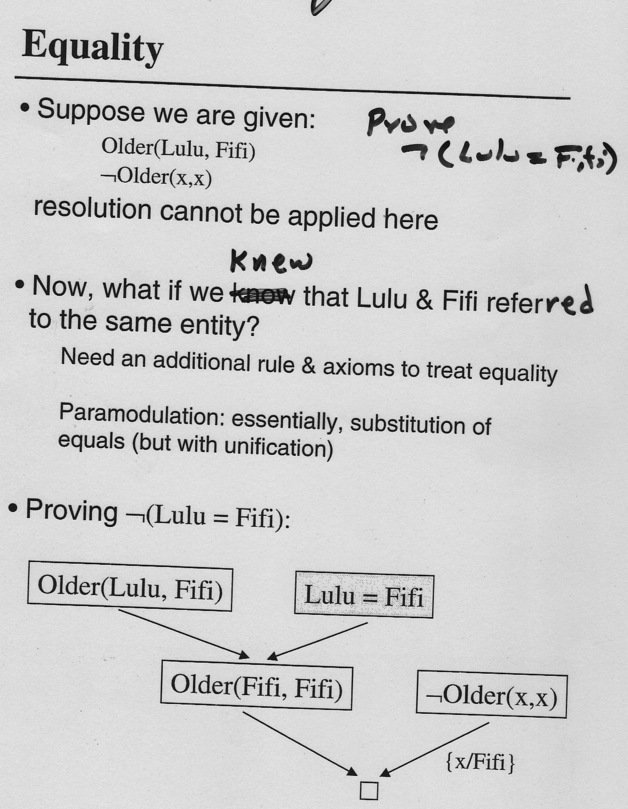

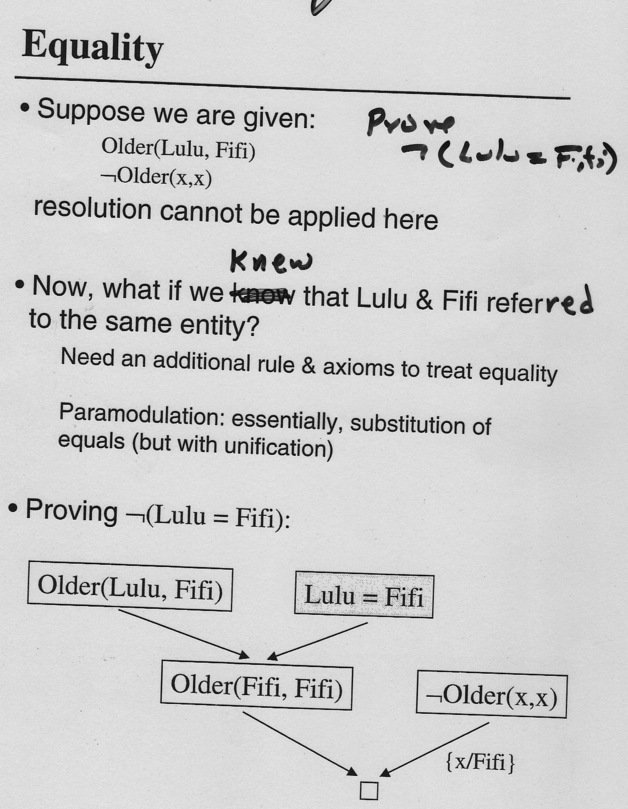

Extending FOL 2: Using Equality

FOL not "equality friendly", so a raft of techniques, usually with

"modulation" in their name for some reason (e.g. paramodulation, demodulation), are tacked on.

Extending FOL 3, 4: Modal and Non-Monotonic Logics

Problem 1:

1. I believe I'm seeing the morning star (which BTW is usually Venus).

2. It's true whether I know it or not that this month the morning star

is Mercury.

3. Thus I must believe I'm seeing Mercury. (with usual "equality"

axioms as above.)

That's just not true. We need "modal" logical operators, with

axiom sets designed to deal with the elusive nature of belief (and

lots of other phenomena). Nixon the war-monger was a

Quaker, for instance: people are quite comfy living with totally incompatible

beliefs. As the White Queen says:

" Why, sometimes I've believed as many as six impossible things before

breakfast."

Problem 2:

I head out from CSB to the airport and it turns out the Elmwood St. Bridge

is closed. I'm surprised and discombobulated: It seems

I had assumed something that wasn't true. In FOL we

don't have assumptions, or any sort of "mistaken fact", but

in real life we need them.

We'd like to "take back" "wrong facts" and their related conclusions,

and re-reason. FOL as we know it

is monotonic (KB is true, all facts inferred from it are

entailed by it, period!). So we need a non-monotonic logic, and

this brings up cool ideas like default logic, which can use the

"default fact" that all birds fly, but lets us deal with the fact that

Tweety (the bird) doesn't fly once we're told she's

a penguin. A related complex technique in logic, not so cool IMO,

is circumscription.

In the world of AI Planning, this sort of thing is easily implemented

with a different inference and knowledge-representation system

(early, canonical STRIPS system). Sussman invented the Scheme

language largely to get its "continuation" mechanism. He then wrote

CONNIVER (thesis), a successor to Hewitt's PLANNER (thesis), to allow 'teleporting' out

of states that are found to be based on bad facts and full of false

conclusions, over to other lines of reasoning free of those mistakes.

BTW, PLANNER pretty much introduced today's idea of methods, calling them

"actors".